The book explores the technical as well as cultural imaginaries of programming from its insides. It follows the principle that the growing importance of software requires a new kind of cultural thinking — and curriculum — that can account for, and with which to better understand the politics and aesthetics of algorithmic procedures, data processing and abstraction. It takes a particular interest in power relations that are relatively under-acknowledged in technical subjects, concerning class and capitalism, gender and sexuality, as well as race and the legacies of colonialism. This is not only related to the politics of representation but also nonrepresentation: how power differentials are implicit in code in terms of binary logic, hierarchies, naming of the attributes, and how particular worldviews are reinforced and perpetuated through computation. Using p5.js, it introduces and demonstrates the reflexive practice of aesthetic programming, engaging with learning to program as a way to understand and question existing technological objects and paradigms, and to explore the potential for reprogramming wider eco-socio-technical systems. The book itself follows this approach, and is offered as a computational object open to modification and reversioning.

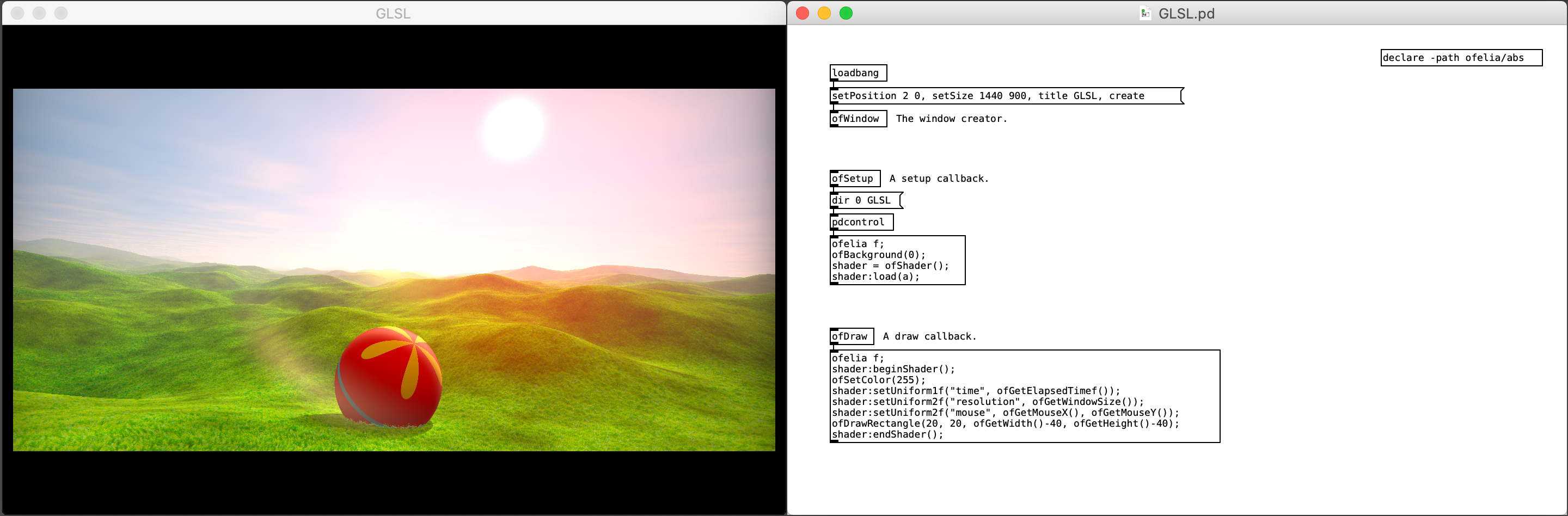

Ofelia is a Pd external which allows you to use openFrameworks and Lua within a real-time visual programming environment for creating audiovisual artwork or multimedia applications such as games.

openFrameworks is an open source C++ toolkit for creative coding.

Lua is a powerful, efficient, lightweight, easy-to-learn scripting language.

Pure Data (Pd) is a real-time visual programming language for multimedia.

Thanks to the Lua scripting feature, you can write text code directly in a Pd patch or through a text editor, which makes it easier to solve problems that are complicated to express in visual programming languages like Pd. And unlike compiled languages like C/C++, you can see the result immediately as you change the code, which enables a faster workflow. Moreover, you can use openFrameworks functions and classes within a Lua script.

Using Ofelia, you can flexibly choose between patching and coding style based on your preference.

The external is available to be used under macOS, Windows, Linux and Raspberry Pi.

A free educational site that progressively introduces you to the world of computer graphics.

Our application programming approach guides you through small, easy-to-compile programs.

We’ve dispensed with unnecessary technical jargon in favor of everyday language.

Tooll 3 is an open source software to create realtime motion graphics. We are targeting the sweet spot between real-time rendering, graph-based procedural content generation and linear keyframe animation and editing. This combination allows…

artists to build audio reactive vj content

use advanced interfaces for exploring parameters

or to combine keyframe animation with automation

Technical artists can also dive deeper and use Tooll for advanced development of fragment or compute shaders, or to add input from MIDI controllers and sensors or sources like OSC or Spout.

We strongly believe in usability and intuitive and beautiful interface design. That's why we experiment with different approaches before striking the right balance between usability and powerful flexibility. Tooll version 3 is currently in ongoing development. It's stable enough to produce high-end visuals, create motion graphics, use many industry-standard features like color correction, scopes and tone mapping, and export small standalone executables.

Have you ever wanted to ...

– export 10,000 mass-customized copies of your InDesign document?

– use spatial-tiling algorithms to create your layouts?

– pass real-time data from any source directly into your InDesign project?

– create color palettes based on algorithms?

– or simply reconsider what print can be?

basil.js is ...

– making scripting in InDesign available to designers and artists.

– in the spirit of Processing and easy to learn.

– based on JavaScript and extends the existing API of InDesign.

– a project by The Basel School of Design in Switzerland.

– released as open source in February 2013!

Hydra is a platform for live coding visuals, in which each connected browser window can be used as a node of a modular and distributed video synthesizer.

Built using WebRTC (peer-to-peer web streaming) and WebGL, hydra allows each connected browser/device/person to output a video signal or stream, and receive and modify streams from other browsers/devices/people. The API is inspired by analog modular synthesis, in which multiple visual sources (oscillators, cameras, application windows, other connected windows) can be transformed, modulated, and composited via combining sequences of functions.

Features:

Written in JavaScript and compatible with other JavaScript libraries

Available as a platform as well as a set of standalone modules

Cross-platform and requires no installation (runs in the browser)

Also available as a package for live coding from within the Atom text editor

Experimental and forever evolving !!

Cables is your model kit for creating beautiful interactive content. With an easy to navigate interface and results in real time, it allows for fast prototyping and prompt adjustments.

Working with cables is just as easy as creating cable spaghetti:

You are provided with a given set of operators such as mathematical functions, shapes and materials.

Connect these to each other using virtual cables to create the scene you have in mind.

Easily export your piece of work at any time. Embed it into your website or use it for any kind of creative installation.

Welcome to Exploring Technology, a wishful remedy to the increasing knowledge gap between machine builders and machine users.

Learn about :

A-Frame

Arduino

AxiDraw

Bitsy

Cables

Cinema 4D

Circuit

GitHub

Colab

Glitch

Hubs

Hydra

Laser Cutting

Lightform

Lights

Machine Learning

Makey Makey

NFT

Node

Photogrammetry

Processing

Projectors

Raspberry Pi

Resolume

Tone

Spark

Web

Carefully curated list of awesome creative coding resources primarily for beginners/intermediates :

To the extent possible under law, Terkel Gjervig has waived all copyright and related or neighboring rights to this work.

OPENRNDR is a tool to create tools. It is an open source framework for creative coding, written in Kotlin for the Java VM that simplifies writing real-time interactive software. It fully embraces its existing infrastructure of (open source) libraries, editors, debuggers and build tools. It is designed and developed for prototyping as well as the development of robust performant visual and interactive applications. It is not an application, it is a collection of software components that aid the creation of applications.

Key features

a light weight application framework to quickly get you started

a fast OpenGL 3.3 backed drawer written using the LWJGL OpenGL bindings

a set of minimal and clean APIs that welcome programming in a modern style

an extensive shape drawing and manipulation API

asynchronous image loading

runs on Windows, macOS and Linux

Ecosystem

Applications written in OPENRNDR can communicate with third-party tools and services, either using OPENRNDR’s functionality or via third-party Java libraries.

Existing use cases involve connectivity with devices such as Arduino, Philips Kinet, Microsoft Kinect 2.0, RealSense, DMX, ARTNet and Midi devices; applications that communicate through OpenSoundControl; services such as weather reports and Twitter. If you want to experiment with Machine Learning, try RunwayML that comes with an OPENRNDR integration.

A platform for interactive spaces, interactive environments, interactive objects and prototyping.

tramontana leverages the capabilities of the object that we have all come to carry with us anywhere, all the time, our smartphones. With libraries for Processing, Javascript and openFrameworks you can access the inputs and outputs of one or more smartphones to easily and quickly prototype interactive spaces, connected products or just something you’d like to be wireless. What used to involve complex tasks like networking, native app development, etc. can now be created with a single sketch on your computer.

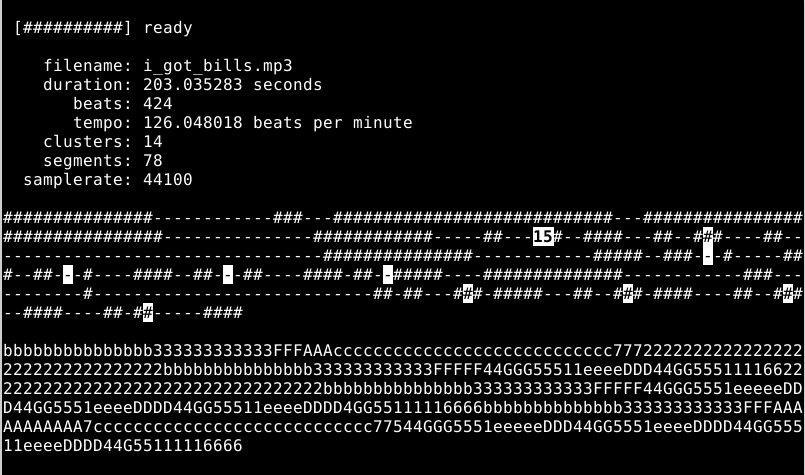

Creates an infinite remix of an audio file by finding musically similar beats and computing a randomized play path through them. The default choices should be suitable for a variety of musical styles. This work is inspired by the Infinite Jukebox (http://www.infinitejuke.com) project created by Paul Lamere.

It groups musically similar beats of a song into clusters and then plays a random path through the song that makes musical sense but does not repeat. It will do this infinitely.
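The idea of a random play path through clusters of similar beats can be sketched as follows. This is a minimal illustration, not the project's actual implementation: the cluster labels are assumed to be given already (one label per beat), and `build_jump_table`, `play_path` and `jump_probability` are hypothetical names chosen for this example.

```python
import random
from collections import defaultdict


def build_jump_table(cluster_ids):
    """Map each beat index to the other beats that share its cluster."""
    members = defaultdict(list)
    for beat, cluster in enumerate(cluster_ids):
        members[cluster].append(beat)
    return {
        beat: [b for b in members[cluster] if b != beat]
        for beat, cluster in enumerate(cluster_ids)
    }


def play_path(cluster_ids, jump_probability=0.25, seed=None):
    """Yield beat indices forever, occasionally jumping via a similar beat."""
    rng = random.Random(seed)
    jumps = build_jump_table(cluster_ids)
    beat = 0
    while True:
        yield beat
        candidates = jumps[beat]
        if candidates and rng.random() < jump_probability:
            # Pretend we just played a similar-sounding beat instead,
            # so playback continues with whatever follows that beat.
            beat = rng.choice(candidates)
        beat = (beat + 1) % len(cluster_ids)
```

Because every jump lands on the beat that follows a similar-sounding one, each transition stays plausible while the overall path keeps changing.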

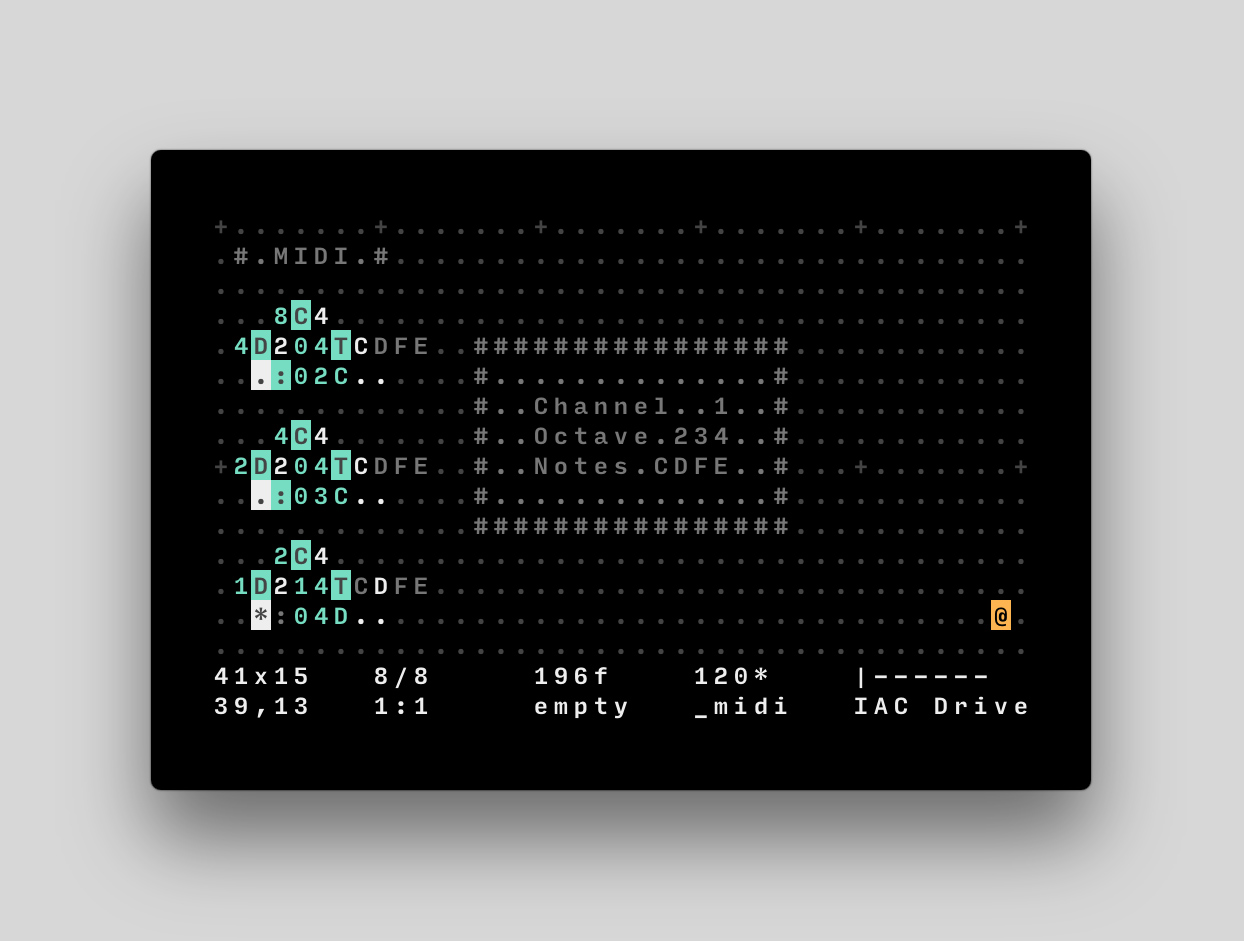

Orca is an esoteric programming language designed by @hundredrabbits to create procedural sequencers.

This playground lets you use Orca and its companion app Pilot directly in the browser and allows you to publish your creations by sharing their URL.

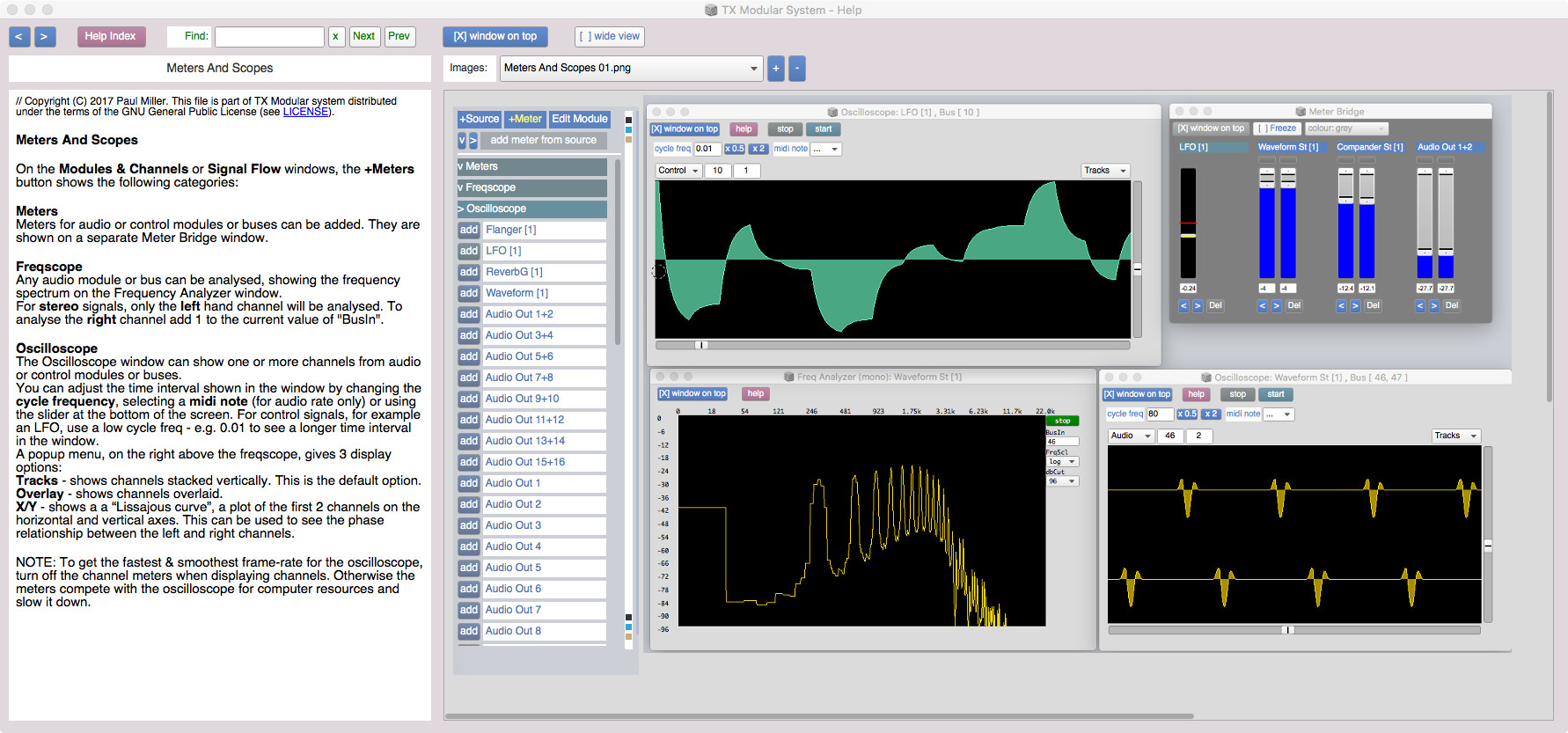

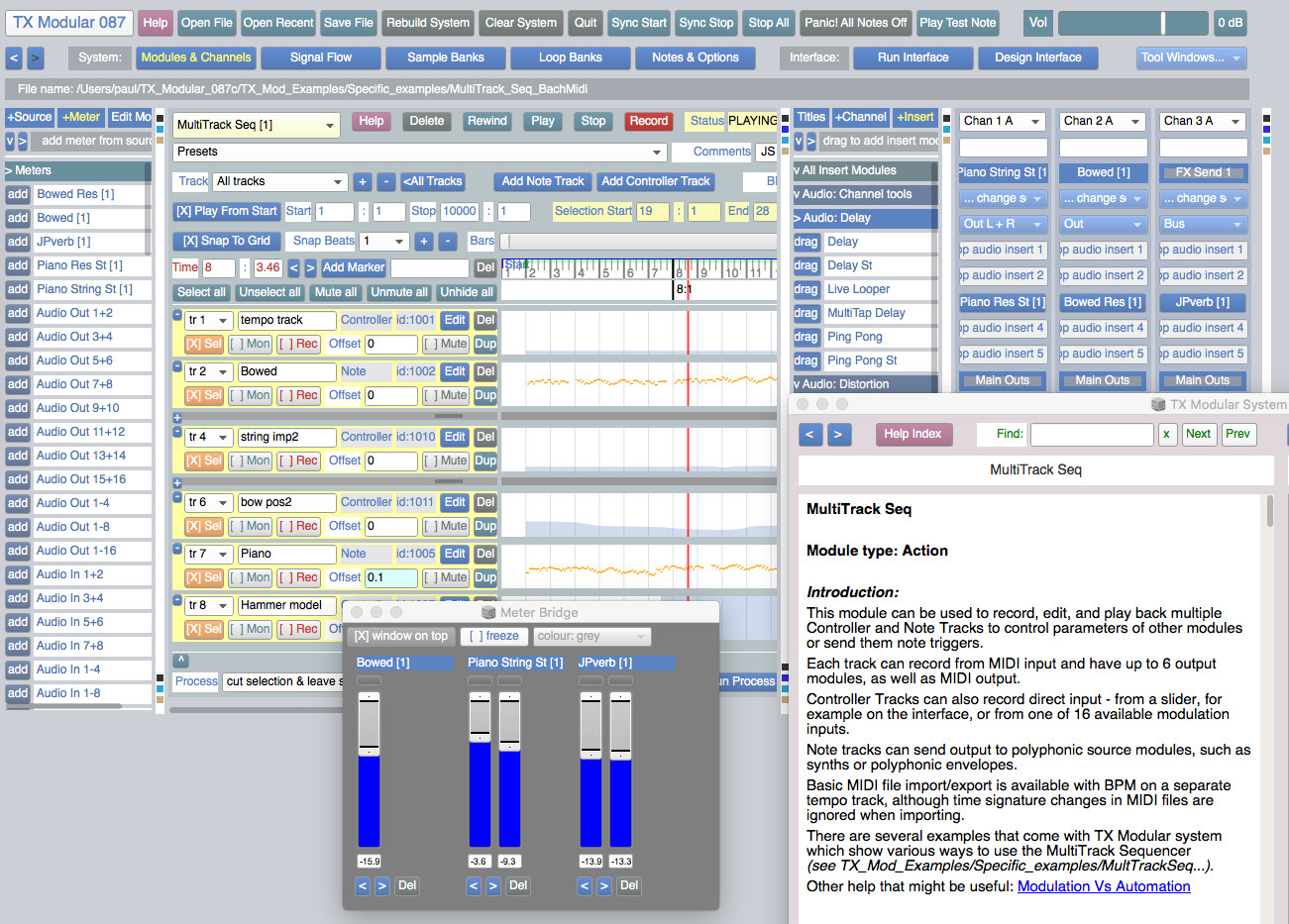

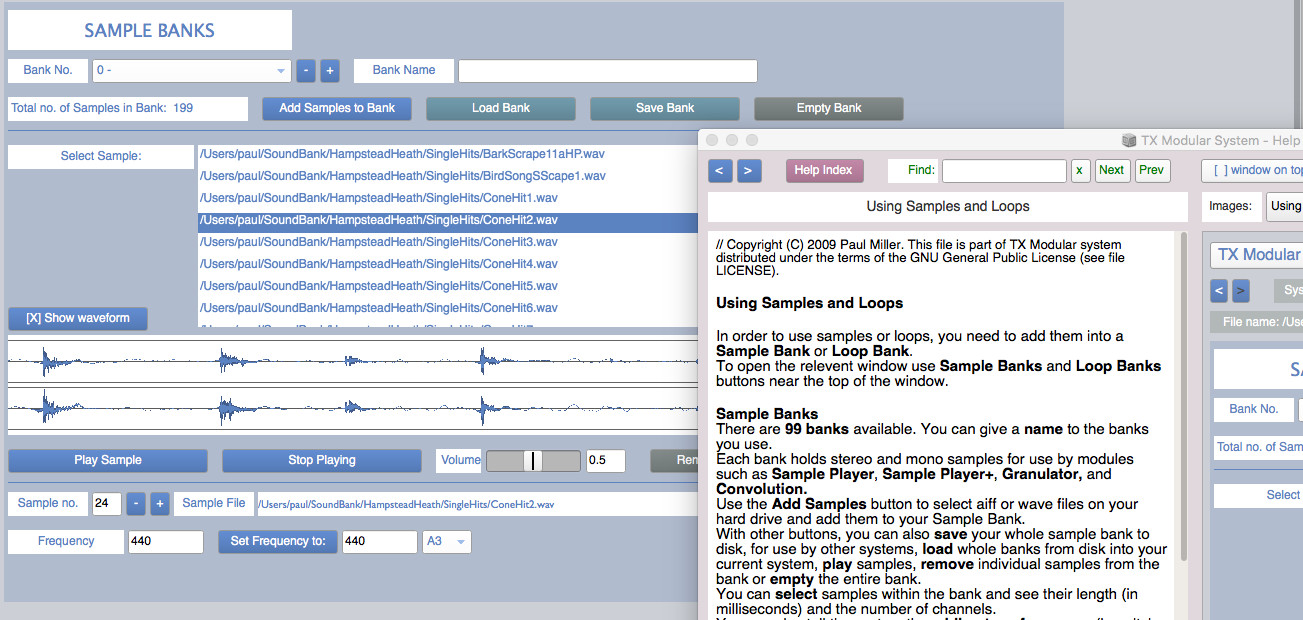

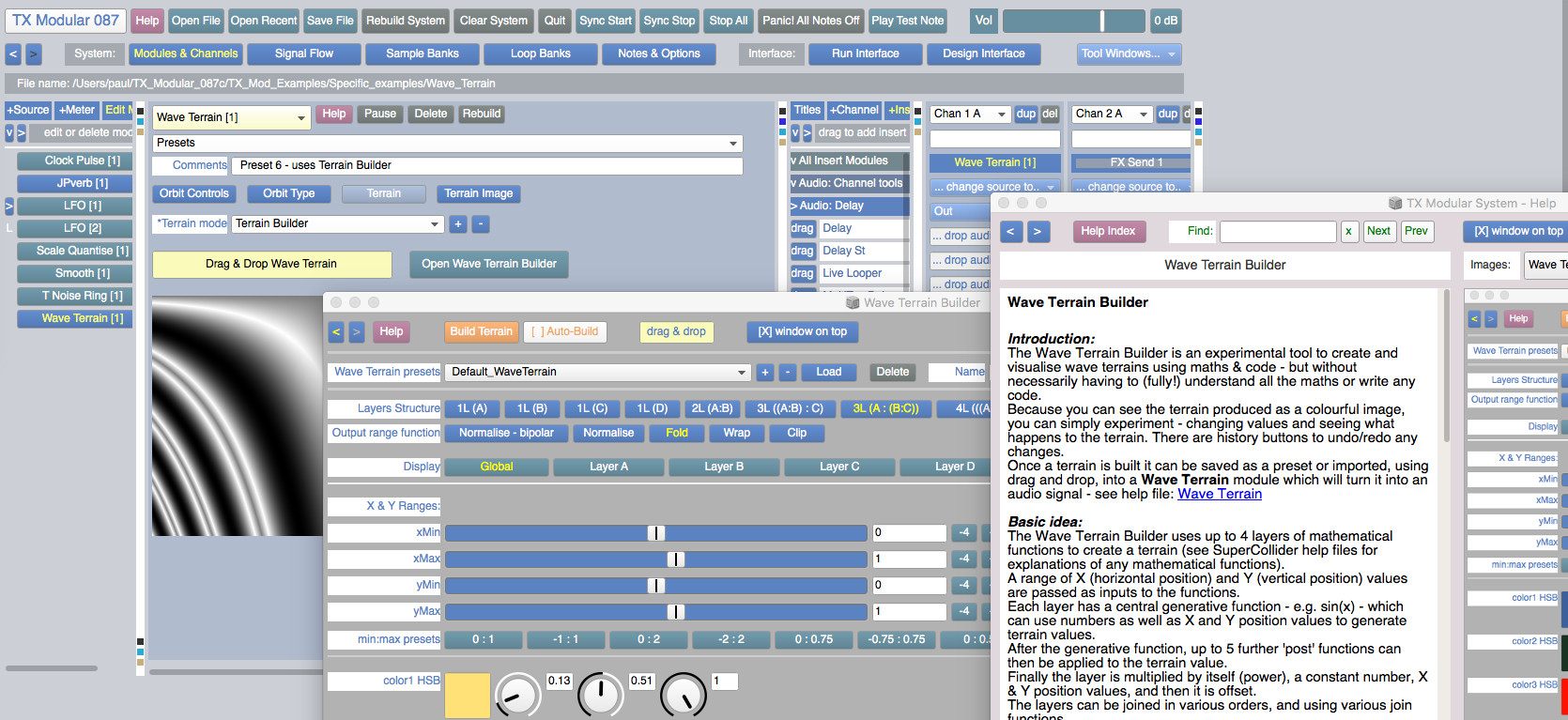

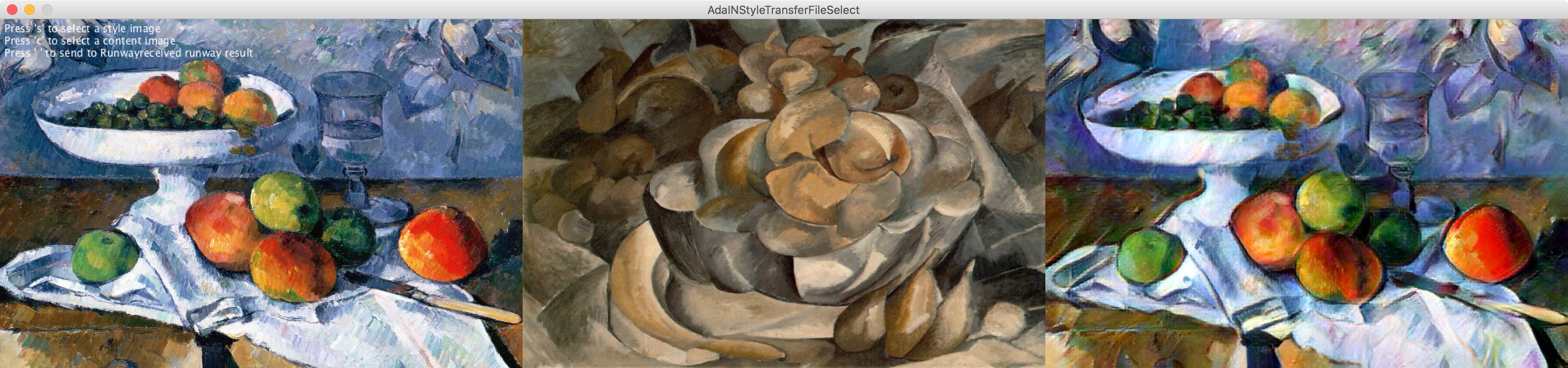

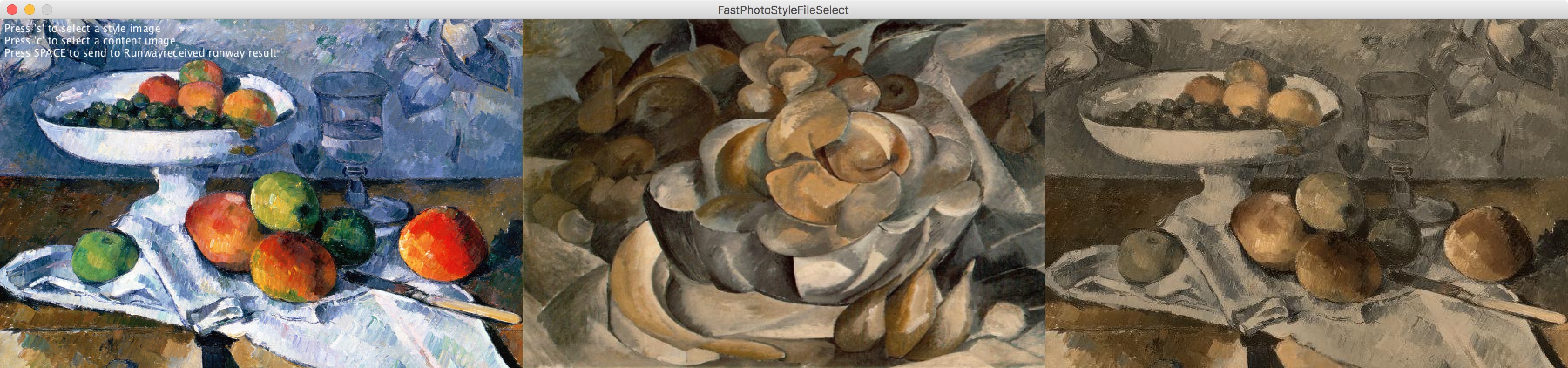

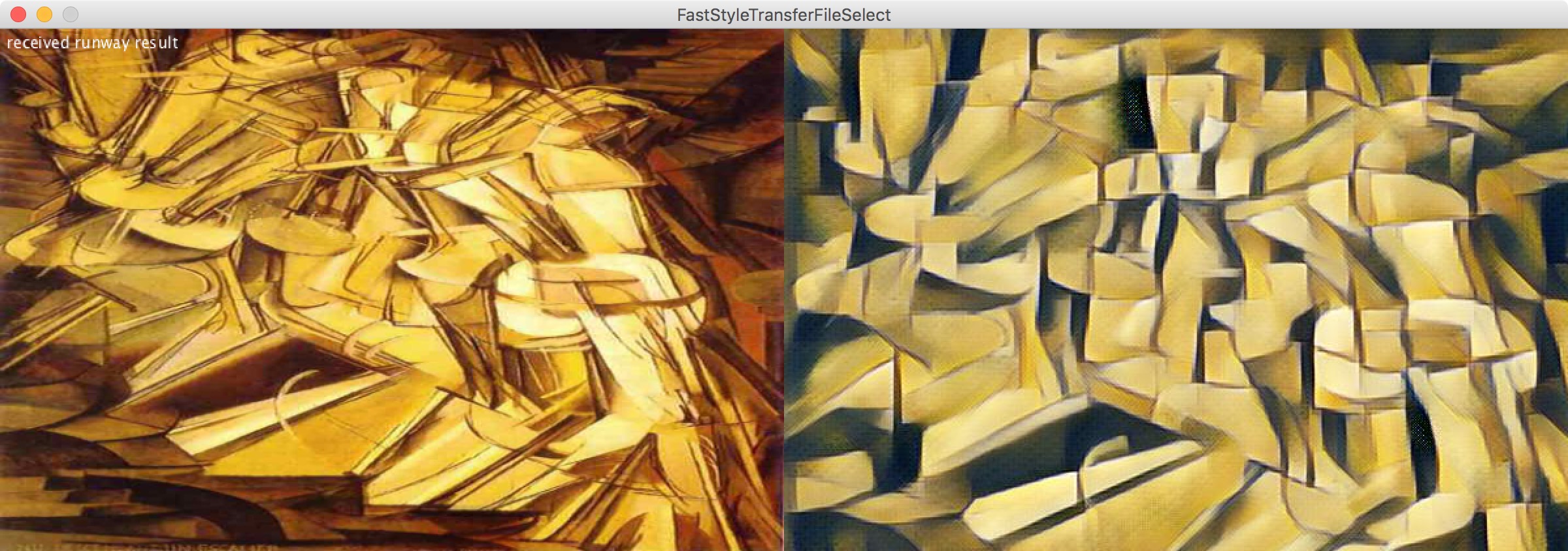

The TX Modular System is open source audio-visual software for modular synthesis and video generation, built with SuperCollider (https://supercollider.github.io) and openFrameworks (https://openFrameworks.cc).

It can be used to build interactive audio-visual systems such as: digital musical instruments, interactive generative compositions with real-time visuals, sound design tools, & live audio-visual processing tools.

This version has been tested on MacOS (10.11) and Windows (10). The audio engine should also work on Linux.

The visual engine, TXV, has only been built so far for MacOS and Windows - it is untested on Linux.

The current TXV MacOS build will only work with Mojave (10.14) or earlier (10.11, 10.12 & 10.13) - but NOT Catalina (10.15) or later.

You don't need to know how to program to use this system. But if you can program in SuperCollider, some modules allow you to edit the SuperCollider code inside - to generate or process audio, add modulation, create animations, or run SuperCollider Patterns.

This is a list of smaller tools that might be useful in building your game/website/interactive project. Although I’ve mostly also included ‘standards’, this list has a focus on artful tools & toys that are as fun to use as they are functional.

The goal of this list is to enable making entirely outside of closed production ecosystems or walled software gardens.

This is an interactive editor for making face filters with WebGL.

The language below is called GLSL; you can edit it to change the effect.

Libre and modular OSC / MIDI controller :

https://github.com/jean-emmanuel/open-stage-control/releases

Systems music is an idea that explores the following question: What if we could, instead of making music, design systems that generate music for us?

This idea has animated artists and composers for a long time and emerges in new forms whenever new technologies are adopted in music-making.

In the 1960s and 70s there was a particularly fruitful period. People like Steve Reich, Terry Riley, Pauline Oliveros, and Brian Eno designed systems that resulted in many landmark works of minimal and ambient music. They worked with the cutting edge technologies of the time: Magnetic tape recorders, loops, and delays.

Today our technological opportunities for making systems music are broader than ever. Thanks to computers and software, they're virtually endless. But to me, there is one platform that's particularly exciting from this perspective: Web Audio. Here we have a technology that combines audio synthesis and processing capabilities with a general purpose programming language: JavaScript. It is a platform that's available everywhere — or at least we're getting there. If we make a musical system for Web Audio, any computer or smartphone in the world can run it.

With Web Audio we can do something Reich, Riley, Oliveros, and Eno could not do all those decades ago: They could only share some of the output of their systems by recording them. We can share the system itself. Thanks to the unique power of the web platform, all we need to do is send a URL.

In this guide we'll explore some of the history of systems music and the possibilities of making musical systems with Web Audio and JavaScript. We'll pay homage to three seminal systems pieces by examining and attempting to recreate them: "It's Gonna Rain" by Steve Reich, "Discreet Music" by Brian Eno, and "Ambient 1: Music for Airports", also by Brian Eno.
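As a rough illustration of the phasing idea behind "It's Gonna Rain" (two copies of the same loop played at slightly different speeds), the arithmetic can be sketched in a few lines. The loop length and playback rates below are made-up values, and `phase_offset` and `realign_time` are hypothetical helper names, not part of the guide or of Web Audio.

```python
def phase_offset(elapsed, loop_length, rate_b, rate_a=1.0):
    """Offset (in seconds of loop material) of loop B ahead of loop A."""
    pos_a = (elapsed * rate_a) % loop_length
    pos_b = (elapsed * rate_b) % loop_length
    return (pos_b - pos_a) % loop_length


def realign_time(loop_length, rate_b, rate_a=1.0):
    """Seconds of playback until the two loops are back in unison."""
    return loop_length / abs(rate_b - rate_a)


# Example: a 2-second loop, with the second copy playing 0.2% faster.
print(phase_offset(500.0, 2.0, 1.002))  # roughly half a loop apart
print(realign_time(2.0, 1.002))         # roughly 1000 seconds for a full cycle
```

The smaller the difference between the two rates, the longer the loops take to drift apart and come back together.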

Table of Contents

"Is This for Me?"

How to Read This Guide

The Tools You'll Need

Steve Reich - It's Gonna Rain (1965)

Setting Up itsgonnarain.js

Loading A Sound File

Playing The Sound

Looping The Sound

How Phase Music Works

Setting up The Second Loop

Adding Stereo Panning

Putting It Together: Adding Phase Shifting

Exploring Variations on It's Gonna Rain

Brian Eno - Ambient 1: Music for Airports, 2/1 (1978)

The Notes and Intervals in Music for Airports

Setting up musicforairports.js

Obtaining Samples to Play

Building a Simple Sampler

A System of Loops

Playing Extended Loops

Adding Reverb

Putting It Together: Launching the Loops

Exploring Variations on Music for Airports

Brian Eno - Discreet Music (1975)

Setting up discreetmusic.js

Synthesizing the Sound Inputs

Setting up a Monophonic Synth with a Sawtooth Wave

Filtering the Wave

Tweaking the Amplitude Envelope

Bringing in a Second Oscillator

Emulating Tape Wow with Vibrato

Understanding Timing in Tone.js

Transport Time

Musical Timing

Sequencing the Synth Loops

Adding Echo

Adding Tape Delay with Frippertronics

Controlling Timbre with a Graphic Equalizer

Setting up the Equalizer Filters

Building the Equalizer Control UI

Going Forward

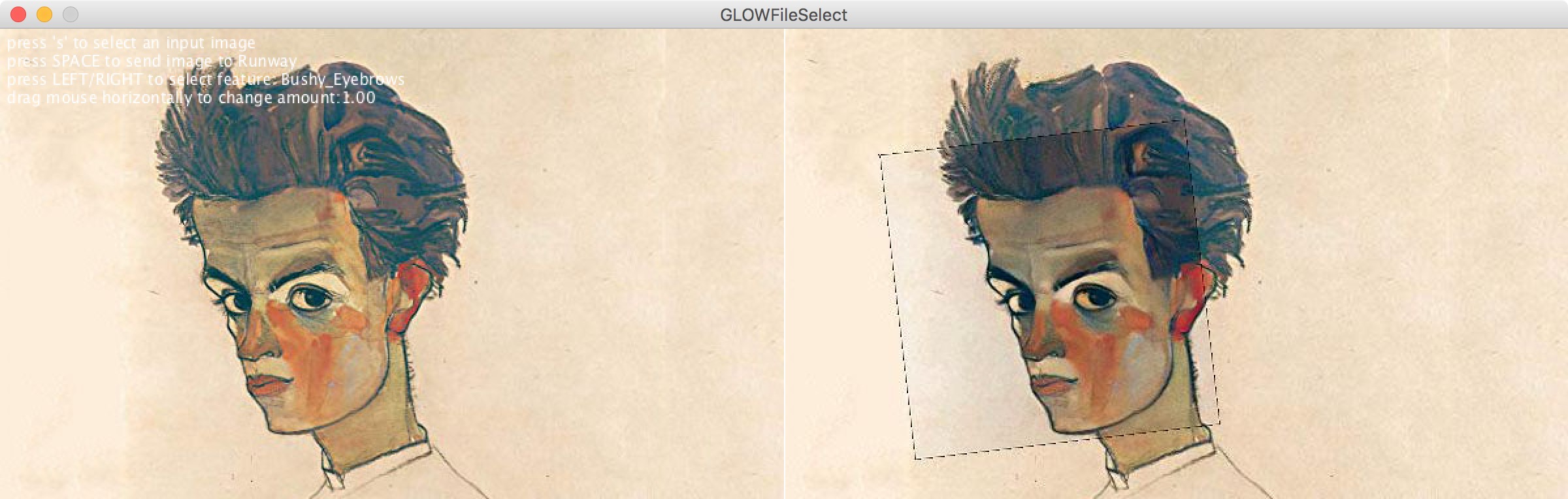

Utility library to easily connect to RunwayML from Processing


Contributed Libraries are developed, documented, and maintained by members of the Processing community. Further directions are included with each Library. For feedback and support, please post to the Discourse. We strongly encourage all Libraries to be open source, but not all of them are.

https://github.com/runwayml/processing-library

Installation

Download https://github.com/runwayml/processing-library/releases/download/latest/RunwayML.zip

Unzip into Documents > Processing > libraries

Restart Processing (if it was already running)

A light Rust API for Multiresolution Stochastic Texture Synthesis [1], a non-parametric example-based algorithm for image generation.


Pixel-art scaling algorithms are graphical filters that are often used in video game emulators to enhance hand-drawn 2D pixel art graphics. The re-scaling of pixel art is a specialist sub-field of image rescaling.

As pixel-art graphics are usually in very low resolutions, they rely on careful placing of individual pixels, often with a limited palette of colors. This results in graphics that rely on a high amount of stylized visual cues to define complex shapes with very little resolution, down to individual pixels. This makes image scaling of pixel art a particularly difficult problem.

A number of specialized algorithms[1] have been developed to handle pixel-art graphics, as the traditional scaling algorithms do not take such perceptual cues into account.

Since a typical application of this technology is improving the appearance of fourth-generation and earlier video games on arcade and console emulators, many are designed to run in real time for sufficiently small input images at 60 frames per second. This places constraints on the type of programming techniques that can be used for this sort of real-time processing. Many work only on specific scale factors: 2× is the most common, with 3×, 4×, 5× and 6× also present.
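To make the idea of a fixed 2× pixel-art scaler concrete, here is a minimal sketch of one well-known member of this family, the EPX/Scale2x rule set. It is only an illustration: the image is assumed to be a plain list of rows of comparable colour values, and clamping at the borders is a simplification chosen for this example.

```python
def scale2x(image):
    """Upscale a 2D grid of colour values by 2x using the EPX/Scale2x rules."""
    height, width = len(image), len(image[0])

    def pixel(y, x):
        # Clamp coordinates so border pixels reuse their nearest neighbour.
        return image[min(max(y, 0), height - 1)][min(max(x, 0), width - 1)]

    out = [[None] * (width * 2) for _ in range(height * 2)]
    for y in range(height):
        for x in range(width):
            p = image[y][x]
            a, b = pixel(y - 1, x), pixel(y, x + 1)  # above, right
            c, d = pixel(y, x - 1), pixel(y + 1, x)  # left, below
            # Each output corner copies a neighbour only when the two
            # neighbours touching that corner agree and the others differ.
            top_left = a if (c == a and c != d and a != b) else p
            top_right = b if (a == b and a != c and b != d) else p
            bottom_left = c if (d == c and d != b and c != a) else p
            bottom_right = d if (b == d and b != a and d != c) else p
            out[2 * y][2 * x] = top_left
            out[2 * y][2 * x + 1] = top_right
            out[2 * y + 1][2 * x] = bottom_left
            out[2 * y + 1][2 * x + 1] = bottom_right
    return out
```

Unlike nearest-neighbour scaling, the rule only compares colours for equality, so it never introduces new colours into the palette and it smooths diagonal edges instead of turning them into 2×2 staircases.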

Plugin for GIMP : https://github.com/bbbbbr/gimp-plugin-pixel-art-scalers

Waifu2x

https://en.wikipedia.org/wiki/Waifu2x

https://github.com/lltcggie/waifu2x-caffe/releases

https://github.com/imPRAGMA/W2XKit

https://old.reddit.com/r/WaifuUpscales/new/

https://github.com/BlueCocoa/waifu2x-ncnn-vulkan-macos/releases

https://old.reddit.com/r/Dandere2x/

https://old.reddit.com/r/waifu2x

https://github.com/AaronFeng753/Waifu2x-Extension

https://github.com/K4YT3X/video2x

https://old.reddit.com/r/AnimeResearch

Quote from a reddit comment :

A short list, ordered by output quality and setup time:

SRGAN, Super-resolution generative adversarial network : https://github.com/topics/srgan

Other implementations: https://github.com/tensorlayer/srgan

https://github.com/brade31919/SRGAN-tensorflow

https://github.com/titu1994/Super-Resolution-using-Generative-Adversarial-Networks

Neural Enhance: https://github.com/alexjc/neural-enhance/

Photoshop: The newest PS version (19.x, since October 2017 release) also has a new upscaling method, called "Preserve Details 2.0 Upscale" but compared to SRGAN the results clearly lack sharp and fine details. You have asked for an App and PS is easy to use and can be automated.

Overview of the most popular algorithms:

https://github.com/IvoryCandy/super-resolution

(VDSR, EDSR, DCRN, SubPixelCNN, SRCNN, FSRCNN, SRGAN)

Not in the list above:

LapSRN: https://github.com/phoenix104104/LapSRN

SelfExSR: https://github.com/jbhuang0604/SelfExSR

RAISR, developed by Google:

https://github.com/MKFMIKU/RAISR

https://github.com/movehand/raisr

Evoboxx is a synthesizer based on the cellular automaton Game of Life, created by mathematician John Horton Conway in 1970. The game is a zero-player game, meaning that its evolution is determined by its initial state, requiring no further input. One interacts with the Game of Life by creating an initial configuration and observing how it evolves, or, for advanced players, by creating patterns with particular properties.
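For readers who have not met the Game of Life, one generation of the standard rules (a live cell survives with two or three live neighbours, a dead cell becomes alive with exactly three) can be sketched as below. This shows only the automaton itself; how Evoboxx maps cells to sound is not described here, and `step` is a name chosen for this example.

```python
from collections import Counter


def step(live_cells):
    """Advance a set of (x, y) live cells by one generation."""
    # Count, for every position, how many live neighbours it has.
    neighbour_counts = Counter(
        (x + dx, y + dy)
        for x, y in live_cells
        for dx in (-1, 0, 1)
        for dy in (-1, 0, 1)
        if (dx, dy) != (0, 0)
    )
    return {
        cell
        for cell, count in neighbour_counts.items()
        if count == 3 or (count == 2 and cell in live_cells)
    }


# The initial configuration is the only input; everything else follows from it.
blinker = {(0, 1), (1, 1), (2, 1)}
print(sorted(step(blinker)))
```

Storing only the live cells as a set keeps the grid unbounded, which suits patterns that travel.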

Mosaic is an open source multi-platform (osx, linux, windows) live coding and visual programming application, based on openFrameworks.

This project deals with the idea of integrating and amplifying human-machine communication, offering a real-time, flowchart-based visual interface for high-level creative coding. Just as live-coding scripting languages offer a high-level coding environment, ofxVisualProgramming and the Mosaic Project, as its parent layer container, aim at a high-level visual-programming environment with multiple embedded scripting languages available (Lua, GLSL, Python and BASH (macOS & Linux)).

As this project is based on openFrameworks, one of the goals is to offer as many objects as possible, using the pre-defined OF classes for trans-media manipulation (audio, text, image, video, electronics, computer vision), plus the gigantic ofxaddons ecosystem currently available (machine learning, protocols, web, hardware interface, and a lot more).

While the described characteristics could potentially lead to extremely complex results (the OF and ofxaddons ecosystem is really huge, and the availability of multiple scripting languages could confuse inexperienced users), the interface design aims to avoid this "high complexity" situation. It embodies a direct and natural drag & drop connect/disconnect interface (mouse/trackpad) at the most basic level of interaction, adds text editing (keyboard) at an intermediate level (script editing), and reserves the most advanced level of interaction for experienced users (communication with external devices, automated interaction, etc.).

Beats is a command-line drum machine. Feed it a song notated in YAML, and it will produce a precision-milled Wave file of impeccable timing and feel.

http://beatsdrummachine.com/tutorial/

http://tropone.de/2019/02/21/ungewoehnliche-wege-rhythmen-zu-programmieren-teil-2-beats-cl/
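The general idea of turning a textual rhythm into a Wave file can be sketched with the Python standard library. This is not Beats' YAML format or its code: the `X`/`.` pattern string, the synthetic click sound, and the function names are all assumptions made for this illustration.

```python
import io
import math
import struct
import wave

SAMPLE_RATE = 44100


def click(duration=0.05, frequency=220.0):
    """A short decaying sine burst standing in for a drum sample."""
    n = int(SAMPLE_RATE * duration)
    return [
        math.sin(2 * math.pi * frequency * i / SAMPLE_RATE) * (1 - i / n)
        for i in range(n)
    ]


def render(pattern, bpm=120, steps_per_beat=4):
    """Render a pattern like 'X...X...' to float samples in [-1, 1]."""
    step_len = int(SAMPLE_RATE * 60 / bpm / steps_per_beat)
    samples = [0.0] * (step_len * len(pattern))
    hit = click()
    for step, symbol in enumerate(pattern):
        if symbol == "X":
            start = step * step_len
            # Mix the hit into the timeline, truncating at the end.
            for i, value in enumerate(hit[: len(samples) - start]):
                samples[start + i] += value
    return samples


def to_wav_bytes(samples):
    """Encode samples as 16-bit mono WAV data."""
    buffer = io.BytesIO()
    with wave.open(buffer, "wb") as wav:
        wav.setnchannels(1)
        wav.setsampwidth(2)
        wav.setframerate(SAMPLE_RATE)
        clipped = (max(-1.0, min(1.0, s)) for s in samples)
        wav.writeframes(
            b"".join(struct.pack("<h", int(s * 32767)) for s in clipped)
        )
    return buffer.getvalue()
```

Writing `to_wav_bytes(render("X...X...X...X..."))` to a file in binary mode should give a one-bar click track; placing every hit at an exact sample offset is what keeps the timing tight.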

Each letter of the alphabet is an operation, lowercase letters operate on bang, uppercase letters operate each frame. Orca is designed to control other applications, create procedural sequencers, and to experiment with livecoding. See the documentation and installation instructions here, or have a look at a tutorial video.

– A add: Outputs the sum of inputs.

– B bool: Bangs if input is not empty, or 0.

– C clock: Outputs a constant value based on the runtime frame.

– D delay: Bangs on a fraction of the runtime frame.

– E east: Moves eastward, or bangs.

– F if: Bangs if both inputs are equal.

– G generator: Writes distant operators with offset.

– H halt: Stops southward operators from operating.

– I increment: Increments southward operator.

– J jumper: Outputs the northward operator.

– K konkat: Outputs multiple variables.

– L loop: Loops a number of eastward operators.

– M modulo: Outputs the modulo of input.

– N north: Moves Northward, or bangs.

– O offset: Reads a distant operator with offset.

– P push: Writes an eastward operator with offset.

– Q query: Reads distant operators with offset.

– R random: Outputs a random value.

– S south: Moves southward, or bangs.

– T track: Reads an eastward operator with offset.

– U uturn: Reverses movement of inputs.

– V variable: Reads and write globally available variables.

– W west: Moves westward, or bangs.

– X teleport: Writes a distant operator with offset.

– Y jymper: Outputs the westward operator.

– Z zoom: Moves eastwardly, respawns west on collision.

– * bang: Bangs neighboring operators.

– # comment: Comments a line, or characters until the next hash.

– : midi: Sends a MIDI note.

– ^ cc: Sends a MIDI CC value.

– ; udp: Sends a UDP message.

– = osc: Sends a OSC message.

– enter bang selected operator.

– shift+enter toggle insert/write.

– space toggle play/pause.

– > increase BPM.

– < decrease BPM.

– shift+arrowKey Expand cursor.

– ctrl+arrowKey Leap cursor.

– alt+arrowKey Move selection.

– ctrl+c copy selection.

– ctrl+x cut selection.

– ctrl+v paste selection.

– ctrl+z undo.

– ctrl+shift+z redo.

– ] increase grid size vertically.

– [ decrease grid size vertically.

– } increase grid size horizontally.

– { decrease grid size horizontally.

– ctrl/meta+] increase program size vertically.

– ctrl/meta+[ decrease program size vertically.

– ctrl/meta+} increase program size horizontally.

– ctrl/meta+{ decrease program size horizontally.

– ctrl+= Zoom In.

– ctrl+- Zoom Out.

– ctrl+0 Zoom Reset.

– tab Toggle interface.

– backquote Toggle background.

Download the app here : https://hundredrabbits.itch.io/orca

Source code : https://github.com/hundredrabbits/Orca

Video tutorial : https://www.youtube.com/watch?v=RaI_TuISSJE

To test MIDI on macOS : http://notahat.com/simplesynth

Activate the virtual MIDI input on macOS : https://help.ableton.com/hc/en-us/articles/209774225-Using-virtual-MIDI-buses

Pilot (another way to create music with orca from the same creators) :

Download the app here : https://hundredrabbits.itch.io/pilot

Source code : https://github.com/hundredrabbits/Pilot

A good explanation of the software in German : http://tropone.de/2019/03/13/orca-ein-sequenzer-der-kryptischer-nicht-aussehen-kann-und-ein-versuch-einer-anleitung/

Vuo is a kit for making a million different projects — apps, videos, prototypes, plugins, exhibits, live performance effects, and more. Even if you don't have programming experience, Vuo lets you build your own stuff for Mac.

Vuo is the Finnish word for flow, and that's what Vuo is about — supporting your creative flow. When you're creating, you want to focus on your ideas. You don't want to be distracted or frustrated trying to figure out how your tools work. Vuo helps you stay in the groove by making it easy to find the building blocks you want, put them together, and tweak your creation until it's just the way you want it.

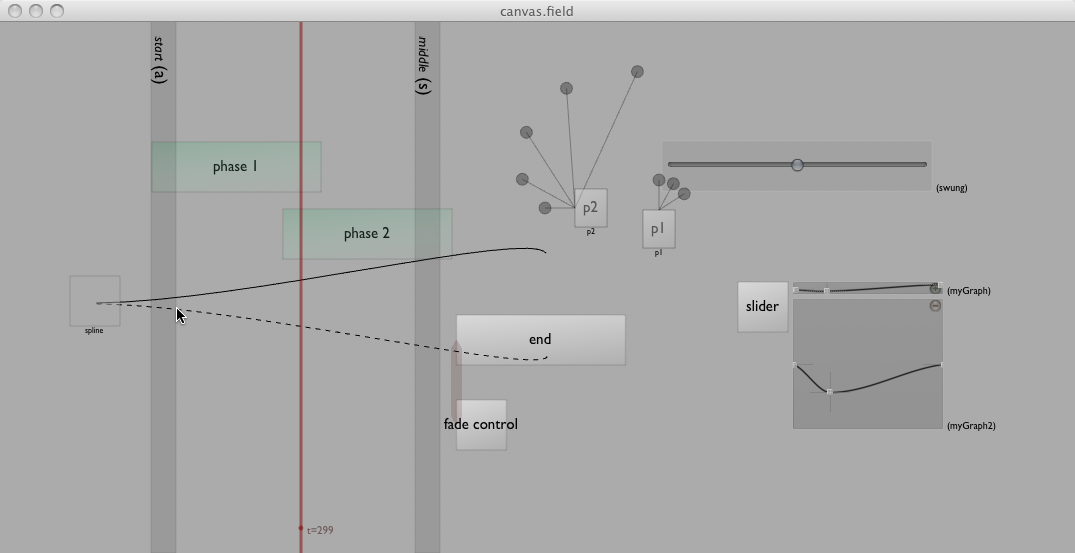

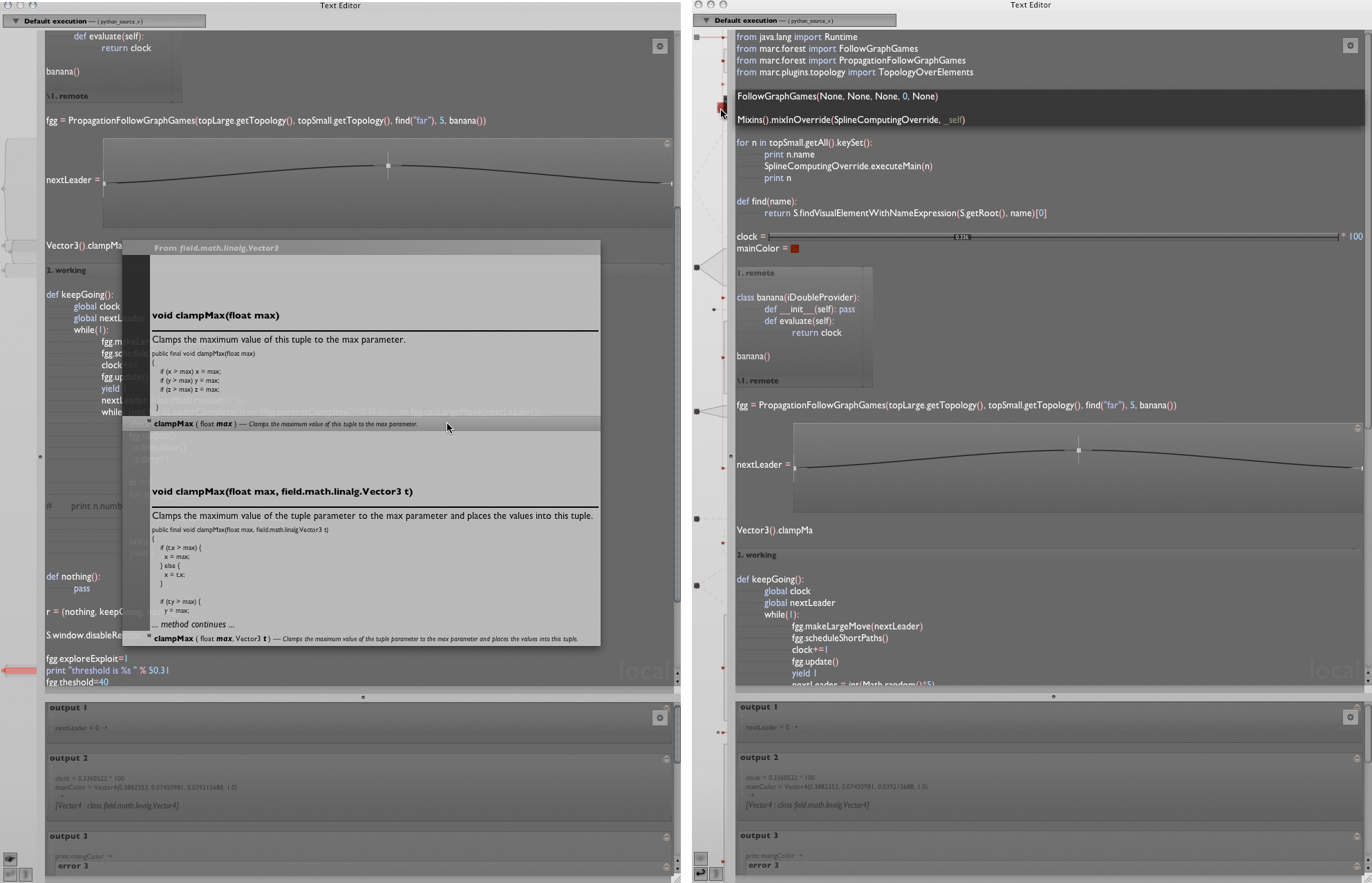

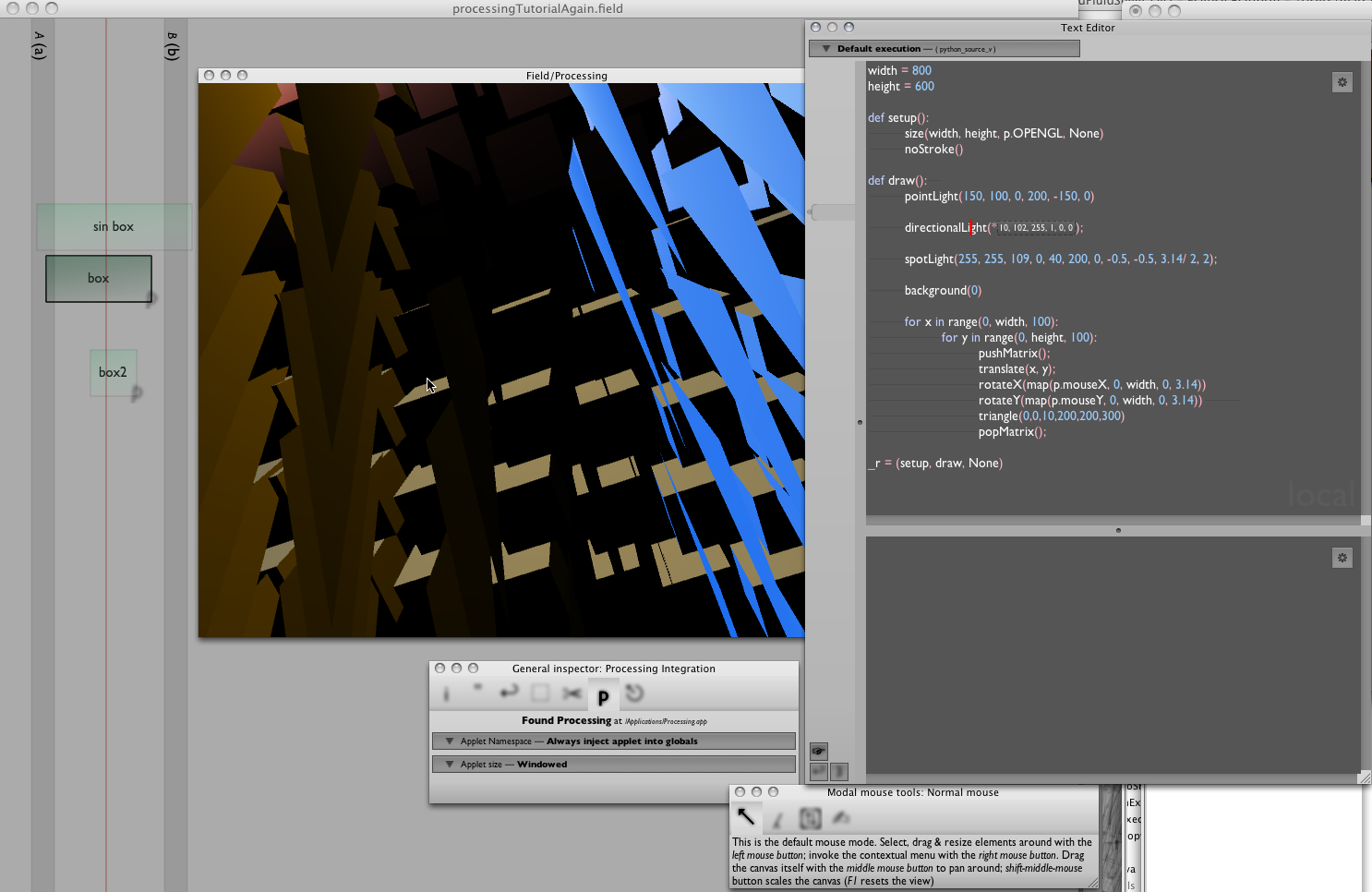

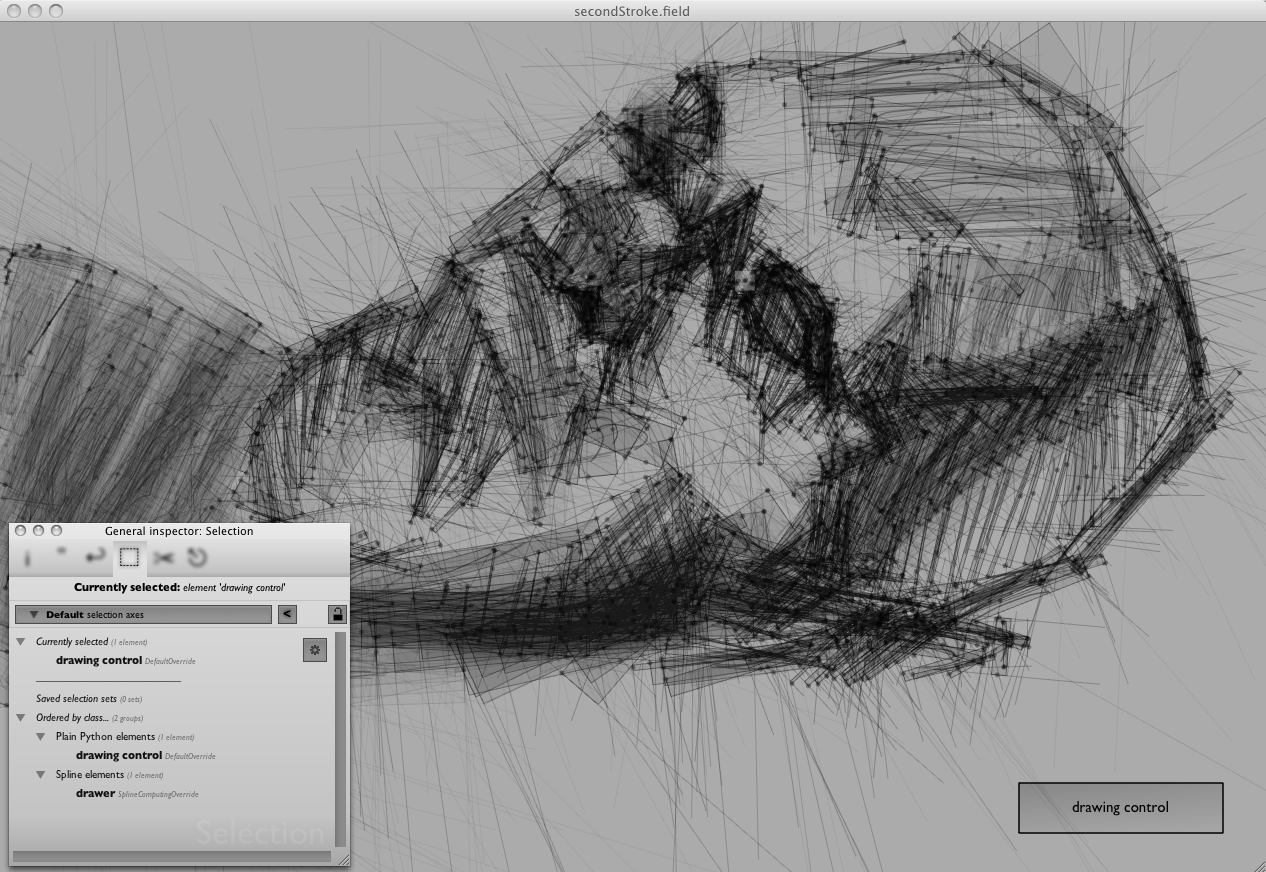

Field is a development environment for experimental code and digital art in the broadest of possible senses. While there are a great many development environments and digital art tools out there today, this one has been constructed with two key principles in mind:

Embrace and extend — rather than make a personal, private and pristine code utopia, Field tries to bridge to as many libraries, programming languages, and ways of doing things as possible. The world doesn't necessarily need another programming language or serial port library, nor do we have to pick and choose between data-flow systems, graphical user interfaces or purely textual programming — we can have it all in the right environment and we can both leverage the work of others and take control of our own tools and methods.

Live code makes anything possible — Field tries to replace as many "features" with editable code as it can. Its programming language of choice is Python — a world class, highly respected and incredibly flexible language. As such, Field is intensely customizable, with the glue between interface objects and data modifiable inside Field itself. Field takes seriously the idea that its user — you — are a programmer / artist doing serious work and that you should be able to reconfigure your tools to suit your domain and style as closely as possible.

Sustainability practitioners have long relied on images to display relationships in complex adaptive systems on various scales and across different domains. These images facilitate communication, learning, collaboration and evaluation as they contribute to shared understanding of systemic processes. This research addresses the need for images that are widely understood across different fields and sectors for researchers, policy makers, design practitioners and evaluators with varying degrees of familiarity with the complexity sciences. The research identifies, defines and illustrates 16 key features of complex systems and contributes to an evolving visual language of complexity. Ultimately the work supports learning as a basis for informed decision-making at CECAN (Centre for the Evaluation of Complexity Across the Nexus) and other communities engaged with the analysis of complex problems.

We call them "seeds". Each seed is a machine learning example you can start playing with. Explore, learn and grow them into whatever you like.

This channel was created for anyone who is curious about audio programming, digital signal processing (DSP) and creative coding, from the very basic concepts with no previous programming knowledge all the way up to building your own software instruments and applications in C++ with frameworks like JUCE and openFrameworks.

MoviePy is a Python module for video editing, which can be used for basic operations (like cuts, concatenations, title insertions), video compositing (a.k.a. non-linear editing), video processing, or to create advanced effects. It can read and write the most common video formats, including GIF.

Created by Satoshi HORII at Rhizomatiks, (centiscript) is a JavaScript-based creative code environment for creating experimental graphics. Imagined as an endless exploration from one script to another, Satoshi sees (centiscript) as a tool for visual thinking. Each experiment can be shared online since it relies on JavaScript + HTML + Canvas.

This is the official on-line repository for the code from the Graphics Gems series of books (from Academic Press). This series focusses on short to medium length pieces of code which perform a wide variety of computer graphics related tasks. All code here can be used without restrictions. The code distributions here contain all known bug fixes and enhancements.

An extensive book introducing C++ and openFrameworks

A free and open-source intermedia sequencer

Enables precise and flexible scripting of interactive scenarios. Control and score any OSC-compliant software or hardware : Max/MSP, Pure Data, openFrameworks, Processing...

An open source collection of 20+ computational design tools for Clojure & Clojurescript by Karsten Schmidt.

In active development since 2012, and totalling almost 39,000 lines of code, the libraries address concepts related to many disciplines, from animation, generative design, data analysis / validation / visualization with SVG and WebGL, interactive installations, 2d / 3d geometry, digital fabrication, voxel modeling, rendering, linked data graphs & querying, encryption, OpenCL computing etc.

Many of the thi.ng projects (especially the larger ones) are written in a literate programming style and include extensive documentation, diagrams and tests, directly in the source code on GitHub. Each library can be used individually. All projects are licensed under the Apache Software License 2.0.

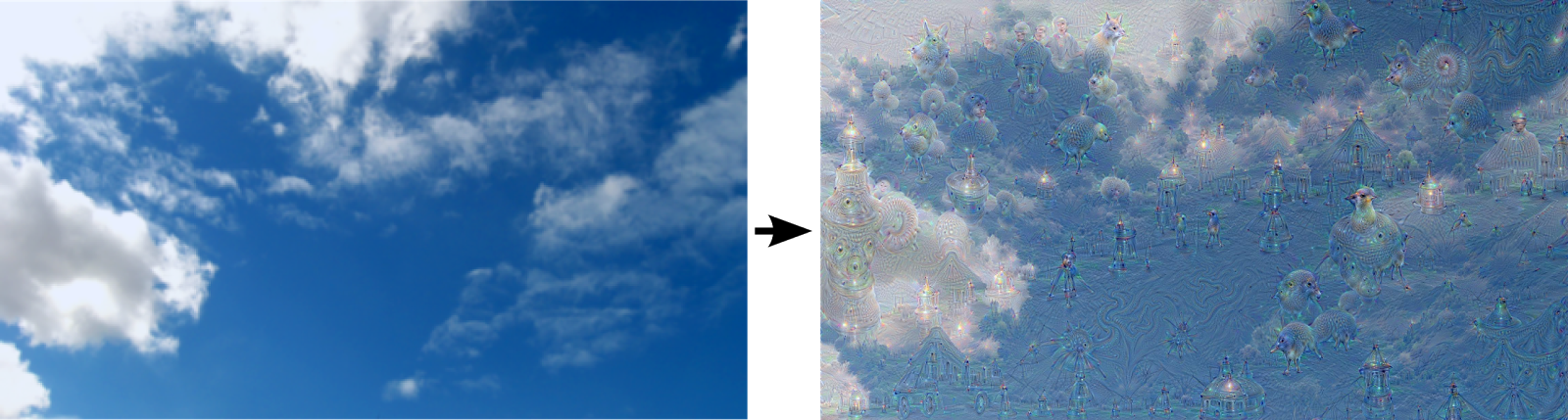

Artificial Neural Networks have spurred remarkable recent progress in image classification and speech recognition. But even though these are very useful tools based on well-known mathematical methods, we actually understand surprisingly little of why certain models work and others don’t. So let’s take a look at some simple techniques for peeking inside these networks.

We train an artificial neural network by showing it millions of training examples and gradually adjusting the network parameters until it gives the classifications we want. The network typically consists of 10-30 stacked layers of artificial neurons. Each image is fed into the input layer, which then talks to the next layer, until eventually the “output” layer is reached. The network’s “answer” comes from this final output layer.

One of the challenges of neural networks is understanding what exactly goes on at each layer. We know that after training, each layer progressively extracts higher and higher-level features of the image, until the final layer essentially makes a decision on what the image shows. For example, the first layer maybe looks for edges or corners. Intermediate layers interpret the basic features to look for overall shapes or components, like a door or a leaf. The final few layers assemble those into complete interpretations—these neurons activate in response to very complex things such as entire buildings or trees.

One way to visualize what goes on is to turn the network upside down and ask it to enhance an input image in such a way as to elicit a particular interpretation. Say you want to know what sort of image would result in “Banana.” Start with an image full of random noise, then gradually tweak the image towards what the neural net considers a banana (see related work in [1], [2], [3], [4]). By itself, that doesn’t work very well, but it does if we impose a prior constraint that the image should have similar statistics to natural images, such as neighboring pixels needing to be correlated.
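The procedure described above (start from noise, repeatedly nudge the input towards a higher class score, and add a prior that keeps neighbouring pixels correlated) can be sketched with a toy stand-in. Everything here is an assumption made for illustration: the "network" is just a fixed linear scorer over a one-dimensional image, the prior is a squared-difference penalty between neighbours, and the step size and weights are arbitrary.

```python
import random


def smoothness_gradient(image):
    """Gradient of sum((x[i+1] - x[i])**2), penalising uncorrelated neighbours."""
    grad = [0.0] * len(image)
    for i in range(len(image) - 1):
        diff = image[i + 1] - image[i]
        grad[i] -= 2 * diff
        grad[i + 1] += 2 * diff
    return grad


def score(weights, image):
    """Class score of the toy linear scorer."""
    return sum(w * x for w, x in zip(weights, image))


def maximise_class(weights, steps=200, rate=0.05, prior=0.5, seed=0):
    """Gradient ascent on the input of the toy scorer, starting from noise."""
    rng = random.Random(seed)
    image = [rng.random() for _ in weights]
    for _ in range(steps):
        penalty = smoothness_gradient(image)
        for i, w in enumerate(weights):
            # For a linear scorer, d(score)/d(pixel) is simply the weight.
            image[i] += rate * (w - prior * penalty[i])
            image[i] = min(1.0, max(0.0, image[i]))  # keep pixels in range
    return image
```

In a real network the gradient with respect to the input would come from backpropagation rather than from a fixed weight vector, but the loop has the same shape: the network's parameters stay frozen and only the image changes.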

Build beautiful interactive books using GitHub/Git and Markdown.

https://gist.github.com/nickloewen/10565777

This is a plain-text version of Bret Victor’s reading list.