Clip retrieval works by converting the text query to a CLIP embedding, then using that embedding to query a kNN index of CLIP image embeddings.

https://github.com/rom1504/clip-retrieval
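The query step described above can be sketched with plain Python. This is only an illustration of nearest-neighbour search by cosine similarity, not the clip-retrieval API: the three-dimensional vectors, the file names, and the helper names `normalize` and `knn` are made up for the example, and a real setup would use actual CLIP embeddings and an approximate kNN index.

```python
import math

def normalize(v):
    """Scale a vector to unit length so a dot product equals cosine similarity."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]

def knn(query, index, k=2):
    """Return the names of the k entries most similar to the query (brute force)."""
    q = normalize(query)
    scored = []
    for name, emb in index.items():
        e = normalize(emb)
        scored.append((sum(a * b for a, b in zip(q, e)), name))
    scored.sort(reverse=True)  # highest cosine similarity first
    return [name for _, name in scored[:k]]

# Toy stand-ins for CLIP image embeddings (hypothetical values).
image_index = {
    "cat.jpg": [0.9, 0.1, 0.0],
    "dog.jpg": [0.7, 0.6, 0.1],
    "car.jpg": [0.0, 0.2, 0.9],
}

# Toy stand-in for the CLIP embedding of a text query (hypothetical values).
text_embedding = [1.0, 0.2, 0.0]

print(knn(text_embedding, image_index, k=2))
```

Because text and image embeddings live in the same space, the same similarity function works for both; only the encoder that produced the query vector differs.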

“Although our study doesn’t present ways to mitigate negative hunger-induced emotions, research suggests that being able to label an emotion can help people to regulate it, such as by recognising that we feel angry simply because we are hungry. Therefore, greater awareness of being ‘hangry’ could reduce the likelihood that hunger results in negative emotions and behaviours in individuals.”

A book of 1000 paintings and illustrations of robots created by artificial intelligence. The author generated all of the images in this book by writing original prompts for DALL·E 2, OpenAI’s AI system that can create realistic images and art from a description in natural language. Upon generating the images, the author curated and arranged the images to their own liking and takes ultimate responsibility for the content of this publication.

https://openai.com/dall-e-2/

https://github.com/CompVis/latent-diffusion

https://huggingface.co/spaces/multimodalart/latentdiffusion

https://mirror.xyz/0x0f6712c6ac4f02f47cA8b5cf200B224aE6fD8B69/AYLAsdtM090nHWpvWQ13exkaJoNyllkhxa9ffEUPOrg

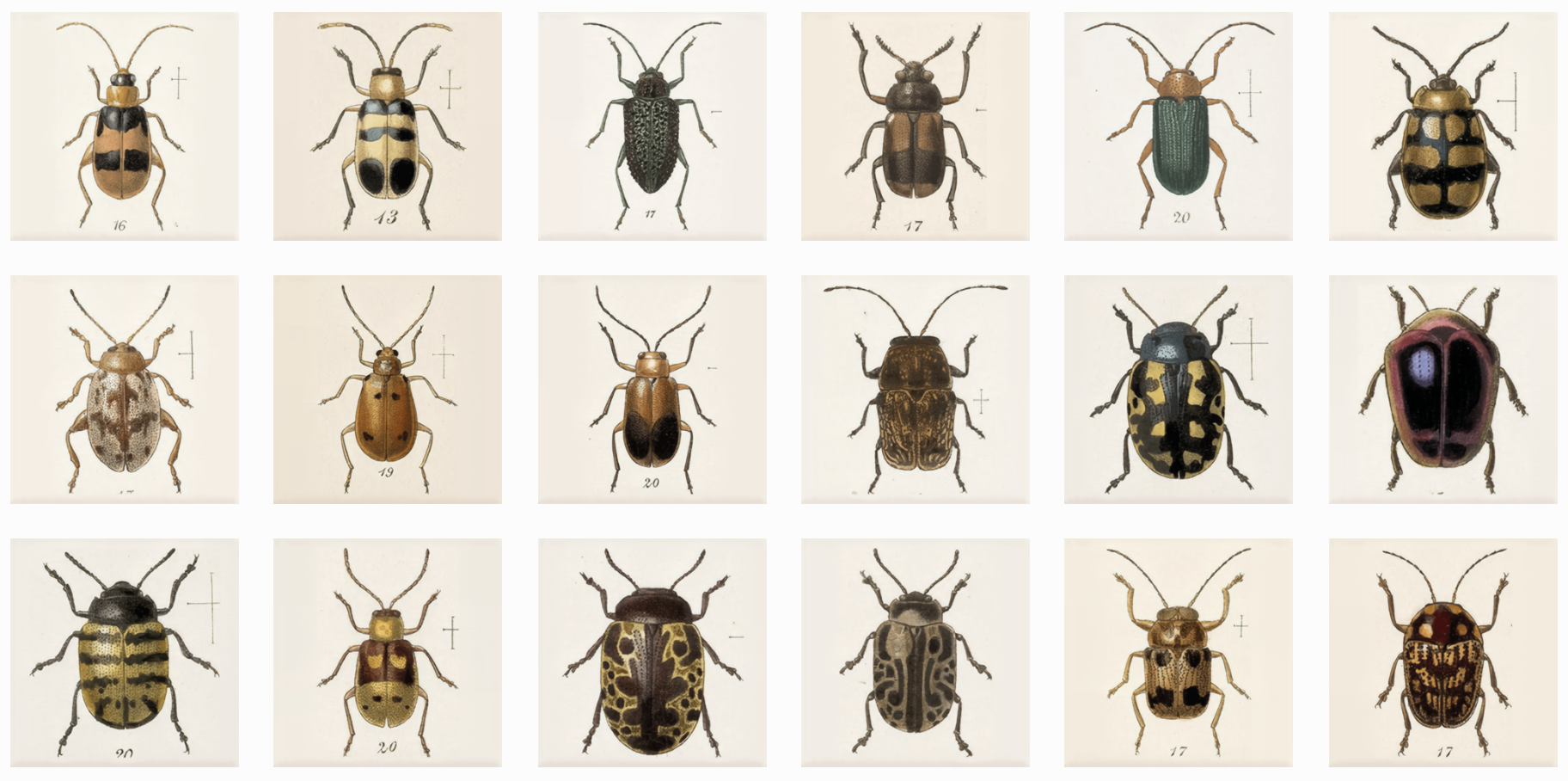

Neural Cellular Automata (NCA; we use NCA to refer to both Neural Cellular Automata and Neural Cellular Automaton) are capable of learning a diverse set of behaviours: from generating stable, regenerating, static images, to segmenting images, to learning to “self-classify” shapes. The inductive bias imposed by using cellular automata is powerful. A system of individual agents running the same learned local rule can solve surprisingly complex tasks. Moreover, individual agents, or cells, can learn to coordinate their behavior even when separated by large distances. By construction, they solve these tasks in a massively parallel and inherently degenerate way (degenerate in this case refers to the biological concept of degeneracy). Each cell must be able to take on the role of any other cell - as a result they tend to generalize well to unseen situations.

In this work, we apply NCA to the task of texture synthesis. This task involves reproducing the general appearance of a texture template, as opposed to making pixel-perfect copies. We are going to focus on texture losses that allow for a degree of ambiguity. After training NCA models to reproduce textures, we subsequently investigate their learned behaviors and observe a few surprising effects. Starting from these investigations, we make the case that the cells learn distributed, local, algorithms.

To do this, we apply an old trick: we employ neural cellular automata as a differentiable image parameterization.
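The idea that every cell runs the same local rule can be sketched as one cellular automaton step. This is a rough illustration, not the article's model: the hand-written `average_rule` stands in for the learned neural update, each cell holds a single number instead of a state vector, and the wrap-around grid edges are an assumption made for simplicity.

```python
def step(grid, rule):
    """Apply the same local rule to every cell, using its 3x3 neighbourhood."""
    h, w = len(grid), len(grid[0])
    new = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            # Collect the cell and its eight neighbours (edges wrap around).
            neighbourhood = [
                grid[(y + dy) % h][(x + dx) % w]
                for dy in (-1, 0, 1)
                for dx in (-1, 0, 1)
            ]
            new[y][x] = rule(neighbourhood)
    return new

def average_rule(neighbourhood):
    """Placeholder rule: an NCA would evaluate a small learned network here."""
    return sum(neighbourhood) / len(neighbourhood)

# A 5x5 grid with a single active cell in the middle.
grid = [[0.0] * 5 for _ in range(5)]
grid[2][2] = 9.0

after = step(grid, average_rule)
for row in after:
    print(row)
```

All cells are updated from the previous grid at once, which is what makes the computation parallel: no cell has a special role, and the rule only ever sees local information.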

The papers summarized here are mainly from 2017 onwards.

For work before 2016, please refer to the survey paper “Image Aesthetic Assessment: An Experimental Survey”.

A showcase with creative machine learning experiments

This database* is an ongoing project to aggregate tools and resources for artists, engineers, curators & researchers interested in incorporating machine learning (ML) and other forms of artificial intelligence (AI) into their practice. Resources in the database come from our partners and network; tools cover a broad spectrum of possibilities presented by the current advances in ML like enabling users to generate images from their own data, create interactive artworks, draft texts or recognise objects. Most of the tools require some coding skills, however, we’ve noted ones that don’t. Beginners are encouraged to turn to RunwayML or entries tagged as courses.

*This database isn’t comprehensive—it's a growing collection of research commissioned & collected by the Creative AI Lab. The latest tools were selected by Luba Elliott. Check back for new entries.

Via : https://docs.google.com/document/d/1TkusCE5mS4tuTYoBwaTV4aJKdSVsf9jFKsGJCx8M05c/edit

https://github.com/msieg/deep-music-visualizer

https://www.instagram.com/deep_music_visualizer/

https://www.youtube.com/watch?v=L7R-yBZ5QYc

Want to make a deep music video? Wrap your mind around BigGAN. Developed at Google by Brock et al. (2018)¹, BigGAN is a recent chapter in a brief history of generative adversarial networks (GANs). GANs are AI models trained by two competing neural networks: a generator creates new images based on statistical patterns learned from a set of example images, and a discriminator tries to classify the images as real or fake. By training the generator to fool the discriminator, GANs learn to create realistic images.
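The competition between generator and discriminator can be made concrete with the classic GAN losses. This is a sketch under assumptions: it uses the standard log-loss formulation, which is not necessarily the objective BigGAN is trained with, and `d_real` and `d_fake` are hypothetical discriminator outputs (the estimated probability that an image is real) rather than outputs of an actual network.

```python
import math

def discriminator_loss(d_real, d_fake):
    """Low when real images score near 1 and generated images score near 0."""
    return -(math.log(d_real) + math.log(1.0 - d_fake))

def generator_loss(d_fake):
    """Low when the discriminator scores generated images near 1 (it is fooled)."""
    return -math.log(d_fake)

# A discriminator that separates real from fake well has a low loss...
print(discriminator_loss(d_real=0.9, d_fake=0.1))
# ...while the generator's loss is then high, pushing it to improve.
print(generator_loss(d_fake=0.1))
# Once the generator fools the discriminator, its loss drops.
print(generator_loss(d_fake=0.9))
```

Training alternates between lowering each loss in turn, so an improvement on one side raises the pressure on the other.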

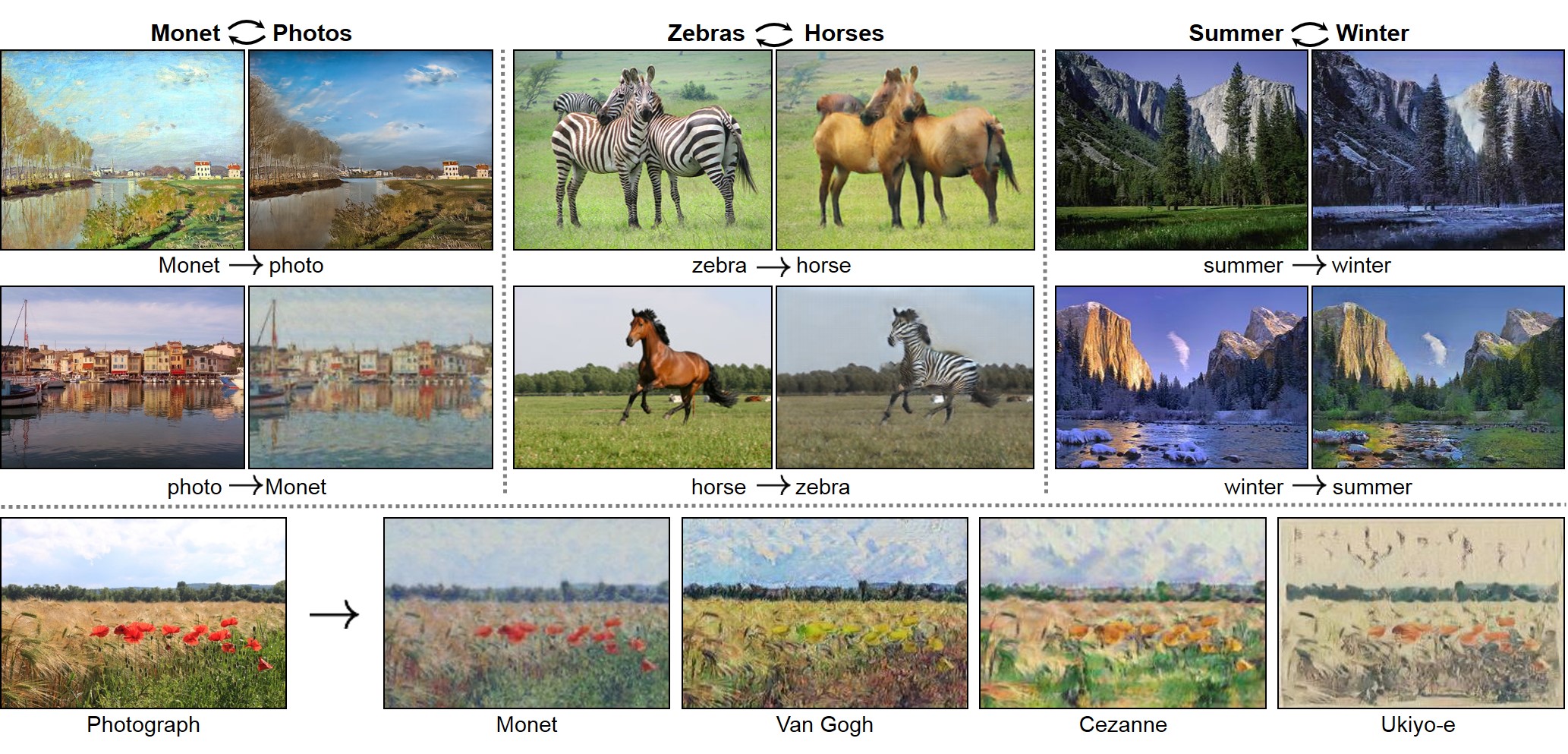

ComboGAN: Unrestrained Scalability for Image Domain Translation. Asha Anoosheh, Eirikur Agustsson, Radu Timofte, Luc Van Gool. arXiv, 2017.

https://arxiv.org/pdf/1712.06909.pdf

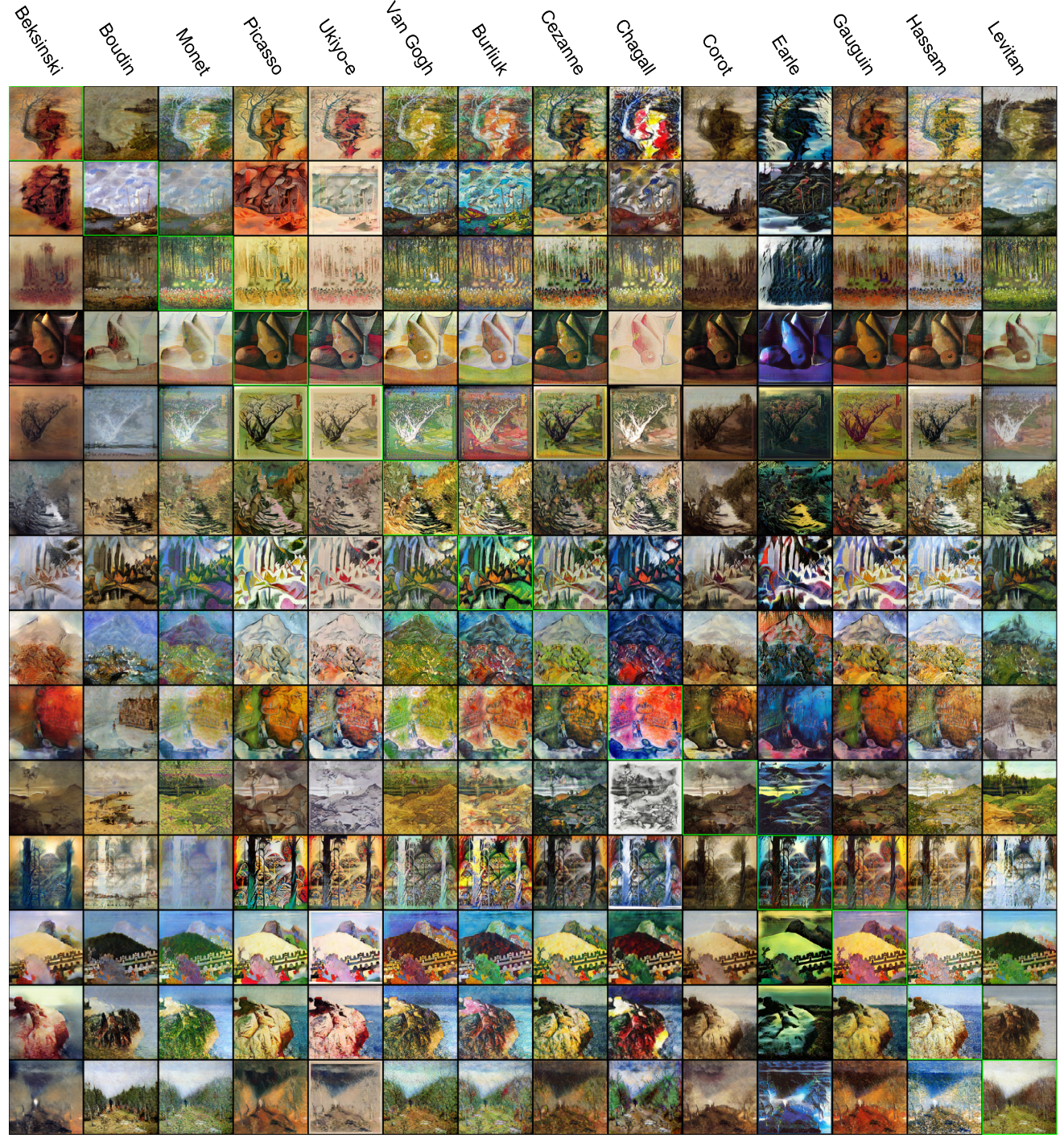

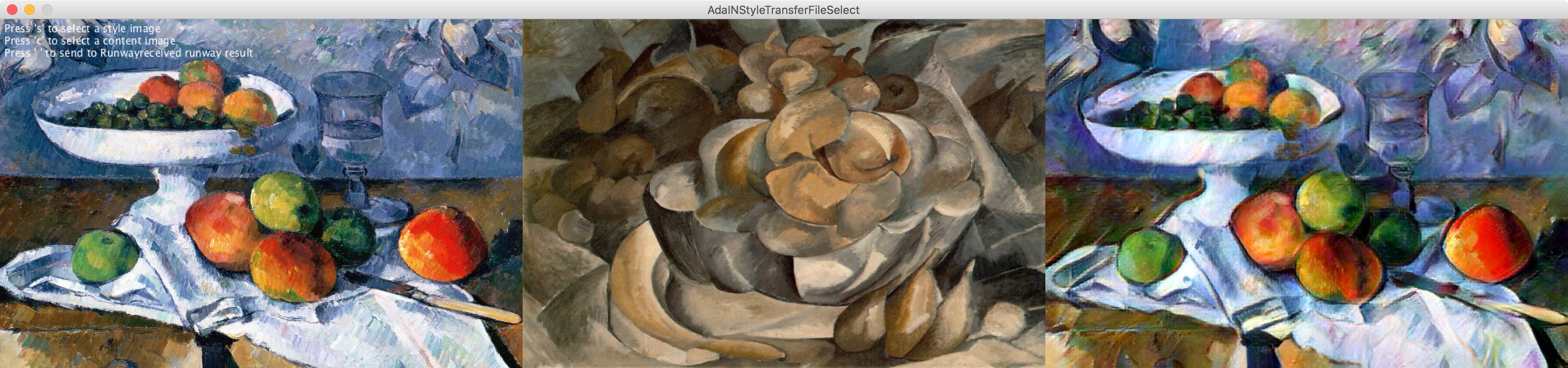

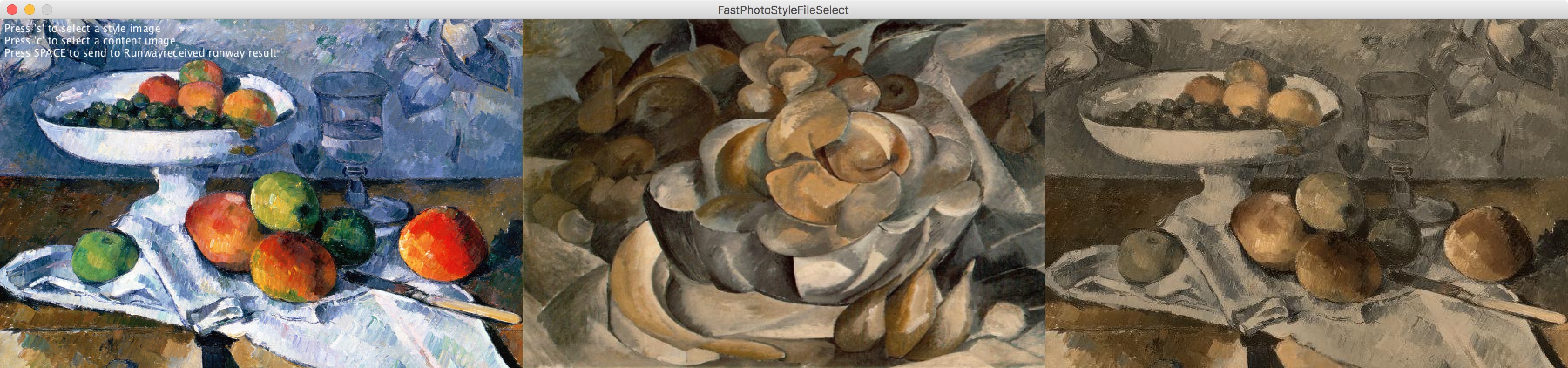

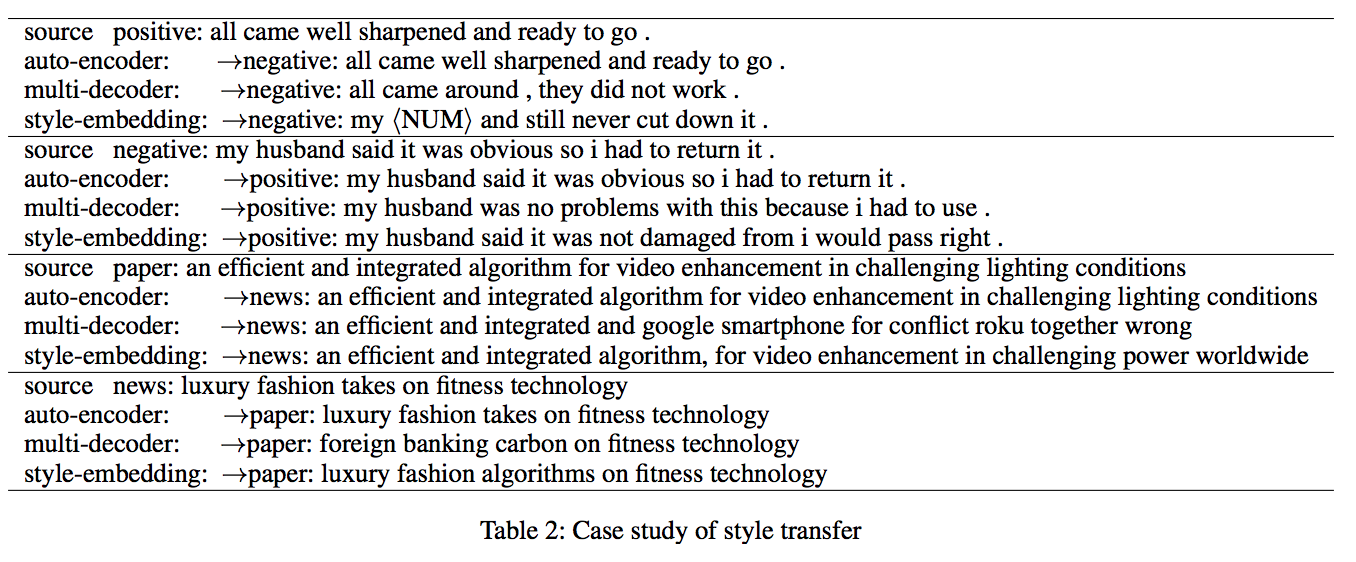

This year alone has seen unprecedented leaps in the area of learning-based image translation, namely CycleGAN, by Zhu et al. But experiments so far have been tailored to merely two domains at a time, and scaling them to more would require a quadratic number of models to be trained. And with two-domain models taking days to train on current hardware, the number of domains quickly becomes limited by the time and resources required to process them. In this paper, we propose a multi-component image translation model and training scheme which scales linearly - both in resource consumption and time required - with the number of domains. We demonstrate its capabilities on a dataset of paintings by 14 different artists and on images of the four different seasons in the Alps. Note that 14 data groups would need (14 choose 2) = 91 different CycleGAN models: a total of 182 generator/discriminator pairs; whereas our model requires only 14 generator/discriminator pairs.
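The counts quoted in the abstract can be checked with a few lines of arithmetic. The function names are made up for this note; the only assumptions taken from the abstract are that each pairwise CycleGAN model contains two generator/discriminator pairs and that ComboGAN needs one pair per domain.

```python
from math import comb

def cyclegan_counts(n_domains):
    """Pairwise approach: one model per unordered pair of domains."""
    models = comb(n_domains, 2)
    pairs = 2 * models  # two generator/discriminator pairs per model
    return models, pairs

def combogan_pairs(n_domains):
    """Linear approach: one generator/discriminator pair per domain."""
    return n_domains

models, pairs = cyclegan_counts(14)
print(models, pairs)        # 91 182
print(combogan_pairs(14))   # 14
```

The pairwise count grows as n(n-1)/2, so doubling the number of domains roughly quadruples the training work, while the per-domain count only doubles.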

UNIT: UNsupervised Image-to-image Translation Networks : https://github.com/mingyuliutw/UNIT

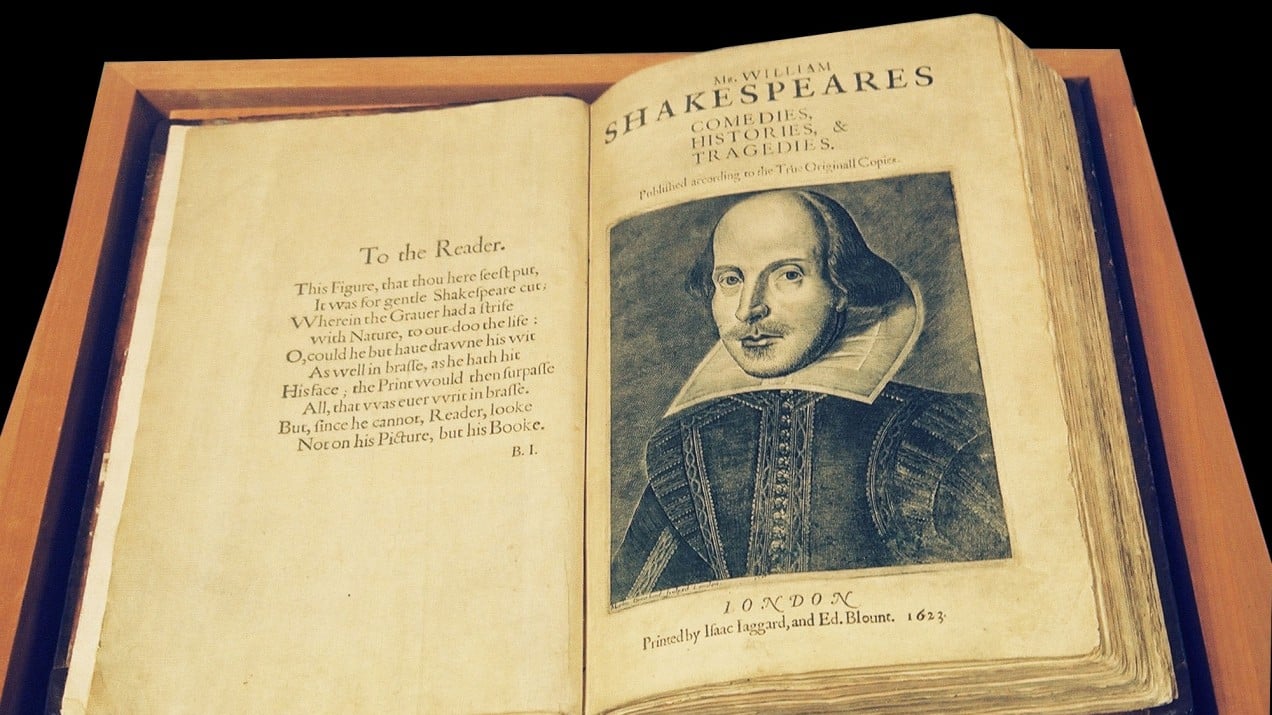

Literary analysts have long noticed the hand of another author in Shakespeare’s Henry VIII. Now a neural network has identified the specific scenes in question—and who actually wrote them.

For much of his life, William Shakespeare was the house playwright for an acting company called the King’s Men that performed his plays on the banks of the River Thames in London. When Shakespeare died in 1616, the company needed a replacement and turned to one of the most prolific and famous playwrights of the time, a man named John Fletcher.

Utility library to easily connect to RunwayML from Processing


Contributed Libraries are developed, documented, and maintained by members of the Processing community. Further directions are included with each Library. For feedback and support, please post to the Discourse. We strongly encourage all Libraries to be open source, but not all of them are.

https://github.com/runwayml/processing-library

Installation

Download https://github.com/runwayml/processing-library/releases/download/latest/RunwayML.zip

Unzip into Documents > Processing > libraries

Restart Processing (if it was already running)

Create beautiful, wild and weird images with GAN.

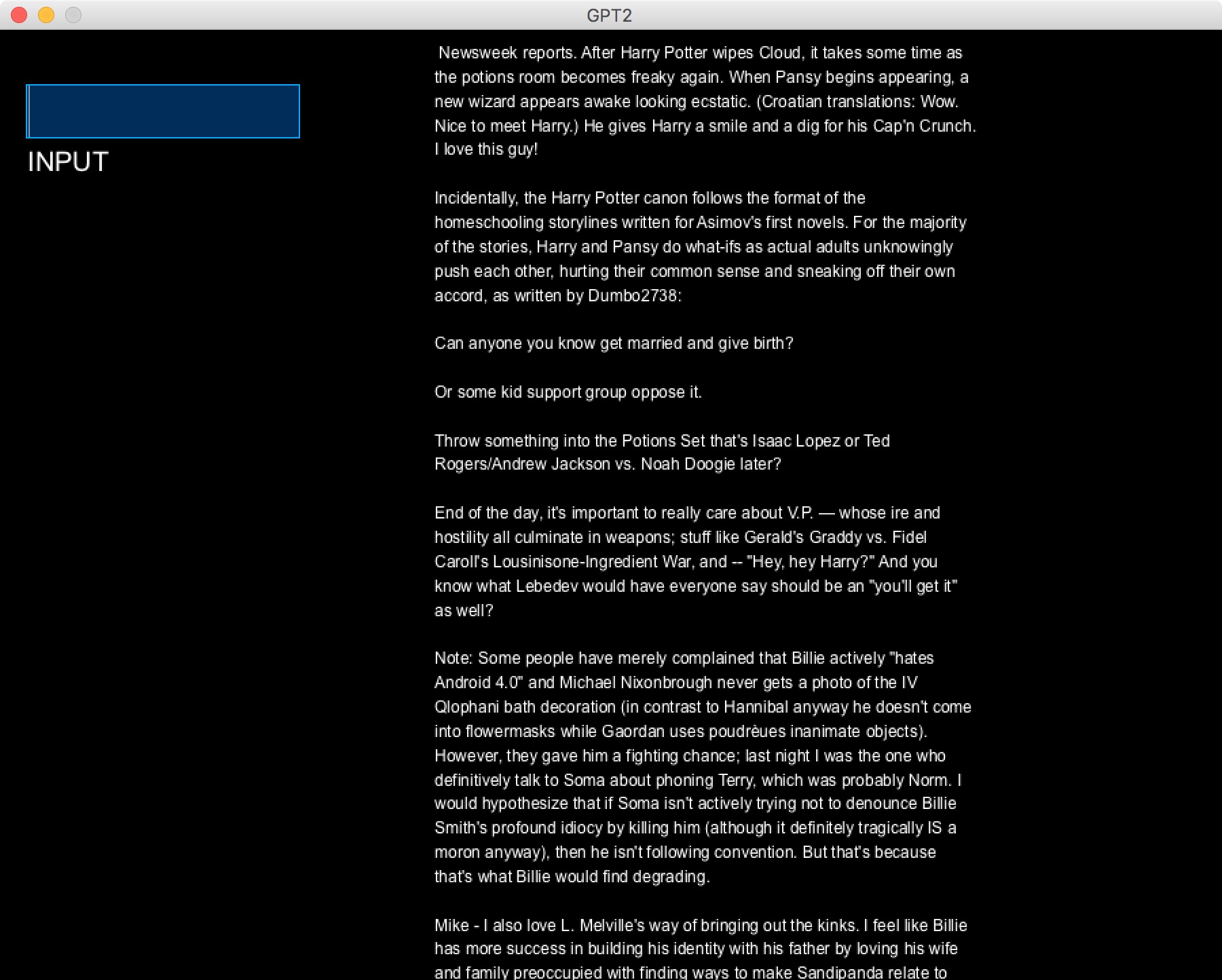

Demonstration tutorial of retraining OpenAI’s GPT-2-small (a text-generating Transformer neural network) on a large public domain Project Gutenberg poetry corpus to generate high-quality English verse.

https://jalammar.github.io/illustrated-gpt2/

Other tutorial : https://medium.com/@ngwaifoong92/beginners-guide-to-retrain-gpt-2-117m-to-generate-custom-text-content-8bb5363d8b7f

https://github.com/minimaxir/gpt-2-simple

Example : http://textsynth.org/

Datasets :

https://www.kaggle.com/datasets

https://github.com/awesomedata/awesome-public-datasets

Scrape web pages with Python :

https://www.crummy.com/software/BeautifulSoup/

https://github.com/EugenHotaj/beatles/blob/master/scraper.py
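As a rough idea of what a scraper does once a page has been downloaded, here is a sketch that pulls link targets out of an HTML string. It deliberately uses the standard library's `html.parser` instead of BeautifulSoup so that it runs without installing anything; the class name `LinkCollector`, the helper `extract_links`, and the sample HTML are made up for this example.

```python
from html.parser import HTMLParser

class LinkCollector(HTMLParser):
    """Collect the href attribute of every <a> tag encountered."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.links.append(value)

def extract_links(html):
    """Parse an HTML string and return its link targets in document order."""
    parser = LinkCollector()
    parser.feed(html)
    return parser.links

# Hypothetical page content; a real scraper would download this first.
sample = '<p><a href="https://example.com/a">A</a> <a href="/b">B</a></p>'
print(extract_links(sample))
```

BeautifulSoup offers a friendlier interface for the same job and copes better with malformed markup, which is why it is the usual choice for larger scraping tasks.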

https://github.com/shawwn/colab-tricks

Built by Adam King (@AdamDanielKing) as an easier way to play with OpenAI's new machine learning model. In February, OpenAI unveiled a language model called GPT-2 that generates coherent paragraphs of text one word at a time.

Declassifier

Custom Software, COCO Dataset (corrected). 2 days 5 hours 25 min. 2019

Declassifier processes pictures using the YOLO computer vision algorithm. Instead of showing the program's prediction, the picture is overlaid with images from COCO, the training dataset from which the algorithm learned in the first place.

The data by which machine learning algorithms learn to make predictions is hardly ever shown, let alone credited. By doing both, Declassifier exposes the myth of magically intelligent machines, instead applauding the photographers who made the technical achievement possible. In fact, when showing the actual training pictures, credit is not only due but mandatory.

AI research that touches on dialogue and story generation. As before, I’m picking a few points of interest, summarizing highlights, and then linking through to the detailed research.

This one is about a couple of areas of natural language processing and generation, as well as sentiment understanding, relevant to how we might realize stories and dialogue with particular surface features and characteristics.

https://ganbreeder.app/i?k=1f98015a7ce950101ec1c5ee

Ganbreeder is a collaborative art tool for discovering images. Images are 'bred' by having children, mixing with other images and being shared via their URL. This is an experiment in using breeding + sharing as methods of exploring high complexity spaces. GANs are simply the engine enabling this. Ganbreeder is very similar to, and named after, Picbreeder. It is also inspired by an earlier project of mine, Facebook Graffiti, which demonstrated the creative capacity of crowds. Ganbreeder uses these BigGAN models and the source code is available.

We call them "seeds". Each seed is a machine learning example you can start playing with. Explore, learn and grow them into whatever you like.

It's all a game of construction — some with a brush, some with a shovel, some choose a pen.

Jackson Pollock

…and some, including myself, choose neural networks. I’m an artist, and I've also been building commercial software for a long while. But art and software used to be two parallel tracks in my life; save for the occasional foray into generative art with Processing and computational photography, all my art was analog… until I discovered GANs (Generative Adversarial Networks).

Since the invention of GANs in 2014, the machine learning community has produced a number of deep, technical pieces about the technique (such as this one). This is not one of those pieces. Instead, I want to share in broad strokes some reasons why GANs are excellent artistic tools and the methods I have developed for creating my GAN-augmented art.

https://github.com/junyanz/pytorch-CycleGAN-and-pix2pix

https://people.eecs.berkeley.edu/~taesung_park/CycleGAN/datasets/

https://github.com/eriklindernoren/PyTorch-GAN

https://heartbeat.fritz.ai/introduction-to-generative-adversarial-networks-gans-35ef44f21193

https://github.com/nightrome/really-awesome-gan

https://github.com/zhangqianhui/AdversarialNetsPapers

https://github.com/io99/Resources

https://github.com/yunjey/pytorch-tutorial

https://github.com/bharathgs/Awesome-pytorch-list

https://old.reddit.com/r/MachineLearning

http://www.codingwoman.com/generative-adversarial-networks-entertaining-intro/

https://medium.com/@jonathan_hui/gan-gan-series-2d279f906e7b

https://www.youtube.com/channel/UC9OeZkIwhzfv-_Cb7fCikLQ/videos

https://www.youtube.com/watch?list=PLZHQObOWTQDNU6R1_67000Dx_ZCJB-3pi&v=aircAruvnKk

https://www.youtube.com/watch?list=PLxt59R_fWVzT9bDxA76AHm3ig0Gg9S3So&v=ZzWaow1Rvho