ComboGAN: Unrestrained Scalability for Image Domain Translation Asha Anoosheh, Eirikur Augustsson, Radu Timofte, Luc van Gool In Arxiv, 2017.

https://arxiv.org/pdf/1712.06909.pdf

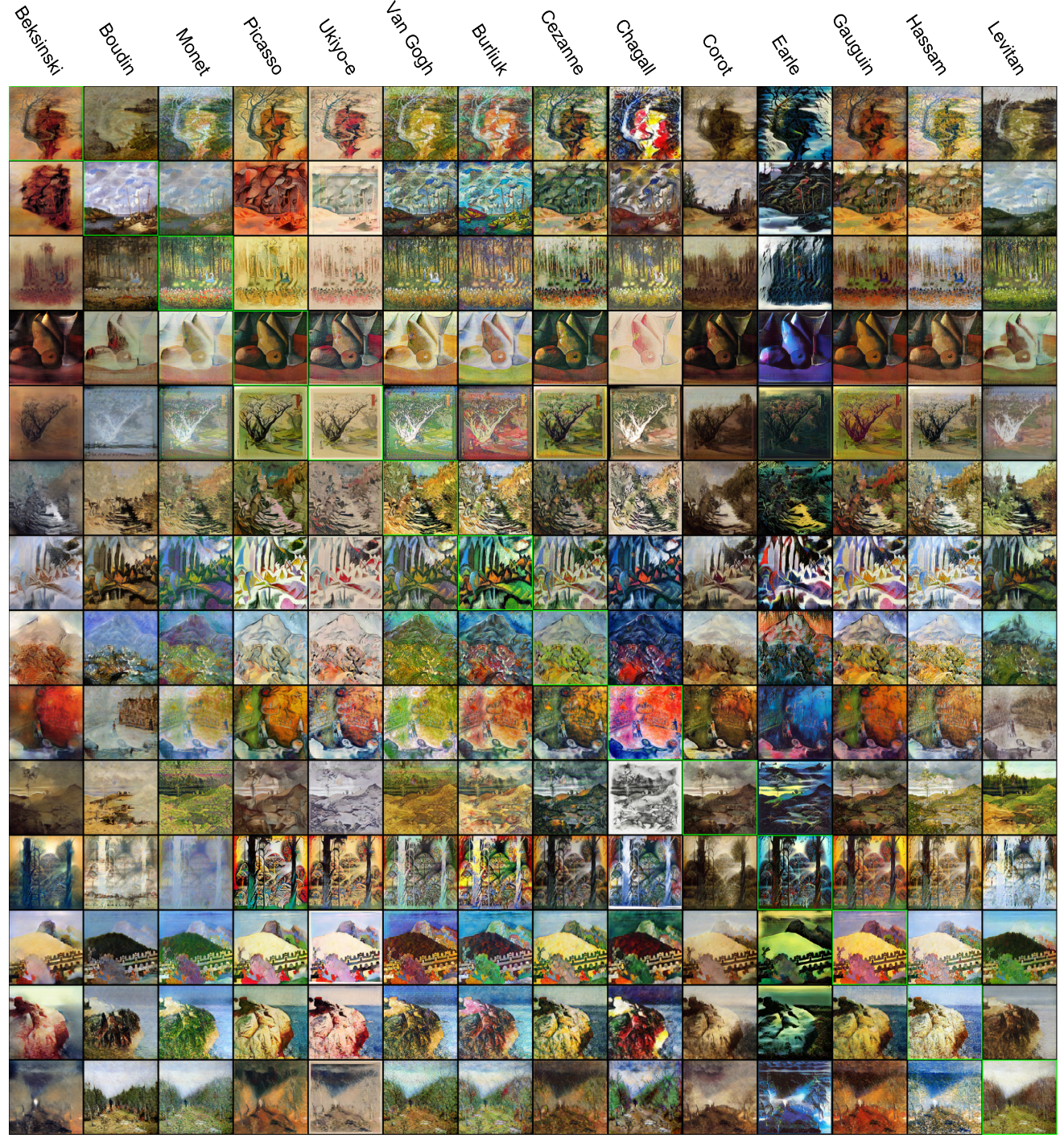

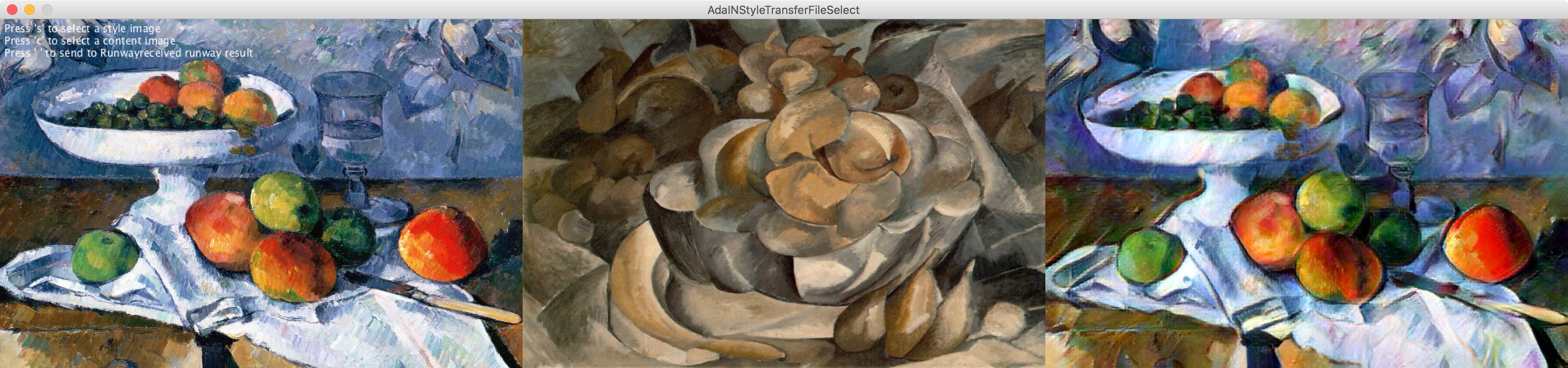

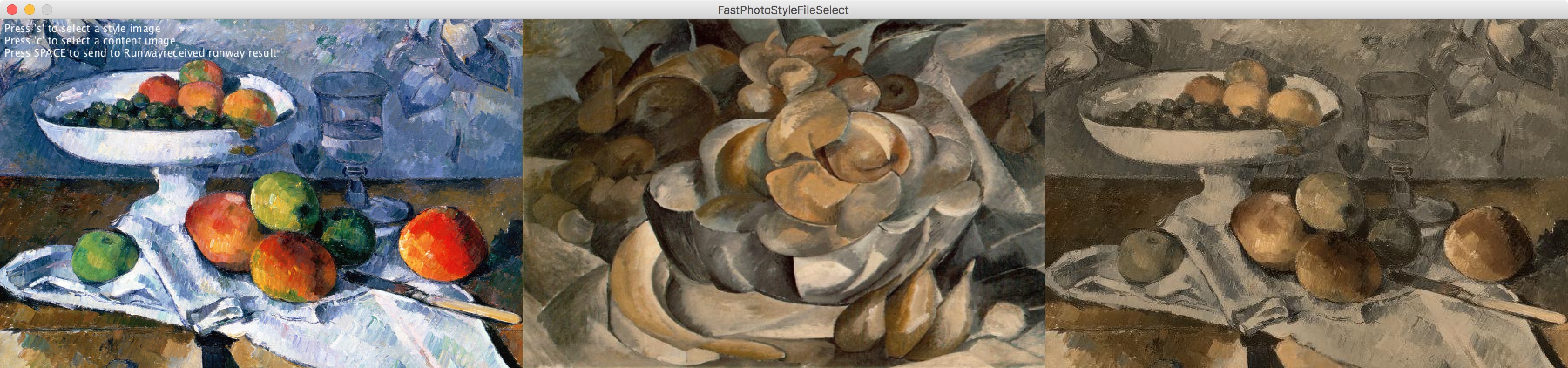

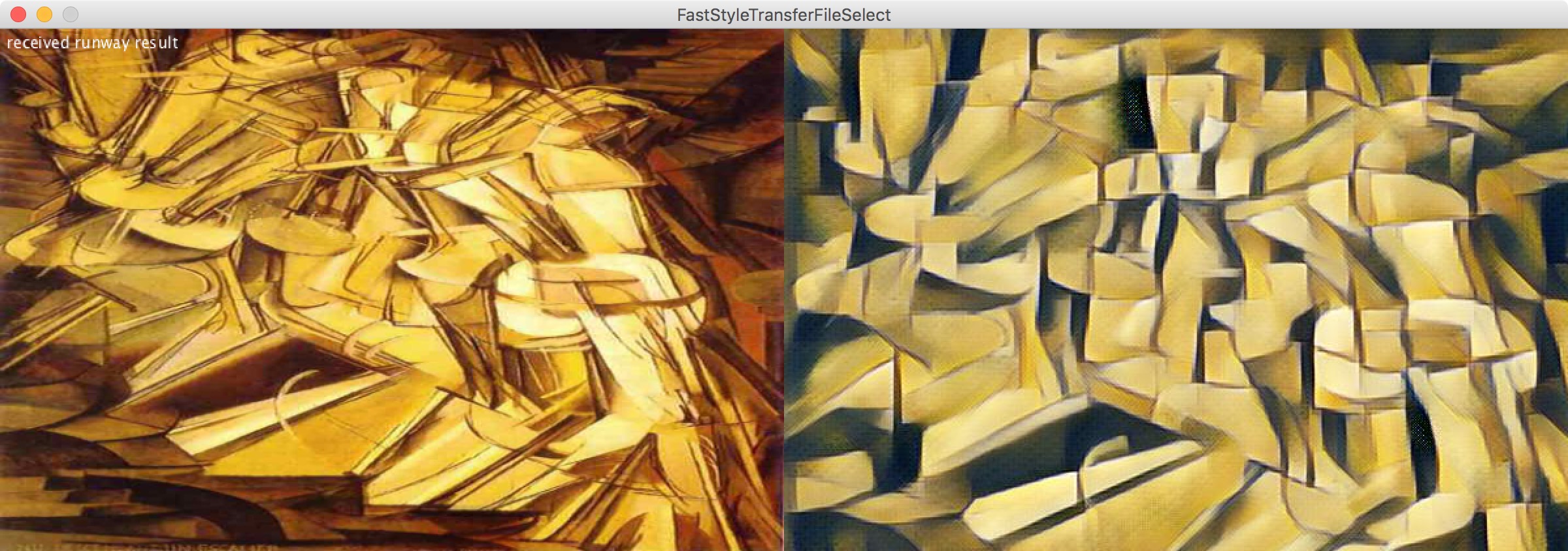

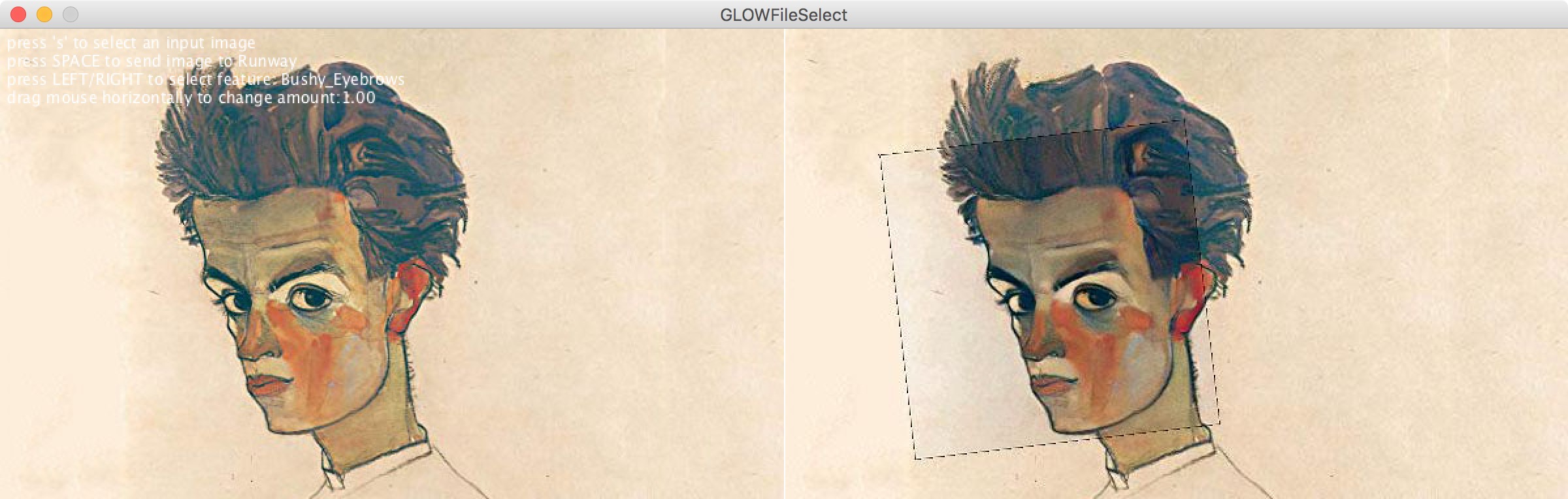

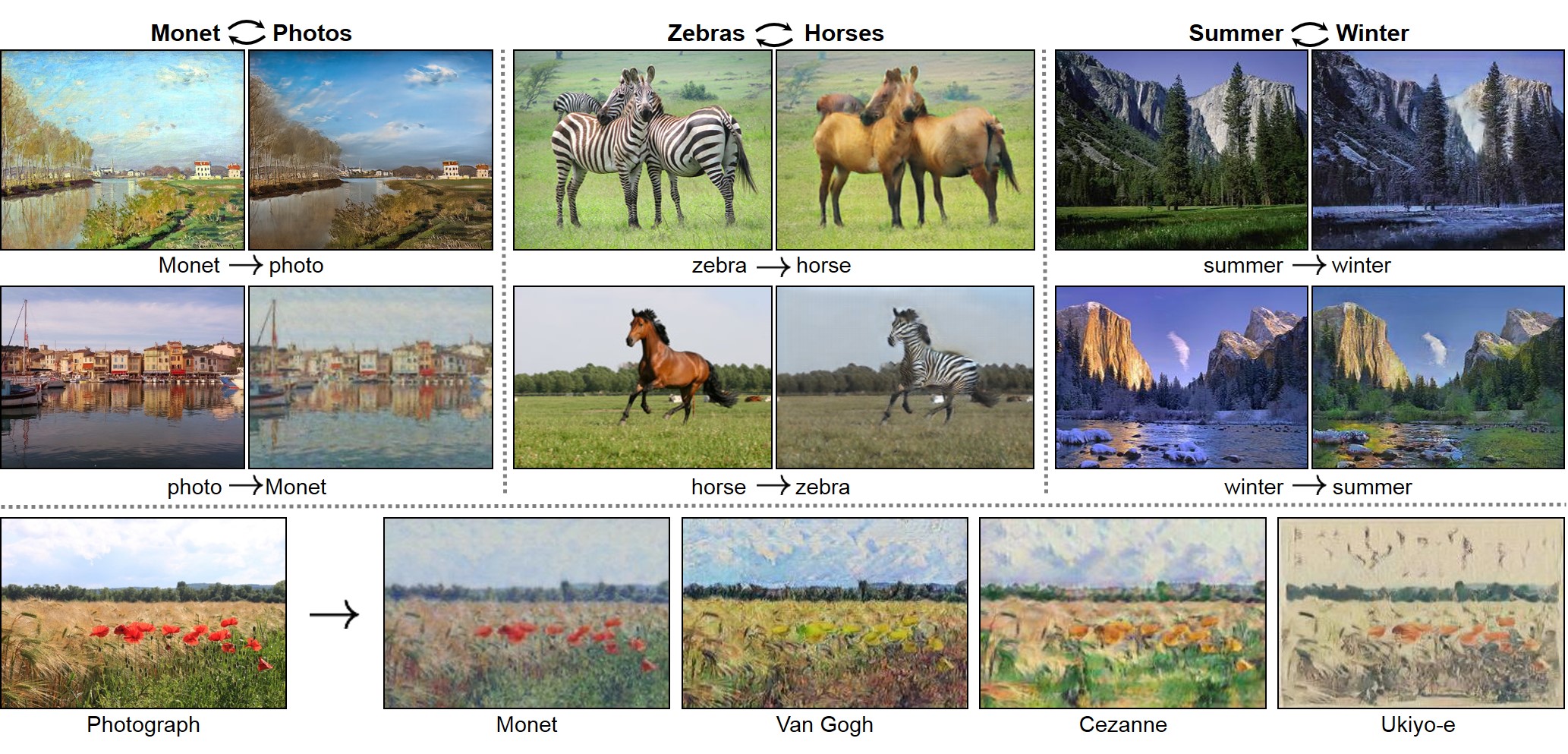

This year alone has seen unprecedented leaps in the area of learning-based image translation, namely CycleGAN, by Zhuet al. But experiments so far have been tailored to merely two domains at a time, and scaling them to more would re-quire an quadratic number of models to be trained. And with two-domain models taking days to train on current hardware,the number of domains quickly becomes limited by the time and resources required to process them. In this paper, we pro-pose a multi-component image translation model and training scheme which scales linearly - both in resource consumption and time required - with the number of domains. We demonstrate its capabilities on a dataset of paintings by 14different artists and on images of the four different seasons in the Alps. Note that 14 data groups would need(14choose2) =91 different CycleGAN models: a total of 182 genera-tor/discriminator pairs; whereas our model requires only 14generator/discriminator pairs

UNIT: UNsupervised Image-to-image Translation Networks : https://github.com/mingyuliutw/UNIT

Utility library to easily connect to RunwayML from Processing

Feel free to replace this paragraph with a description of the Library.

Contributed Libraries are developed, documented, and maintained by members of the Processing community. Further directions are included with each Library. For feedback and support, please post to the Discourse. We strongly encourage all Libraries to be open source, but not all of them are.

https://github.com/runwayml/processing-library

Installation

Download https://github.com/runwayml/processing-library/releases/download/latest/RunwayML.zip

Unzip into Documents > Processing > libraries

Restart Processing (if it was already running)

Create beautiful, wild and weird images with GAN.

A light Rust API for Multiresolution Stochastic Texture Synthesis [1], a non-parametric example-based algorithm for image generation.

![]()

Pixel-art scaling algorithms are graphical filters that are often used in video game emulators to enhance hand-drawn 2D pixel art graphics. The re-scaling of pixel art is a specialist sub-field of image rescaling.

As pixel-art graphics are usually in very low resolutions, they rely on careful placing of individual pixels, often with a limited palette of colors. This results in graphics that rely on a high amount of stylized visual cues to define complex shapes with very little resolution, down to individual pixels. This makes image scaling of pixel art a particularly difficult problem.

A number of specialized algorithms[1] have been developed to handle pixel-art graphics, as the traditional scaling algorithms do not take such perceptual cues into account.

Since a typical application of this technology is improving the appearance of fourth-generation and earlier video games on arcade and console emulators, many are designed to run in real time for sufficiently small input images at 60 frames per second. This places constraints on the type of programming techniques that can be used for this sort of real-time processing. Many work only on specific scale factors: 2× is the most common, with 3×, 4×, 5× and 6× also present.

Plugin for GIMP : https://github.com/bbbbbr/gimp-plugin-pixel-art-scalers

Waifu2x

https://en.wikipedia.org/wiki/Waifu2x

https://github.com/lltcggie/waifu2x-caffe/releases

https://github.com/imPRAGMA/W2XKit

https://old.reddit.com/r/WaifuUpscales/new/

https://github.com/BlueCocoa/waifu2x-ncnn-vulkan-macos/releases

https://old.reddit.com/r/Dandere2x/

https://old.reddit.com/r/waifu2x

https://github.com/AaronFeng753/Waifu2x-Extension

https://github.com/K4YT3X/video2x

https://old.reddit.com/r/AnimeResearch

Quote from a reddit comment :

A short list, ordered after output quality and setup time:

SRGAN, Super-resolution generative adversarial network : https://github.com/topics/srgan,

Other implementations: https://github.com/tensorlayer/srgan

https://github.com/brade31919/SRGAN-tensorflow

https://github.com/titu1994/Super-Resolution-using-Generative-Adversarial-Networks

Neural Enhance: https://github.com/alexjc/neural-enhance/

Photoshop: The newest PS version (19.x, since October 2017 release) also has a new upscaling method, called "Preserve Details 2.0 Upscale" but compared to SRGAN the results clearly lack sharp and fine details. You have asked for an App and PS is easy to use and can be automated.

Overview of the most popular algorithms:

https://github.com/IvoryCandy/super-resolution

(VDSR, EDSR, DCRN, SubPixelCNN, SRCNN, FSRCNN, SRGAN)

Not in the list above:

LapSRN: https://github.com/phoenix104104/LapSRN

SelfExSR: https://github.com/jbhuang0604/SelfExSR

RAISR, developed by Google:

https://github.com/MKFMIKU/RAISR

https://github.com/movehand/raisr

Despite the rise of ebooks, the interest in cover design and the look of physical books is probably stronger than ever. The rate of books being published grows ever higher, and they all need covers, even if it's just a thumbnail for a Kindle edition on Amazon.

Cover designers the world over have access, via online art databases and stock libraries, to a vast array of images that can be used to decorate and, with any luck, sell books. Unfortunately, all those designers tend to have access to the same databases and libraries, which means you sometimes end up with books which feature photographs that look strangely familiar…

Declassifier

Custom Software, COCO Dataset (corrected). 2 days 5 hours 25 min. 2019

Declassifier processes pictures using the YOLO computer vision algorithm. Instead of showing the program's prediction, the picture is overlayed with images from COCO, the training dataset from which the algorithm learned in the first place.

The data by which machine learning algorithms learn to make predictions is hardly ever shown, let alone credited. By doing both, Declassifier exposes the myth of magically intelligent machines, instead applauding the photographers who made the technical achievement possible. In fact, when showing the actual training pictures, credit is not only due but mandatory.

The tl;dr

We should all be automating our image compression.

Image optimization should be automated. It’s easy to forget, best practices change, and content that doesn’t go through a build pipeline can easily slip. To automate: Use imagemin or libvips for your build process. Many alternatives exist.

Most CDNs (e.g. Akamai) and third-party solutions like Cloudinary, imgix, Fastly’s Image Optimizer, Instart Logic’s SmartVision or ImageOptim API offer comprehensive automated image optimization solutions.

The amount of time you’ll spend reading blog posts and tweaking your configuration is greater than the monthly fee for a service (Cloudinary has a free tier). If you don’t want to outsource this work for cost or latency concerns, the open-source options above are solid. Projects like Imageflow or Thumbor enable self-hosted alternatives.

https://ganbreeder.app/i?k=1f98015a7ce950101ec1c5ee

Ganbreeder is a collaborative art tool for discovering images. Images are 'bred' by having children, mixing with other images and being shared via their URL. This is an experiment in using breeding + sharing as methods of exploring high complexity spaces. GAN's are simply the engine enabling this. Ganbreeder is very similar to, and named after, Picbreeder. It is also inspired by an earlier project of mine Facebook Graffiti which demonstrated the creative capacity of crowds. Ganbreeder uses these BigGAN models and the source code is available.

We call them "seeds". Each seed is a machine learning example you can start playing with. Explore, learn and grow them into whatever you like.

It's all a game of construction — some with a brush, some with a shovel, some choose a pen.

Jackson Pollock

…and some, including myself, choose neural networks. I’m an artist, and I've also been building commercial software for a long while. But art and software used to be two parallel tracks in my life; save for the occasional foray into generative art with Processing and computational photography, all my art was analog… until I discovered GANs (Generative Adversarial Networks).

Since the invention of GANs in 2014, the machine learning community has produced a number of deep, technical pieces about the technique (such as this one). This is not one of those pieces. Instead, I want to share in broad strokes some reasons why GANs are excellent artistic tools and the methods I have developed for creating my GAN-augmented art.

https://github.com/junyanz/pytorch-CycleGAN-and-pix2pix

https://people.eecs.berkeley.edu/~taesung_park/CycleGAN/datasets/

https://github.com/eriklindernoren/PyTorch-GAN

https://heartbeat.fritz.ai/introduction-to-generative-adversarial-networks-gans-35ef44f21193

https://github.com/nightrome/really-awesome-gan

https://github.com/zhangqianhui/AdversarialNetsPapers

https://github.com/io99/Resources

https://github.com/yunjey/pytorch-tutorial

https://github.com/bharathgs/Awesome-pytorch-list

https://old.reddit.com/r/MachineLearning

http://www.codingwoman.com/generative-adversarial-networks-entertaining-intro/

https://medium.com/@jonathan_hui/gan-gan-series-2d279f906e7b

https://www.youtube.com/channel/UC9OeZkIwhzfv-_Cb7fCikLQ/videos

https://www.youtube.com/watch?list=PLZHQObOWTQDNU6R1_67000Dx_ZCJB-3pi&v=aircAruvnKk

https://www.youtube.com/watch?list=PLxt59R_fWVzT9bDxA76AHm3ig0Gg9S3So&v=ZzWaow1Rvho

Repaint your picture in the style of your favorite artist.

About

Our mission is to provide a novel artistic painting tool that allows everyone to create and share artistic pictures with just a few clicks. All you need to do is upload a photo and choose your favorite style. Our servers will then render your artwork for you. We apply an algorithm developed by Leon Gatys, Alexander Ecker and Matthias Bethge. The website was originally created by Łukasz Kidziński and Michał Warchoł. We have now joined forces to provide you with the latest technology in even more accessible way.

Our Team

Five researchers from the Bethge lab at University of Tübingen (Germany), CHILI Lab at École polytechnique fédérale de Lausanne (Switzerland) and Université catholique de Louvain (Belgium).

The Aziz! Light Crew Freeliner is a live geometric animation software built with Processing. The documentation is a little sparse and the ux is rough but powerfull.

Also known as a!LcFreeliner. This software is feature-full geometric animation software built for live projection mapping. Development started in fall 2013.

It is made with Processing. It is licensed as GNU Lesser General Public License. A official release will occur once I have solidified the new architecture developed during this semester.

Using a computer mouse cursor the user can create geometric forms composed of line segments. These can be created in groups, also known as segmentGroup. To facilitate this task the software has features such as centering, snapping, nudging, fixed length segments, fixed angles, grids, and mouse sensitivity adjustment.

This project will fund the production, via crowd sourcing, of a never-before-released translation of Herman Melville's classic Moby Dick in Japanese emoji icons.

Methodology

Each of Moby Dick's 6,438 sentences will be translated 3 times by different Amazon Mechanical Turk workers. Those results will then be voted on by another set of workers, and the most popular version of each sentence will be selected for inclusion in the book.

Here is a sample of a test run I've done on the first couple of chapters:

In the book, the sentences will be arranged with the Emoji on top of the page and the English sentence at the bottom.

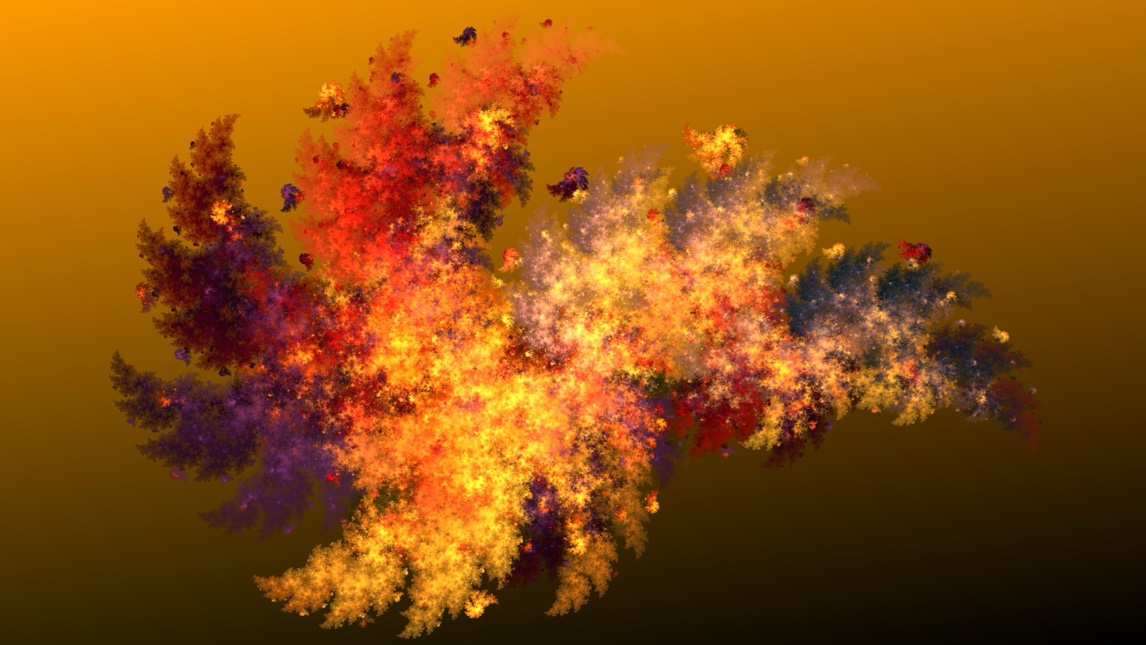

Wildfire is a free and user-friendly image-processing software, mostly known for its sophisticated flame-fractal-generator. It is Java-based, open-source and runs on any major computer-plattform. There is also a special Android-version for mobile devices.

An extensive and extendable painting application with an extensive range of features, including: both bitmap and vector graphics; multiple layers; five kinds of color picker; patterns, textures, and gradients; dashed lines and arrowheads; a spirograph generator; and even a cellular automaton tool (pictured below).

Gifski converts video frames to GIF animations using pngquant's fancy features for efficient cross-frame palettes and temporal dithering. It produces animated GIFs that use thousands of colors per frame.

Release : https://github.com/ImageOptim/gifski/releases

Usage

I haven't finished implementing proper video import yet, so for now you need ffmpeg to convert video to PNG frames first:

ffmpeg -i video.mp4 frame%04d.png

and then make the GIF from the frames:

gifski -o file.gif frame*.png

See gifski -h for more options. The conversion might be a bit slow, because it takes a lot of effort to nicely massage these pixels. Also, you should suffer waiting like the poor users who will be downloading these huge files.