Our wiki is a comprehensive encyclopedia of online and offline aesthetics! We are a community dedicated to the identification, observation, and documentation of visual schemata.

What is an aesthetic? Why does everyone always argue about what aesthetics should be on this wiki?

The short answer: A collection of visual schemata that creates a "mood."

Some types of aesthetics include:

Aesthetics that originated in Internet communities (Ex: Cottagecore, Dark Academia)

National cultures (Ex: Americana, Traditional Polish). Note: Most articles that try to describe a national culture will be deleted, because these articles need to meet a higher standard of quality and risk stereotyping a nation.

Genres of fiction with established visual tropes (Ex: Cyberpunk, Gothic)

Holidays with iconic imagery and colors (Ex: Christmas, Halloween)

Locations that have expected activities, components, and types of people (Ex: Fanfare, Urbancore)

Music genres with consistent visual motifs present in cover art, music videos, etc. (Ex: City Pop, Emo)

This does not mean all music genres should be present. For example, Pop and Alternative bands do not share visual traits.

Periods of history with distinct visuals (Ex: Victorian, Y2K)

Stereotypes (Ex: Brocore, VSCO)

Subcultures that share music genres and fashion styles (Ex: Raver, Skinheads)

The long answer:

The word "aesthetic" originated in philosophy, in discussions of what beauty is, how we should approach it, and why it exists. However, Millennials and Generation Z started using the term as an adjective describing what they personally consider beautiful. For example: "After Denise finished watching The Virgin Suicides, she said, 'Wow. That was so aesthetic.'"

Aesthetics have now come to mean a collection of images, colors, objects, music, and writings that creates a specific emotion, purpose, and community. What counts depends heavily on personal taste, cultural background, and exposure to different pieces of media. This definition is not official and can be debated. No dictionary definition yet captures the complexity of this phenomenon, which arose among young Internet users. Rather, people who participate in the community "know it when they see it." These elements are constantly debated, as is the question of whether some aesthetics exist or are valid at all, especially since everyone's personal life shapes their opinions.

Here is an example of an ongoing debate within the community. Whether Lolita is an aesthetic depends on what counts as a visual element. On one hand, lace, petticoats, and bows are valid elements of a visual schema, and they combine to evoke feelings of kawaii, de-sexualization, rebellion, and an appreciation of antiques. On the other hand, aesthetics are made up of elements beyond fashion, such as home decor or music. From this view, the fashion as a whole is the visual element, rather than the individual components of the coord/outfit, and that element belongs to broader schemas such as Goth and Victorian. What counts as an element, and what qualifies as evoking an emotion, is a complicated subject.

So right now, the community is still trying to define the subject. Whether something fits into a larger schema or is distinct enough to warrant its own aesthetic is difficult to say, and the answer depends on whom you ask.

CLIP retrieval works by converting the text query to a CLIP embedding, then using that embedding to query a kNN index of CLIP image embeddings.

https://github.com/rom1504/clip-retrieval
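The query step described above can be sketched as a nearest-neighbour search by cosine similarity. This is a minimal illustration, not clip-retrieval's code: the brute-force scan stands in for a real approximate kNN index, and the three-dimensional "embeddings" and file names are made up (real CLIP embeddings have hundreds of dimensions and come from the CLIP text and image encoders).

```python
import math


def normalize(v):
    """Scale a vector to unit length so that dot products equal cosine similarity."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]


def knn(query, index, k=2):
    """Return the k (score, image_id) pairs in `index` most similar to `query`.

    `index` maps an image id to its embedding. A brute-force scan stands in
    for the approximate nearest-neighbour index a real system would use.
    """
    q = normalize(query)
    scored = []
    for image_id, emb in index.items():
        e = normalize(emb)
        score = sum(a * b for a, b in zip(q, e))
        scored.append((score, image_id))
    scored.sort(reverse=True)  # highest similarity first
    return scored[:k]


# Hypothetical toy embeddings, standing in for CLIP image embeddings
image_index = {
    "cottage.jpg": [0.9, 0.1, 0.0],
    "neon_city.jpg": [0.0, 0.2, 0.95],
    "library.jpg": [0.5, 0.8, 0.1],
}
text_embedding = [0.8, 0.3, 0.0]  # pretend output of a CLIP text encoder
print(knn(text_embedding, image_index, k=2))
```

Normalizing both sides first means the ranking reflects direction only, which is how CLIP embeddings are usually compared.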

The road to wisdom?

-- Well, it's plain

and simple to express:

Err

and err

and err again

but less

and less

and less.

Hence the name LessWrong. We might never attain perfect understanding of the world, but we can at least strive to become less and less wrong each day.

We are a community dedicated to improving our reasoning and decision-making. We seek to hold true beliefs and to be effective at accomplishing our goals. More generally, we work to develop and practice the art of human rationality.[1]

To that end, LessWrong is a place to 1) develop and train rationality, and 2) apply one’s rationality to real-world problems.

“Although our study doesn’t present ways to mitigate negative hunger-induced emotions, research suggests that being able to label an emotion can help people to regulate it, such as by recognising that we feel angry simply because we are hungry. Therefore, greater awareness of being ‘hangry’ could reduce the likelihood that hunger results in negative emotions and behaviours in individuals.”

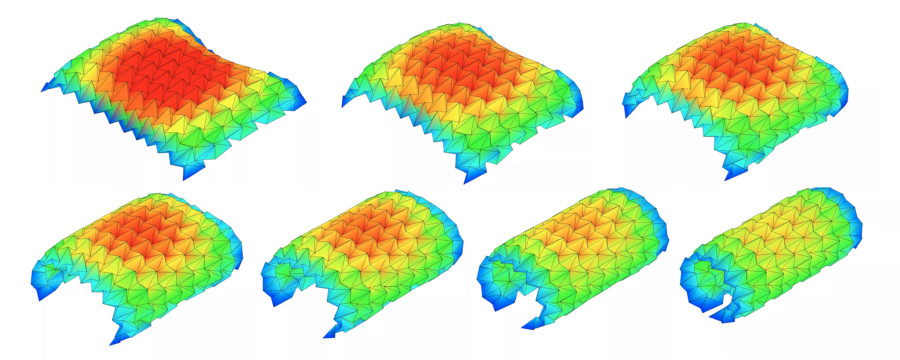

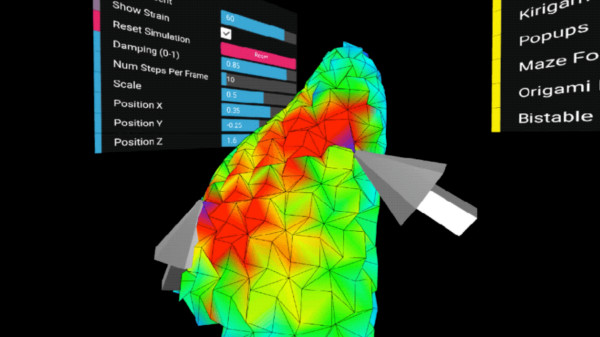

This app allows you to simulate how any origami crease pattern will fold. It may look a little different from what you typically think of as "origami" - rather than folding paper in a set of sequential steps, this simulation attempts to fold every crease simultaneously. It does this by iteratively solving for small displacements in the geometry of an initially flat sheet due to forces exerted by creases. You can read more about it in our paper:

Fast, Interactive Origami Simulation using GPU Computation by Amanda Ghassaei, Erik Demaine, and Neil Gershenfeld (7OSME)

All simulation methods were written from scratch and are executed in parallel in several GPU fragment shaders for fast performance. The solver extends work from the following sources:

Origami Folding: A Structural Engineering Approach by Mark Schenk and Simon D. Guest

Freeform Variations of Origami by Tomohiro Tachi
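The idea of "iteratively solving for small displacements" can be illustrated with a toy damped spring network. This is only a sketch under heavy simplifying assumptions: it models edge-length (bar) springs alone, omits the crease and face forces that actually make the sheet fold, runs on the CPU, and uses made-up stiffness and damping values. It is not the app's solver. Every node is moved at once on each step, echoing the "fold every crease simultaneously" approach.

```python
import math


def relax(positions, bars, stiffness=0.2, damping=0.8, steps=1000):
    """Iteratively move nodes until every bar approaches its rest length.

    positions: list of [x, y] node coordinates (updated in place)
    bars: list of (i, j, rest_length) springs between node i and node j
    Each step computes all spring forces from the current geometry, then
    applies a small damped displacement to every node simultaneously.
    """
    velocities = [[0.0, 0.0] for _ in positions]
    for _ in range(steps):
        forces = [[0.0, 0.0] for _ in positions]
        for i, j, rest in bars:
            dx = positions[j][0] - positions[i][0]
            dy = positions[j][1] - positions[i][1]
            length = math.hypot(dx, dy)
            if length == 0:
                continue  # coincident nodes: direction undefined, skip this bar
            # Hooke's law: a stretched bar pulls its ends together, a compressed one pushes
            f = stiffness * (length - rest)
            fx, fy = f * dx / length, f * dy / length
            forces[i][0] += fx
            forces[i][1] += fy
            forces[j][0] -= fx
            forces[j][1] -= fy
        for n, (fx, fy) in enumerate(forces):
            # Unit mass, unit time step: damped velocity update, then position update
            velocities[n][0] = damping * velocities[n][0] + fx
            velocities[n][1] = damping * velocities[n][1] + fy
            positions[n][0] += velocities[n][0]
            positions[n][1] += velocities[n][1]
    return positions


# A distorted triangle relaxing toward unit-length edges
triangle = [[0.0, 0.0], [2.0, 0.0], [0.5, 1.5]]
edges = [(0, 1, 1.0), (1, 2, 1.0), (0, 2, 1.0)]
relax(triangle, edges)
```

Damping matters here: without it the nodes would oscillate around the rest shape instead of settling.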

This app also uses the methods described in Simple Simulation of Curved Folds Based on Ruling-aware Triangulation to import curved crease patterns and pre-process them in a way that realistically simulates the bending between the creases.

Originally built by Amanda Ghassaei as a final project for Geometric Folding Algorithms. Other contributors include Sasaki Kosuke, Erik Demaine, and others. Code available on GitHub. If you have interesting crease patterns that would make good demo files, please send them to me (Amanda) so I can add them to the Examples menu.

https://cuttle.xyz/@forresto/Origami-simulator-tips-W4lDXuB5m0xh

https://nitter.42l.fr/kellianderson/status/1454871569981902848

Bitmap Image to 'Pixel Perfect' Vector Graphic or 3D model

The HTML5 application on this page converts your bitmap image online into a Scalable Vector Graphics or 3D model.

The result is 'pixel perfect'/lossless.
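One way such a lossless conversion can work is to emit one SVG rectangle per pixel, merging horizontal runs of the same color. The sketch below is an assumption about the general technique, not this application's code; it covers only the SVG output (not the 3D model), and the `None`-means-transparent convention and sample sprite are illustrative.

```python
def bitmap_to_svg(pixels):
    """Convert a grid of color strings into an SVG built from unit-height rectangles.

    pixels: list of rows; each entry is a CSS color string or None (transparent).
    Horizontal runs of identical colors become a single <rect>, and every pixel
    maps to an exact unit square, so no information is lost.
    """
    height = len(pixels)
    width = len(pixels[0]) if height else 0
    rects = []
    for y, row in enumerate(pixels):
        x = 0
        while x < width:
            color = row[x]
            run = 1
            while x + run < width and row[x + run] == color:
                run += 1  # extend the run while the color repeats
            if color is not None:
                rects.append(
                    f'<rect x="{x}" y="{y}" width="{run}" height="1" fill="{color}"/>'
                )
            x += run
    body = "\n  ".join(rects)
    return (
        f'<svg xmlns="http://www.w3.org/2000/svg" width="{width}" height="{height}" '
        f'viewBox="0 0 {width} {height}" shape-rendering="crispEdges">\n  {body}\n</svg>'
    )


# Hypothetical 3x2 sprite
sprite = [
    ["#000", "#000", None],
    [None, "#f00", "#f00"],
]
svg = bitmap_to_svg(sprite)
print(svg)
```

`shape-rendering="crispEdges"` asks the renderer not to anti-alias the rectangle edges, which keeps the result looking like the original pixels at any scale.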

Hydra is a platform for live coding visuals, in which each connected browser window can be used as a node of a modular and distributed video synthesizer.

Built using WebRTC (peer-to-peer web streaming) and WebGL, hydra allows each connected browser/device/person to output a video signal or stream, and receive and modify streams from other browsers/devices/people. The API is inspired by analog modular synthesis, in which multiple visual sources (oscillators, cameras, application windows, other connected windows) can be transformed, modulated, and composited via combining sequences of functions.

Features:

Written in JavaScript and compatible with other JavaScript libraries

Available as a platform as well as a set of standalone modules

Cross-platform and requires no installation (runs in the browser)

Also available as a package for live coding from within the Atom text editor

Experimental and forever evolving !!

Conceptual artist exploring our use of technology and its ethical and aesthetic implications.


Filipe Vilas-Boas was born in Portugal in 1981. He is a self-taught conceptual artist currently living and working in Paris. Without being a naive tech utopian or a reluctant technophobe, he explores our use of technology and its ethical and aesthetic implications. His installations, performances and conceptual artworks question the global digitalization of our societies, mostly by merging our physical (IRL) and digital (URL) worlds.

His works were highlighted in the Portuguese Emerging Art Books, 2018 & 2019 Edition, and have been shown internationally, notably at Nuit Blanche Paris, UNESCO, Biennale Siana, Le Cube, The French Ministry of Culture, Biennale Némo - Le 104 (FR), Athens Digital Art Festival, Monitor - Heraklion Contemporary Arts Festival (GR), Zaratan, MAAT Museum (PT) and the Tate Modern (UK).

20 alternative interfaces for creating and editing images and text

https://github.com/constraint-systems

Flow

An experimental image editor that lets you set and direct pixel-flows.

Fracture

Shatter and recombine images using a grid of viewports.

Tri

Tri is an experimental image distorter. You can choose an image to render using a WebGL quad, adjust the texture and position coordinates to create different distortions, and save the result.

Tile

Layout images using a tiling tree layout. Move, split, and resize images using keyboard controls.

Sift

Slice an image into multiple layers. You can offset the slices to create interference patterns and pseudo-3D effects.

Automadraw

Draw and evolve your drawing using cellular automata on a pixel grid with two keyboard-controlled cursors.

Span

Lay out and rearrange text, line by line, using keyboard controls.

Stamp

Image-paint from a source image palette using keyboard controls.

Collapse

Collapse an image into itself using ranked superpixels.

Res

Selectively pixelate an image using a compression algorithm.

Rgb

Pixel-paint using keyboard controls.

Face

Edit both the text and the font it is rendered in.

Pal

Apply an eight-color terminal color scheme to an image. Use the keyboard controls to choose a theme, set thresholds, and cycle hues.

Bix

Draw on binary to glitch text.

Diptych

Pixel-reflow an image to match the dimensions of your text. Save the result as a diptych.

Slide

Divide and slide-stretch an image using keyboard controls.

Freeconfig

Push around image pixels in blocks.

Moire

Generate angular skyscapes using Asteroids-like ship controls.

Hex

A keyboard-driven, grid-based drawing tool.

Etch

A keyboard-based pixel drawing tool.
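Automadraw's description says drawings "evolve" using cellular automata on a pixel grid, but not which rule it applies. As a stand-in, here is one generation of Conway's Game of Life over a 0/1 pixel grid; the rule choice and the treat-edges-as-dead convention are assumptions.

```python
def life_step(grid):
    """Advance a 0/1 pixel grid one generation under Conway's Game of Life.

    Cells beyond the edge count as dead. A live cell survives with 2 or 3
    live neighbours; a dead cell becomes live with exactly 3.
    """
    rows, cols = len(grid), len(grid[0])
    nxt = [[0] * cols for _ in range(rows)]
    for r in range(rows):
        for c in range(cols):
            # Count live cells in the 3x3 neighbourhood, excluding the cell itself
            neighbours = sum(
                grid[rr][cc]
                for rr in range(max(0, r - 1), min(rows, r + 2))
                for cc in range(max(0, c - 1), min(cols, c + 2))
                if (rr, cc) != (r, c)
            )
            if grid[r][c] and neighbours in (2, 3):
                nxt[r][c] = 1
            elif not grid[r][c] and neighbours == 3:
                nxt[r][c] = 1
    return nxt


# A horizontal "blinker" drawn on a 5x5 pixel grid
drawing = [
    [0, 0, 0, 0, 0],
    [0, 0, 0, 0, 0],
    [0, 1, 1, 1, 0],
    [0, 0, 0, 0, 0],
    [0, 0, 0, 0, 0],
]
evolved = life_step(drawing)
```

Applying the step repeatedly to a hand-drawn grid is what turns a static drawing into an evolving one.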

About

Constraint Systems is a collection of experimental web-based creative tools. They are an ongoing attempt to explore alternative ways of interacting with pixels and text on a computer screen. I hope to someday build these ideas into something larger, but the plan for now is to keep the scopes small and the releases quick.
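Pal's idea of applying an eight-color terminal scheme with thresholds can be sketched by thresholding each RGB channel to one bit, which yields exactly eight combinations that line up with the standard ANSI color order (black, red, green, yellow, blue, magenta, cyan, white). The theme's RGB values, the single shared threshold, and the function names are hypothetical, and hue cycling is not modeled; this is not Pal's implementation.

```python
# Hypothetical theme, listed in ANSI order: black, red, green, yellow, blue, magenta, cyan, white
THEME = [
    (0, 0, 0), (205, 49, 49), (13, 188, 121), (229, 229, 16),
    (36, 114, 200), (188, 63, 188), (17, 168, 205), (229, 229, 229),
]


def to_ansi_index(rgb, threshold=128):
    """Map an (r, g, b) pixel to one of 8 ANSI slots by thresholding each channel.

    Red sets bit 0, green bit 1 and blue bit 2, which matches the ANSI
    ordering (e.g. red + green = 3 = yellow, green + blue = 6 = cyan).
    """
    r, g, b = rgb
    return int(r >= threshold) | (int(g >= threshold) << 1) | (int(b >= threshold) << 2)


def apply_theme(pixels, theme=THEME, threshold=128):
    """Replace every pixel with the theme color of its ANSI slot."""
    return [[theme[to_ansi_index(p, threshold)] for p in row] for row in pixels]


image = [[(255, 0, 0), (10, 200, 250)], [(30, 30, 30), (240, 240, 240)]]
recolored = apply_theme(image)
```

Raising or lowering `threshold` shifts how many pixels land in the bright slots, which is roughly what a threshold control would let you explore.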

New Art City’s mission is to develop an accessible toolkit for building virtual installations that show born-digital artifacts alongside digitized works of traditional media.

Our curation and product design prioritize those who are disadvantaged by structural injustice. An inclusive and redistributive community is as important to our project as the toolkit itself.

Web scraping describes techniques for automatically downloading and processing web content, or converting online text and other media into structured data that can then be used for various purposes. In short, the user writes a program to browse and analyze the web on their behalf, rather than doing so manually. This is a common practice in Silicon Valley, where open HTML pages are transformed into private property: Facebook began as a (horny) web scraping project, as did Google and all other search engines. Web scraping is also frequently used to acquire the massive datasets needed to train machine learning models, and has become an important research tool in fields such as journalism and sociology.
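The "converting online text into structured data" step can be as small as a parser that pulls every link out of a page. A minimal sketch with Python's standard-library `html.parser`; the sample HTML is made up, and fetching a live page (for example with `urllib.request.urlopen`) is left out so the example runs offline.

```python
from html.parser import HTMLParser


class LinkScraper(HTMLParser):
    """Collect (link text, href) pairs from every <a> tag in a page."""

    def __init__(self):
        super().__init__()
        self.links = []
        self._href = None   # href of the <a> tag we are currently inside, if any
        self._text = []     # text fragments seen inside that tag

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            self._href = dict(attrs).get("href")
            self._text = []

    def handle_data(self, data):
        if self._href is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == "a" and self._href is not None:
            self.links.append(("".join(self._text).strip(), self._href))
            self._href = None


# Hypothetical page fragment
page = """<ul>
  <li><a href="/press/1">Quarterly earnings</a></li>
  <li><a href="/press/2">New <b>data center</b></a></li>
</ul>"""
scraper = LinkScraper()
scraper.feed(page)
scraper.close()
print(scraper.links)
```

The result is a plain list of tuples, ready to be written to CSV, diffed against yesterday's scrape, or sorted and filtered.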

I define "scrapism" as the practice of web scraping for artistic, emotional, and critical ends. It combines aspects of data journalism, conceptual art, and hoarding, and offers a methodology to make sense of a world in which everything we do is mediated by internet companies. These companies surveil us, vacuum up every trace we leave behind, exploit our experiences and insert themselves into every possible moment. But in turn they also leave their own traces online, traces which when collected, filtered, and sorted can reveal (and possibly even alter) power relations. The premise of scrapism is that everything we need to know about power is online, hiding in plain sight.

This is a work-in-progress guide to web scraping as an artistic and critical practice, created by Sam Lavigne. I will be updating it over the coming months! I'll also be doing occasional live demos either on Twitch or YouTube.

Orca is an esoteric programming language designed by @hundredrabbits to create procedural sequencers.

This playground lets you use Orca and its companion app Pilot directly in the browser and allows you to publish your creations by sharing their URL.

Originally captured as the medium for Ed Ruscha’s creative work, the more than 65,000 photographs selected from this archive present a unique view of one of Los Angeles’ quintessential streets, Sunset Boulevard, and how it has changed over the past 50 years. Ed Ruscha, with help from Getty and Stamen Design, is making this amazing collection accessible to you: explore his images of Sunset and discover your own story of Los Angeles.

Comprehensive overview of existing tools, strategies and thoughts on interacting with your data

TLDR: when I read, I try to read actively, which for me mainly involves using various tools to annotate content: highlighting and leaving notes as I read. I've programmed data providers that parse these annotations and provide a nice interface for interacting with the data from other tools. My automated scripts use them to render the annotations as human-readable, searchable plaintext and to generate TODOs/spaced repetition items.

In this post I'm gonna elaborate on all of that and give some motivation, a review of these tools (mainly focusing on open-source, and thus extensible, software), and my vision of how they could work in an ideal world. I won't try to convince you that my method of reading and interacting with information is superior for you: it doesn't have to be, and there are people out there more eloquent than me who do that. I assume you want this too and are wondering about the practical details.

This is an interactive editor for making face filters with WebGL.

The language below is called GLSL, you can edit it to change the effect.

a project to excavate shut down, abandoned web ruins and restore them to surfable, accessible, searchable, remixable condition

somewhere between a library and a living museum, we're working on experimental new ways to close the gap between archival and visibility of the web that was lost

launched

geocities

myspace music

on deck

aol hometown

netscape web sites

geocities japan

FortuneCity

tba

This is a directory of 249 links in 73 categories.

This directory is somewhat inspired by the old, failed link collections like the original Yahoo! and DMOZ. They were terrible—you couldn’t find anything, but what you did find was often unexpected. My ‘archivist’/‘forager’ tendencies want to do this.

Linking has kind of died in the wild. Google views a site like this as a link farm—so, directories have died off. Yeah, well, I find many of the ‘link farms’ in my Web/Directory list to be immensely ‘great’ and ‘satisfying’. More than anything, I hope mine intrigues you to build your own. This directory forms my connection to the rest of society.

I reserve the right to link to dipshits and crazies. I link to what piques my curiosity, what amazes me or what horrifies me. This includes you. (You know you want to participate.)

You might also look at it like: maybe I’ve friended these links. But instead of putting them in a big number that represents my friends—my 249 friends, you see—I list my friends out neatly and try to coax you to meet them.

Perhaps there is no need for friending. For likes. For upvotes. For hashtags. For boosts. For trending. For rank. For followers. For an algorithm.

Perhaps plain ole linking—and spending time telling you why I linked—is good enough, was always good enough. Perhaps it’s superior!

No Home Like Place

Airbnb is a global hotel filled with the same recurring items. Bed, chair, potted plant, all catered to our cosmopolitan sensibilities. We end up in a place that's completely interchangeable; a room is a room is a room. An algorithm finds these recurring items and replaces them with the same items from other listings.

Audio stream : http://icecast.spc.org:8000/longplayer

Longplayer is a one thousand year long musical composition. It began playing at midnight on the 31st of December 1999, and will continue to play without repetition until the last moment of 2999, at which point it will complete its cycle and begin again. Conceived and composed by Jem Finer, it was originally produced as an Artangel commission, and is now in the care of the Longplayer Trust.

How does Longplayer work?

Early calculations made while trying to establish the correct increments. At the bottom is an estimation of the playing positions on the 7th of January 2000 based on these values.

The composition of Longplayer results from the application of simple and precise rules to six short pieces of music. Six sections from these pieces – one from each – are playing simultaneously at all times. Longplayer chooses and combines these sections in such a way that no combination is repeated until exactly one thousand years has passed. At this point the composition arrives back at the point at which it first started. In effect Longplayer is an infinite piece of music repeating every thousand years – a millennial loop.

The six short pieces of music are transpositions of a 20’20” score for Tibetan Singing Bowls, the ‘source music’.[1] These transpositions vary from the original not only in pitch but also, proportionally, in duration.[2]

Every two minutes a starting point in each of the six pieces is calculated, from which they then play for the next two minutes. Each starting point is calculated by adding a specific length of time to its previous starting point.[3] For each of the six pieces of music this length of time is unique and unvarying. The relationships between these six precisely calculated increments are what gives Longplayer its exact one thousand year long duration.
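The stepping rule above (every two minutes, each piece's starting point advances by its own fixed increment and wraps around at the end of that piece) can be sketched as follows. The real increments are not given here, so the transposition lengths and increments below are made up, starting offsets are assumed to be zero, and "midnight on the 31st of December 1999" is read as 00:00 on 1 January 2000.

```python
from datetime import datetime, timedelta

SOURCE_SECONDS = 20 * 60 + 20   # length of the 20'20" source score, in seconds
STEP = timedelta(minutes=2)     # a new section starts every two minutes
START = datetime(2000, 1, 1)    # assumption: the midnight ending 31 December 1999
END = datetime(3000, 1, 1)      # the moment after "the last moment of 2999"


def steps_between(start, end):
    """Number of whole two-minute sections between two moments."""
    return (end - start) // STEP


def playing_positions(n, lengths, increments):
    """Offset (seconds) at which each piece's current section starts after n steps.

    Each piece advances by its own unvarying increment every step and
    wraps around at the end of its transposed length.
    """
    return [(n * inc) % length for length, inc in zip(lengths, increments)]


# Hypothetical transposition lengths and increments, NOT Longplayer's real values
lengths = [SOURCE_SECONDS * f for f in (1, 2, 3, 4, 5, 6)]
increments = [7, 11, 13, 17, 19, 23]

now = datetime(2024, 6, 1, 12, 0)
n = steps_between(START, now)
print(n, playing_positions(n, lengths, increments))
```

Under these calendar assumptions the full thousand years contains 262,974,960 two-minute steps (365,243 days × 720 steps per day), and the real increments are chosen so that no combination of the six positions repeats within that span.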

Rates of Change

In the diagram below, the six simultaneous transpositions are represented by six circles, whose circumference represents the length of the transposed source music. The solid rectangles represent the two-minute sections currently playing. The unique increments by which these six sections advance determine their respective rates of change. These reflect different flows of time, from a glacial crawl to the almost perceptible sweep of an hour hand. The incremental advance of the third circle is so small that it will take the full thousand years to pass once through the source music. Conversely, the increment for the second circle is such that it makes its way through the music every 3.7 days. The diagram updates every two minutes.
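The two rates quoted above can be turned into rough step counts. Assuming each circle $k$ advances by a fixed increment $\Delta_k$ of its transposed length $L_k$ every two minutes (the actual values are not given here), the time $T_k$ to pass once through the music is:

```latex
\begin{aligned}
T_k &= \frac{L_k}{\Delta_k} \times 2\ \text{min} \\
T_2 \approx 3.7\ \text{days} = 5328\ \text{min} &\;\Rightarrow\; \frac{L_2}{\Delta_2} \approx 2664 \\
T_3 \approx 1000\ \text{years} &\;\Rightarrow\; \frac{L_3}{\Delta_3} \approx 1000 \times 365.2425 \times 720 \approx 2.63 \times 10^{8}
\end{aligned}
```

Relative to its length, the third circle's increment is therefore roughly $10^5$ times smaller than the second's.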

https://eclipticalis.com/

http://teropa.info/loop

https://daily.bandcamp.com/lists/generative-music-guide

https://github.com/npisanti/ofxPDSP

Today, you are an Astronaut. You are floating in inner space 100 miles above the surface of Earth. You peer through your window and this is what you see. You are people watching. These are fleeting moments.

These videos come from YouTube. They were uploaded in the last week and have titles like DSC 1234 and IMG 4321. They have almost zero previous views. They are unnamed, unedited, and unseen (by anyone but you).
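Picking "unnamed, unedited, and unseen" videos comes down to two filters: a title that looks like a camera's default file name, and a near-zero view count. A minimal sketch; the title pattern (which prefixes, separators, and digit counts to accept), the view cutoff, and the video records are assumptions, and fetching recent uploads from YouTube is not shown. This is not how Astronaut itself was built.

```python
import re

# Assumption: default camera names look like "DSC 1234", "IMG_4321", "DSC01234", "MVI 0042"
DEFAULT_TITLE = re.compile(r"^(DSC|IMG|MVI|MOV)[ _]?\d{3,5}$", re.IGNORECASE)


def looks_unnamed(title):
    """True if the title is just a camera's default file name."""
    return bool(DEFAULT_TITLE.match(title.strip()))


def pick_unseen(videos, max_views=5):
    """Keep videos with default camera titles and almost no previous views."""
    return [v for v in videos if looks_unnamed(v["title"]) and v["views"] <= max_views]


# Hypothetical video records
videos = [
    {"title": "IMG 4321", "views": 0},
    {"title": "DSC 1234", "views": 900},
    {"title": "Beach day", "views": 1},
]
print(pick_unseen(videos))
```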

Astronaut starts when you press GO. The video switches periodically. Click the button below the video to prevent the video from switching.

Astronaut was created by Andrew Wong and James Thompson on a sunny day in San Francisco in 2011.

Beautiful footage of our earth is provided by the Earth Science and Remote Sensing Unit, NASA Johnson Space Center.

Soundtrack provided by Claude Debussy's Clair de Lune, performed by Caela Harrison (cc).

Try pressing spacebar.