Have you ever wanted to ...

– export 10,000 mass-customized copies of your InDesign document?

– use spatial-tiling algorithms to create your layouts?

– pass real-time data from any source directly into your InDesign project?

– create color palettes based on algorithms?

– or simply reconsider what print can be?

basil.js is ...

– making scripting in InDesign available to designers and artists.

– in the spirit of Processing and easy to learn.

– based on JavaScript, extending the existing API of InDesign.

– a project by The Basel School of Design in Switzerland.

– released as open source in February 2013!

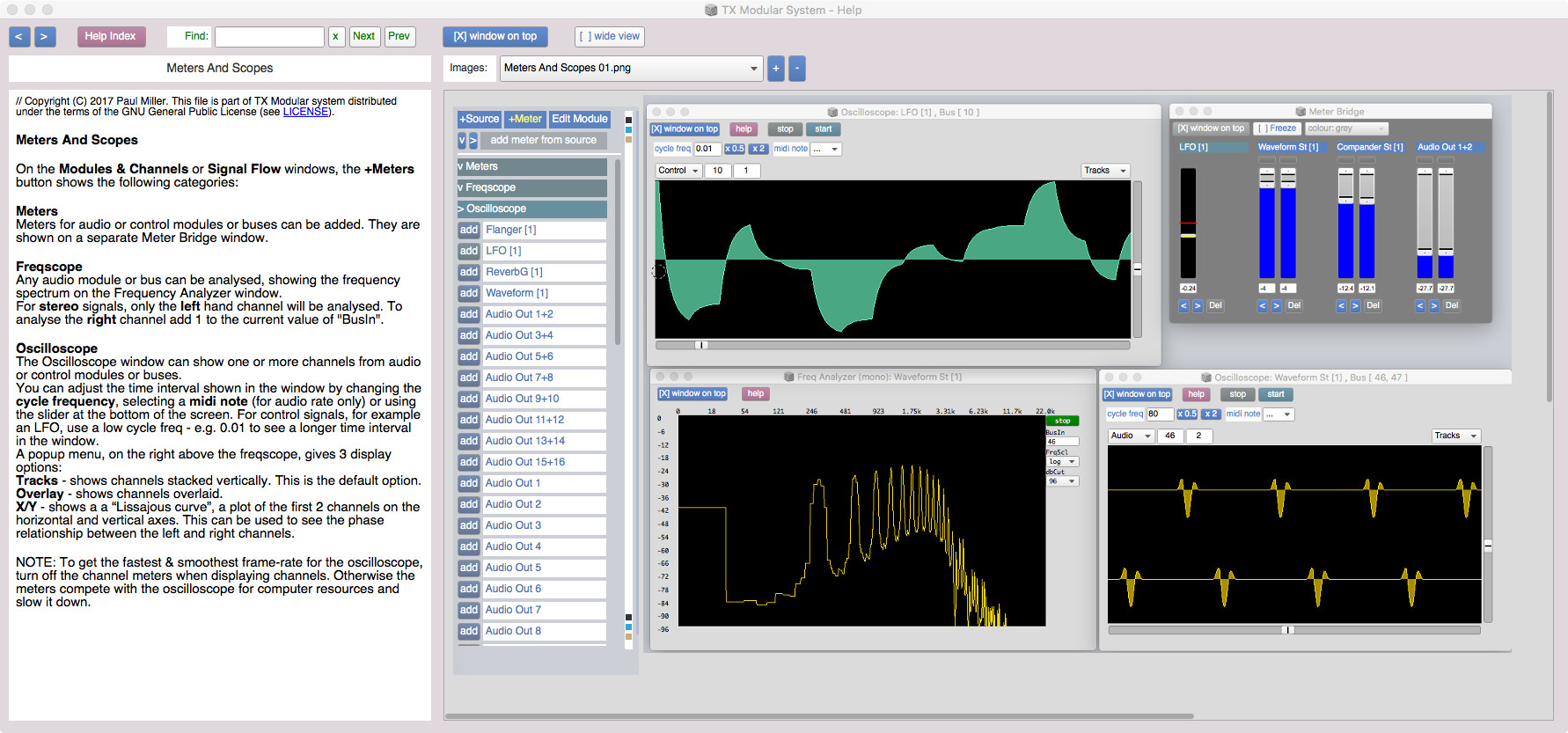

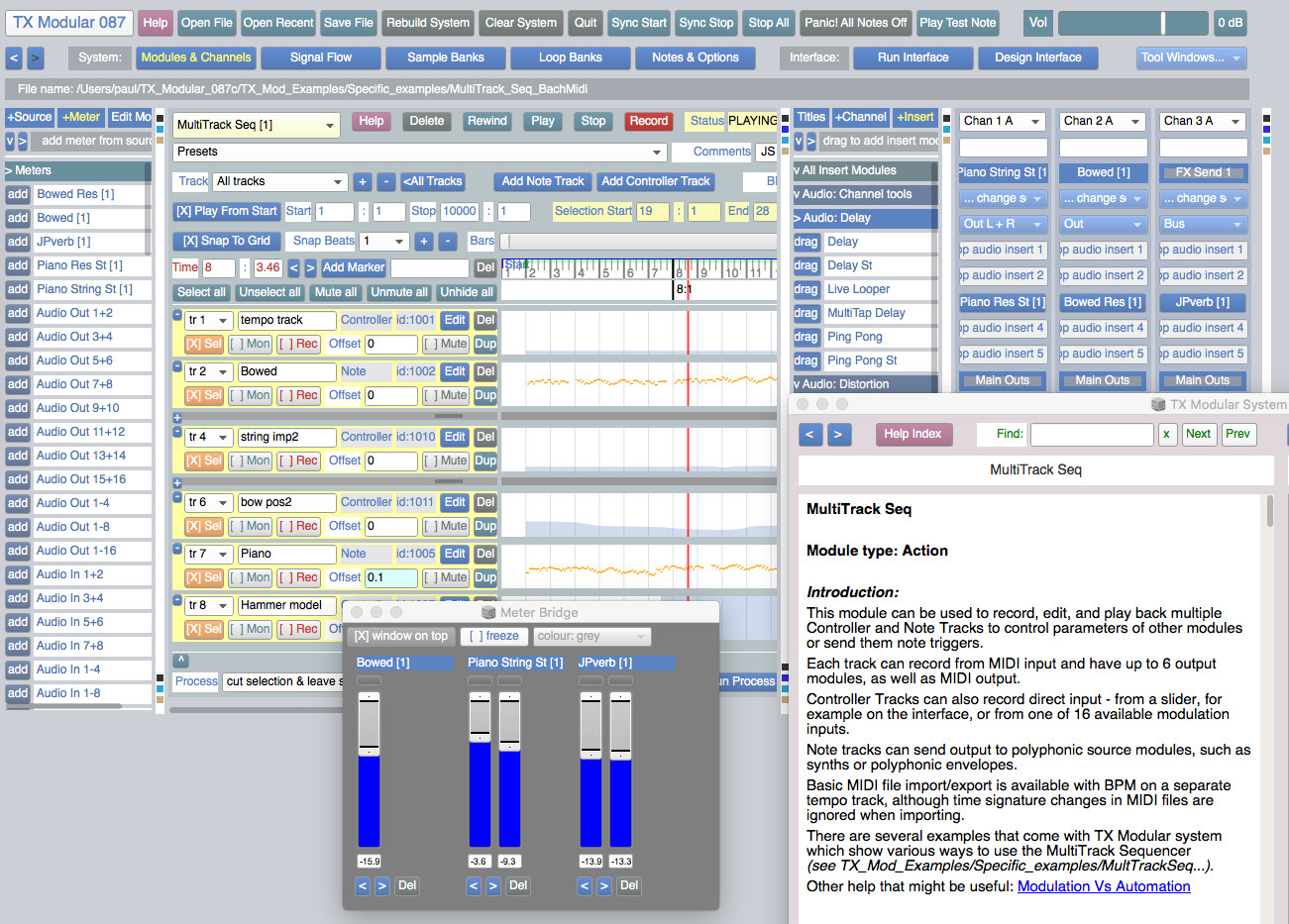

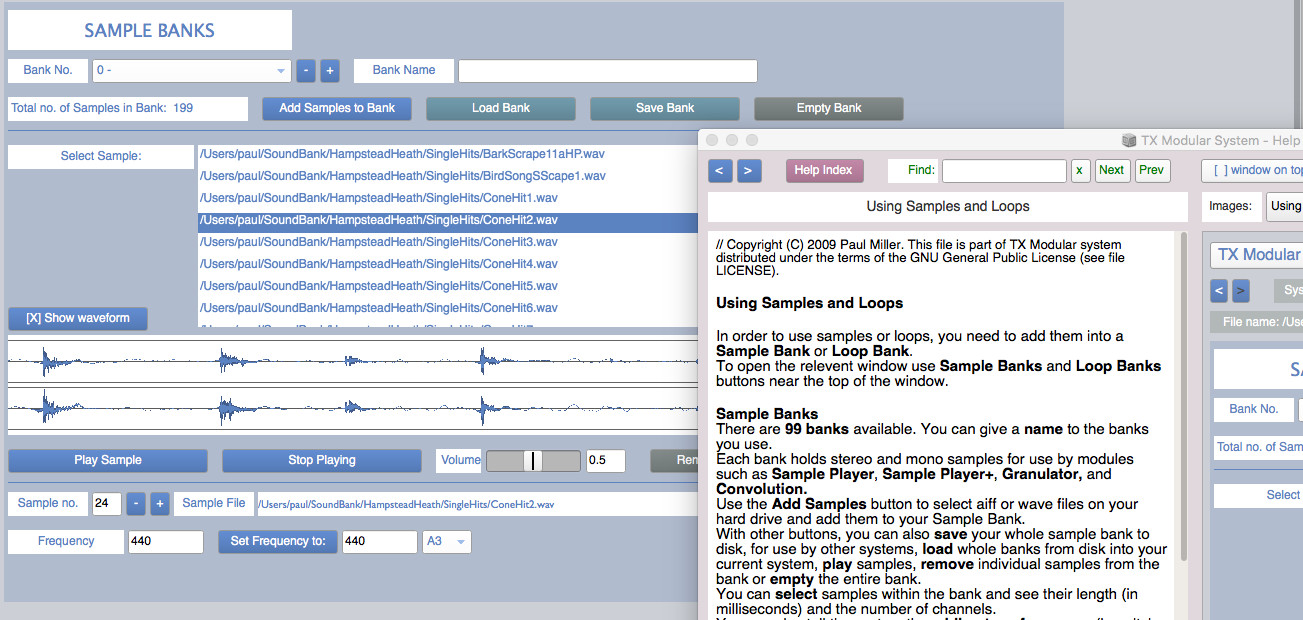

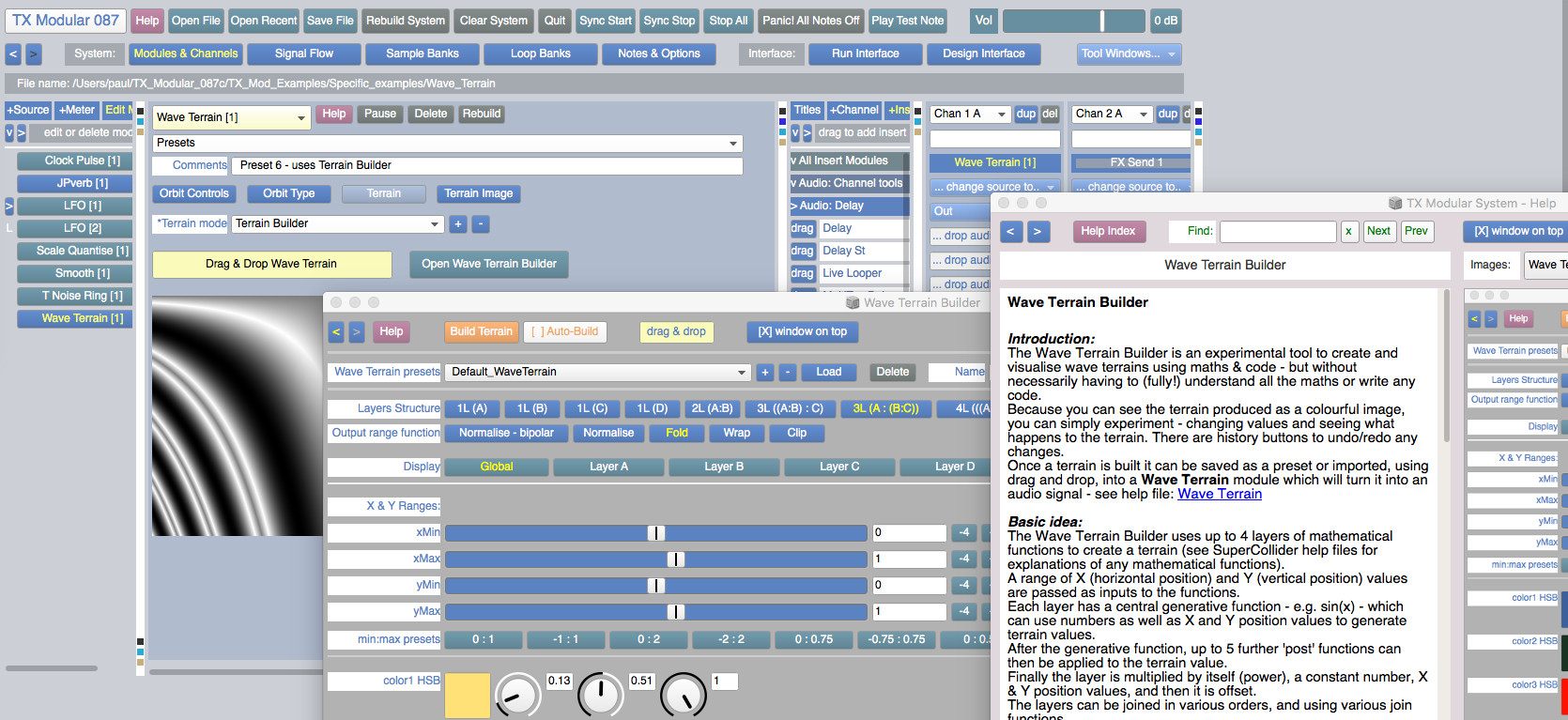

The TX Modular System is open source audio-visual software for modular synthesis and video generation, built with SuperCollider (https://supercollider.github.io) and openFrameworks (https://openFrameworks.cc).

It can be used to build interactive audio-visual systems such as: digital musical instruments, interactive generative compositions with real-time visuals, sound design tools, & live audio-visual processing tools.

This version has been tested on MacOS (10.11) and Windows (10). The audio engine should also work on Linux.

The visual engine, TXV, has only been built so far for MacOS and Windows - it is untested on Linux.

The current TXV MacOS build will only work with Mojave (10.14) or earlier (10.11, 10.12 & 10.13) - but NOT Catalina (10.15) or later.

You don't need to know how to program to use this system. But if you can program in SuperCollider, some modules allow you to edit the SuperCollider code inside - to generate or process audio, add modulation, create animations, or run SuperCollider Patterns.

Systems music is an idea that explores the following question: What if we could, instead of making music, design systems that generate music for us?

This idea has animated artists and composers for a long time and emerges in new forms whenever new technologies are adopted in music-making.

In the 1960s and 70s there was a particularly fruitful period. People like Steve Reich, Terry Riley, Pauline Oliveros, and Brian Eno designed systems that resulted in many landmark works of minimal and ambient music. They worked with the cutting edge technologies of the time: Magnetic tape recorders, loops, and delays.

Today our technological opportunities for making systems music are broader than ever. Thanks to computers and software, they're virtually endless. But to me, there is one platform that's particularly exciting from this perspective: Web Audio. Here we have a technology that combines audio synthesis and processing capabilities with a general purpose programming language: JavaScript. It is a platform that's available everywhere — or at least we're getting there. If we make a musical system for Web Audio, any computer or smartphone in the world can run it.

With Web Audio we can do something Reich, Riley, Oliveros, and Eno could not do all those decades ago: They could only share some of the output of their systems by recording them. We can share the system itself. Thanks to the unique power of the web platform, all we need to do is send a URL.

In this guide we'll explore some of the history of systems music and the possibilities of making musical systems with Web Audio and JavaScript. We'll pay homage to three seminal systems pieces by examining and attempting to recreate them: "It's Gonna Rain" by Steve Reich, "Discreet Music" by Brian Eno, and "Ambient 1: Music for Airports", also by Brian Eno.
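As a rough numeric sketch of the phase-shifting idea behind "It's Gonna Rain" (two copies of the same loop played at slightly different speeds), the plain JavaScript below computes how far apart the two loops have drifted after a given time, and how long they take to line up again. The helper names `phaseOffset` and `resyncTime` and the example numbers are illustrative assumptions, not code from the guide, and no Web Audio calls are made here.

```javascript
// Hypothetical helper: offset (in seconds of loop material) between two
// copies of the same loop after `elapsed` seconds of playback.
function phaseOffset(loopDuration, rateA, rateB, elapsed) {
  const positionA = (elapsed * rateA) % loopDuration;
  const positionB = (elapsed * rateB) % loopDuration;
  // Wrap the difference into the range [0, loopDuration).
  return (((positionB - positionA) % loopDuration) + loopDuration) % loopDuration;
}

// Hypothetical helper: seconds until the two loops are back in sync.
// Returns Infinity when both rates are equal (the loops never drift).
function resyncTime(loopDuration, rateA, rateB) {
  return loopDuration / Math.abs(rateB - rateA);
}

// Assumed example values: a 2-second loop, one copy playing 1% faster.
const loopDuration = 2;
const drift = phaseOffset(loopDuration, 1, 1.01, 50);
const resync = resyncTime(loopDuration, 1, 1.01);
console.log(`drift after 50 s: ${drift.toFixed(2)} s`);
console.log(`loops realign after about ${resync.toFixed(0)} s`);
```

With a 1% speed difference the loops drift apart slowly and take a few minutes to realign, which is the kind of gradual process a phase piece relies on.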

Table of Contents

"Is This for Me?"

How to Read This Guide

The Tools You'll Need

Steve Reich - It's Gonna Rain (1965)

Setting Up itsgonnarain.js

Loading A Sound File

Playing The Sound

Looping The Sound

How Phase Music Works

Setting up The Second Loop

Adding Stereo Panning

Putting It Together: Adding Phase Shifting

Exploring Variations on It's Gonna Rain

Brian Eno - Ambient 1: Music for Airports, 2/1 (1978)

The Notes and Intervals in Music for Airports

Setting up musicforairports.js

Obtaining Samples to Play

Building a Simple Sampler

A System of Loops

Playing Extended Loops

Adding Reverb

Putting It Together: Launching the Loops

Exploring Variations on Music for Airports

Brian Eno - Discreet Music (1975)

Setting up discreetmusic.js

Synthesizing the Sound Inputs

Setting up a Monophonic Synth with a Sawtooth Wave

Filtering the Wave

Tweaking the Amplitude Envelope

Bringing in a Second Oscillator

Emulating Tape Wow with Vibrato

Understanding Timing in Tone.js

Transport Time

Musical Timing

Sequencing the Synth Loops

Adding Echo

Adding Tape Delay with Frippertronics

Controlling Timbre with a Graphic Equalizer

Setting up the Equalizer Filters

Building the Equalizer Control UI

Going Forward

We call them "seeds". Each seed is a machine learning example you can start playing with. Explore, learn and grow them into whatever you like.

An open source collection of 20+ computational design tools for Clojure & ClojureScript by Karsten Schmidt.

In active development since 2012 and totalling almost 39,000 lines of code, the libraries address concepts from many disciplines, including animation, generative design, data analysis / validation / visualization with SVG and WebGL, interactive installations, 2d / 3d geometry, digital fabrication, voxel modeling, rendering, linked data graphs & querying, encryption, and OpenCL computing.

Many of the thi.ng projects (especially the larger ones) are written in a literate programming style and include extensive documentation, diagrams and tests, directly in the source code on GitHub. Each library can be used individually. All projects are licensed under the Apache Software License 2.0.

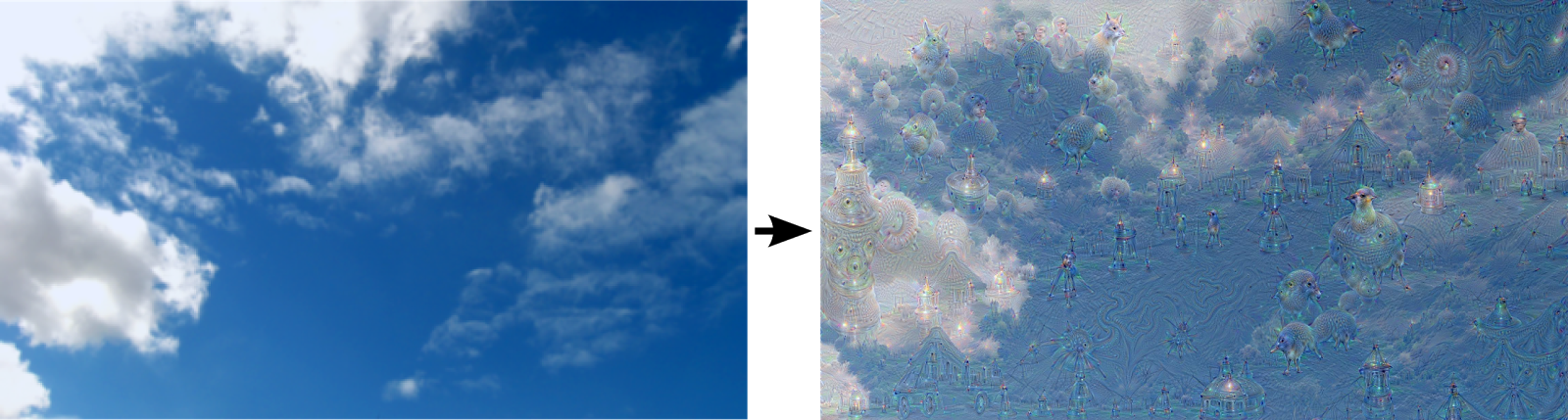

Artificial Neural Networks have spurred remarkable recent progress in image classification and speech recognition. But even though these are very useful tools based on well-known mathematical methods, we actually understand surprisingly little of why certain models work and others don’t. So let’s take a look at some simple techniques for peeking inside these networks.

We train an artificial neural network by showing it millions of training examples and gradually adjusting the network parameters until it gives the classifications we want. The network typically consists of 10-30 stacked layers of artificial neurons. Each image is fed into the input layer, which then talks to the next layer, until eventually the “output” layer is reached. The network’s “answer” comes from this final output layer.
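The layer-by-layer flow described above can be sketched in a few lines of plain JavaScript. This is a toy illustration only: the two-layer network, its hand-picked weights, and the names `denseLayer` and `forward` are assumptions made for the example, and the training step (adjusting the weights) is not shown.

```javascript
// One fully connected layer:
// output[j] = relu(biases[j] + sum over i of weights[j][i] * input[i]).
function denseLayer(input, weights, biases) {
  return weights.map((row, j) => {
    const sum = row.reduce((acc, w, i) => acc + w * input[i], biases[j]);
    return Math.max(0, sum); // ReLU activation
  });
}

// Feed the input through every layer in order; each layer "talks to" the
// next one, and the last layer's output is the network's answer.
function forward(input, layers) {
  return layers.reduce(
    (activations, layer) => denseLayer(activations, layer.weights, layer.biases),
    input
  );
}

// Made-up parameters for a toy network: 3 inputs -> 2 hidden units -> 2 outputs.
const toyLayers = [
  { weights: [[1, 0, -1], [0, 1, 1]], biases: [0, 0] },
  { weights: [[1, 1], [1, -1]], biases: [0, 0.5] },
];

const scores = forward([1, 2, 3], toyLayers);
// The classification is the index of the highest output score.
const predictedClass = scores.indexOf(Math.max(...scores));
console.log(scores, predictedClass);
```

A real image classifier works the same way in outline, but with far more layers and with millions of learned parameters instead of hand-written ones.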

One of the challenges of neural networks is understanding what exactly goes on at each layer. We know that after training, each layer progressively extracts higher and higher-level features of the image, until the final layer essentially makes a decision on what the image shows. For example, the first layer may look for edges or corners. Intermediate layers interpret the basic features to look for overall shapes or components, like a door or a leaf. The final few layers assemble those into complete interpretations; these neurons activate in response to very complex things such as entire buildings or trees.

One way to visualize what goes on is to turn the network upside down and ask it to enhance an input image in such a way as to elicit a particular interpretation. Say you want to know what sort of image would result in “Banana.” Start with an image full of random noise, then gradually tweak the image towards what the neural net considers a banana (see related work in [1], [2], [3], [4]). By itself, that doesn’t work very well, but it does if we impose a prior constraint that the image should have similar statistics to natural images, such as neighboring pixels needing to be correlated.
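A minimal sketch of this "tweak the image, not the network" idea, under strong simplifying assumptions: the trained network is replaced by a fixed linear template (a hypothetical stand-in for a "banana" score), the image is a one-dimensional row of pixels, and the natural-image prior is reduced to a penalty on differences between neighboring pixels. None of this is the authors' implementation; it only shows gradient ascent on the input combined with a smoothness prior.

```javascript
// Toy stand-in for a network's class score: a fixed linear template.
function score(image, template) {
  return image.reduce((acc, pixel, i) => acc + pixel * template[i], 0);
}

// Gradient ascent on the image itself, maximizing
//   score(image) - lambda * (sum of squared neighboring-pixel differences).
function enhance(image, template, { steps = 200, stepSize = 0.05, lambda = 0.5 } = {}) {
  const x = image.slice();
  for (let s = 0; s < steps; s++) {
    const grad = x.map((pixel, i) => {
      let g = template[i]; // derivative of the linear score
      // Derivative of the smoothness penalty pulls a pixel toward its neighbors.
      if (i > 0) g -= 2 * lambda * (pixel - x[i - 1]);
      if (i + 1 < x.length) g -= 2 * lambda * (pixel - x[i + 1]);
      return g;
    });
    for (let i = 0; i < x.length; i++) {
      // Take a small step uphill and keep pixels in the valid [0, 1] range.
      x[i] = Math.min(1, Math.max(0, x[i] + stepSize * grad[i]));
    }
  }
  return x;
}

// Assumed example data: a template that "likes" bright pixels in the middle,
// and a fixed noisy starting image (fixed so the run is reproducible).
const template = [-1, -1, 1, 1, 1, -1, -1];
const noise = [0.9, 0.1, 0.4, 0.8, 0.2, 0.7, 0.3];
const enhanced = enhance(noise, template);
console.log(score(noise, template), score(enhanced, template));
```

Raising `lambda` makes the result smoother at the cost of a lower score, which mirrors the trade-off between eliciting the interpretation and keeping the image natural-looking.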

Okapi is an open-source framework for building digital, generative art in HTML5.

Links to generative artworks

Experiments and explorations of algorithms

Blog of Marius Watz

His works, in Processing and other tools

Open source creative software catalog

The generative art resource