When computer software automatically generates output that is not identical to its own text, some of which is potentially copyrightable and some of which is not, difficult problems arise in deciding to whom ownership rights in the output should be allocated. Applying the traditional authorship tests of copyright law does not yield a clear solution to this problem. In this Article, Professor Samuelson argues that allocating rights in computer-generated output to the user of the generator program is the soundest solution to the dilemma because it is the one most compatible with traditional doctrine and the policies that underlie copyright law.

Artificial Neural Networks have spurred remarkable recent progress in image classification and speech recognition. But even though these are very useful tools based on well-known mathematical methods, we actually understand surprisingly little of why certain models work and others don’t. So let’s take a look at some simple techniques for peeking inside these networks.

We train an artificial neural network by showing it millions of training examples and gradually adjusting the network parameters until it gives the classifications we want. The network typically consists of 10-30 stacked layers of artificial neurons. Each image is fed into the input layer, which then talks to the next layer, until eventually the “output” layer is reached. The network’s “answer” comes from this final output layer.
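To make "each layer talks to the next" concrete, here is a minimal sketch of a forward pass through stacked layers in plain Python. It assumes tiny fully connected layers with ReLU activations and random, untrained weights. Real image classifiers use convolutional layers, and training would be what adjusts these weights. The names `dense_layer`, `forward` and `random_layer` are hypothetical and chosen for illustration.

```python
import math
import random

def dense_layer(inputs, weights, biases):
    """One fully connected layer: a weighted sum per neuron, then ReLU."""
    return [
        max(0.0, sum(w * x for w, x in zip(neuron_w, inputs)) + b)
        for neuron_w, b in zip(weights, biases)
    ]

def softmax(scores):
    """Turn raw output scores into probabilities that sum to 1."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def forward(pixels, layers):
    """Feed a flattened image through the stacked layers in order.

    Hidden layers use ReLU. The final (output) layer produces raw scores,
    which softmax converts into the network's "answer"."""
    activations = pixels
    for weights, biases in layers[:-1]:
        activations = dense_layer(activations, weights, biases)
    out_w, out_b = layers[-1]
    scores = [sum(w * a for w, a in zip(row, activations)) + b
              for row, b in zip(out_w, out_b)]
    return softmax(scores)

def random_layer(n_in, n_out, rng):
    """Untrained layer with small random weights (training would adjust these)."""
    weights = [[rng.gauss(0.0, 0.5) for _ in range(n_in)] for _ in range(n_out)]
    return weights, [0.0] * n_out

rng = random.Random(0)
# A toy 4x4 grayscale "image" flattened to 16 inputs, two hidden layers, 3 classes.
layers = [random_layer(16, 8, rng), random_layer(8, 8, rng), random_layer(8, 3, rng)]
image = [rng.random() for _ in range(16)]
probs = forward(image, layers)
print(probs)
```

Because the weights are random, the probabilities printed here are meaningless. The point of the sketch is the data flow from the input layer, through each hidden layer, to the output layer.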

One of the challenges of neural networks is understanding what exactly goes on at each layer. We know that after training, each layer progressively extracts higher and higher-level features of the image, until the final layer essentially makes a decision on what the image shows. For example, the first layer may look for edges or corners. Intermediate layers interpret the basic features to look for overall shapes or components, like a door or a leaf. The final few layers assemble those into complete interpretations: these neurons activate in response to very complex things such as entire buildings or trees.
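As an illustration of what an "edge-detecting" first-layer feature does, the sketch below slides a 3x3 filter over a tiny image. It assumes a hand-chosen Sobel kernel rather than a learned one, although trained first layers often end up with edge-like filters of this kind. `convolve2d` is a hypothetical helper. It computes the "valid" cross-correlation that convolutional layers actually apply.

```python
# Hand-picked Sobel kernel that responds to left-to-right brightness changes.
SOBEL_X = [[-1, 0, 1],
           [-2, 0, 2],
           [-1, 0, 1]]

def convolve2d(image, kernel):
    """Valid (no padding) 2-D cross-correlation, as used in CNN layers."""
    kh, kw = len(kernel), len(kernel[0])
    h, w = len(image), len(image[0])
    out = []
    for i in range(h - kh + 1):
        row = []
        for j in range(w - kw + 1):
            # Weighted sum of the kh x kw patch under the kernel.
            acc = 0
            for di in range(kh):
                for dj in range(kw):
                    acc += kernel[di][dj] * image[i + di][j + dj]
            row.append(acc)
        out.append(row)
    return out

# A 5x6 image: dark left half, bright right half, so one vertical edge.
image = [[0, 0, 0, 1, 1, 1] for _ in range(5)]
edges = convolve2d(image, SOBEL_X)
print(edges)  # [[0, 4, 4, 0], [0, 4, 4, 0], [0, 4, 4, 0]]
```

The response is zero over flat regions and large only where the filter straddles the dark-to-bright boundary. A "neuron" built from this filter therefore fires on edges.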

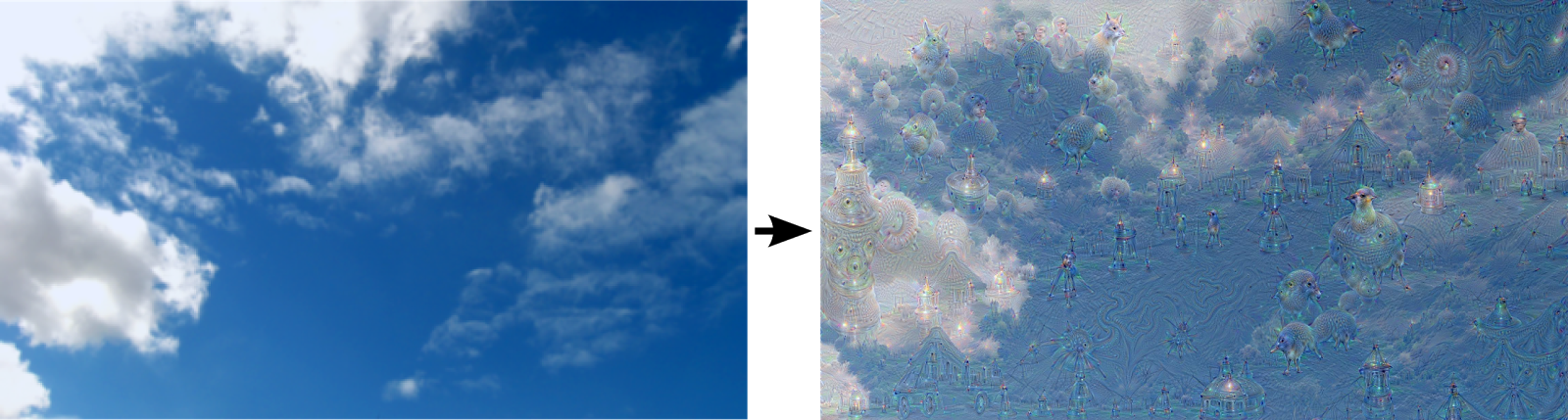

One way to visualize what goes on is to turn the network upside down and ask it to enhance an input image in such a way as to elicit a particular interpretation. Say you want to know what sort of image would result in “Banana.” Start with an image full of random noise, then gradually tweak the image towards what the neural net considers a banana (see related work in [1], [2], [3], [4]). By itself, that doesn’t work very well, but it does if we impose a prior constraint that the image should have similar statistics to natural images, such as neighboring pixels needing to be correlated.
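The procedure described above, starting from noise and nudging the image toward a class while enforcing a "neighboring pixels are correlated" prior, can be sketched as gradient ascent on the input image. This is a toy under stated assumptions. The "network" is replaced by a hypothetical linear template (`template`), so the gradient of the class score with respect to each pixel is simply that pixel's weight. The natural-image prior is modelled here as a squared-difference penalty between adjacent pixels, which is one simple choice and not necessarily the one used in the original work. All function names are illustrative.

```python
import random

def neighbor_pairs(h, w):
    """Yield index pairs of horizontally and vertically adjacent pixels."""
    for i in range(h):
        for j in range(w):
            if j + 1 < w:
                yield (i, j), (i, j + 1)
            if i + 1 < h:
                yield (i, j), (i + 1, j)

def class_score(img, weights):
    # Stand-in for the network's class output: a fixed linear template.
    return sum(weights[i][j] * img[i][j]
               for i in range(len(img)) for j in range(len(img[0])))

def smoothness_penalty(img):
    # Prior: neighbouring pixels should have similar values.
    return sum((img[a][b] - img[c][d]) ** 2
               for (a, b), (c, d) in neighbor_pairs(len(img), len(img[0])))

def objective(img, weights, lam):
    return class_score(img, weights) - lam * smoothness_penalty(img)

def visualize_class(weights, lam=0.5, lr=0.1, steps=200, seed=0):
    """Gradient ascent on the *input image*, starting from random noise."""
    rng = random.Random(seed)
    h, w = len(weights), len(weights[0])
    img = [[rng.random() for _ in range(w)] for _ in range(h)]
    for _ in range(steps):
        # Gradient of the linear score w.r.t. each pixel is just its weight.
        grad = [row[:] for row in weights]
        # Add the gradient of -lam * (x_p - x_q)^2 for every neighbour pair.
        for (a, b), (c, d) in neighbor_pairs(h, w):
            diff = img[a][b] - img[c][d]
            grad[a][b] -= 2 * lam * diff
            grad[c][d] += 2 * lam * diff
        # Step uphill, then clip pixels back into the valid [0, 1] range.
        img = [[min(1.0, max(0.0, img[i][j] + lr * grad[i][j]))
                for j in range(w)] for i in range(h)]
    return img

# Hypothetical 8x8 "class template": bright centre square, dark surround.
template = [[1.0 if 2 <= i < 6 and 2 <= j < 6 else -1.0 for j in range(8)]
            for i in range(8)]
noise = visualize_class(template, steps=0)  # the random starting image
result = visualize_class(template)
```

Dropping the penalty (`lam=0`) drives each pixel independently to 0 or 1, which mirrors the observation above that the naive approach "doesn't work very well". The prior term is what pulls the result toward smooth, image-like structure.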

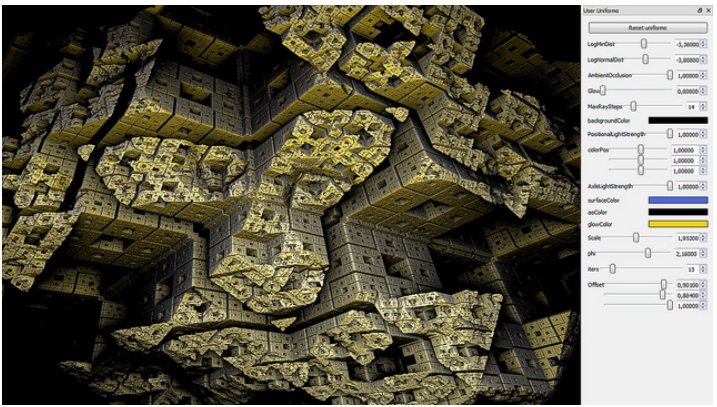

Fragmentarium is an open-source, cross-platform IDE for exploring pixel-based graphics on the GPU. It is inspired by Adobe's Pixel Bender, but uses GLSL, and was created specifically with fractals and generative systems in mind.
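In Fragmentarium, shaders are written in GLSL and run on the GPU. As a rough CPU-side illustration of the "pixel-based" model, the Python sketch below evaluates one independent function per pixel, much as a fragment shader's `main()` runs once for every pixel. The `fragment` and `render` names, the Mandelbrot escape-time shading, and the coordinate window are illustrative assumptions, not Fragmentarium's API.

```python
def fragment(px, py, width, height, max_iter=50):
    """Per-pixel function, analogous to a fragment shader:
    returns a brightness in [0, 1] for a single pixel."""
    # Map pixel coordinates onto the complex-plane window [-2, 1] x [-1.5, 1.5].
    c = complex(-2.0 + 3.0 * px / width, -1.5 + 3.0 * py / height)
    z = 0j
    for n in range(max_iter):
        z = z * z + c
        if abs(z) > 2.0:
            return n / max_iter  # escaped: shade by iteration count
    return 1.0  # never escaped: treat as inside the Mandelbrot set

def render(width, height):
    # On a GPU every fragment() call would run in parallel; here we just loop.
    return [[fragment(x, y, width, height) for x in range(width)]
            for y in range(height)]

img = render(30, 20)
for row in img:
    print("".join("#" if v == 1.0 else "." for v in row))
```

Because no pixel depends on any other, the work parallelizes trivially. That independence is what makes GPUs well suited to this style of fractal rendering.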

Born in 1982. His works, centring on real-time processed, computer-programmed audiovisual installations, have been shown at national and international art exhibitions as well as at media art festivals. He has received many awards, including the Excellence Prize at the Japan Media Arts Festival in 2004 and the Award of Distinction at Prix Ars Electronica in 2008. Active across a wide range of projects, he has worked on the production of a concert piece for Ryoji Ikeda, collaborated with Yoshihide Otomo, Yuki Kimura and Benedict Drew, participated in the Lexus Art Exhibition at Milan Design Week, and has started performing live as Typingmonkeys.

Stripgenerator is a free-of-charge project created to embrace internet blogging and strip-creation culture, helping people with no drawing ability express their opinions through strips. Yeah, I am one of them as well.

The ad generator is a generative artwork that explores how advertising uses and manipulates language. Words and semantic structures from real corporate slogans are remixed and randomized to generate invented slogans.
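The remix-and-randomize idea can be sketched as filling slot-based templates with randomly chosen words. This assumes a much simpler model than the artwork itself. The templates stand in for "semantic structures", and the word lists are hypothetical placeholders rather than material drawn from real corporate slogans. `fill_slots`, `capitalize_sentences` and `generate_slogan` are illustrative names.

```python
import random
import re

# Hypothetical slot-based templates standing in for slogan "structures".
TEMPLATES = [
    "{verb} the {noun}.",
    "The {adj} way to {verb}.",
    "{adj} {noun}. {adj} you.",
]
# Hypothetical vocabulary; the artwork draws its words from real slogans.
WORDS = {
    "verb": ["imagine", "connect", "discover", "taste"],
    "noun": ["future", "difference", "moment", "world"],
    "adj": ["better", "smarter", "pure", "endless"],
}

def fill_slots(template, rng):
    """Replace every {slot} with an independently chosen word of that type."""
    return re.sub(r"\{(\w+)\}", lambda m: rng.choice(WORDS[m.group(1)]), template)

def capitalize_sentences(text):
    """Upper-case the first letter of each sentence."""
    return re.sub(r"(^|\. )([a-z])", lambda m: m.group(1) + m.group(2).upper(), text)

def generate_slogan(rng):
    return capitalize_sentences(fill_slots(rng.choice(TEMPLATES), rng))

rng = random.Random(42)
for _ in range(3):
    print(generate_slogan(rng))
```

Seeding the random generator makes a run reproducible. Leaving it unseeded produces a fresh slogan each time, which is closer to the spirit of a generative piece.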